In recent years, one of the most significant challenges in robotics has been the development of machines that can truly perceive the world as humans do. While robots have become increasingly adept at performing specific tasks, such as object recognition or navigation within structured environments, achieving generalized perception remains elusive. In this context, self-supervised learning (SSL) has emerged as a promising approach. This article explores how self-supervised learning might hold the key to creating robots with generalized perception, capable of interacting with the world in a more flexible, human-like manner.

Understanding Self-Supervised Learning

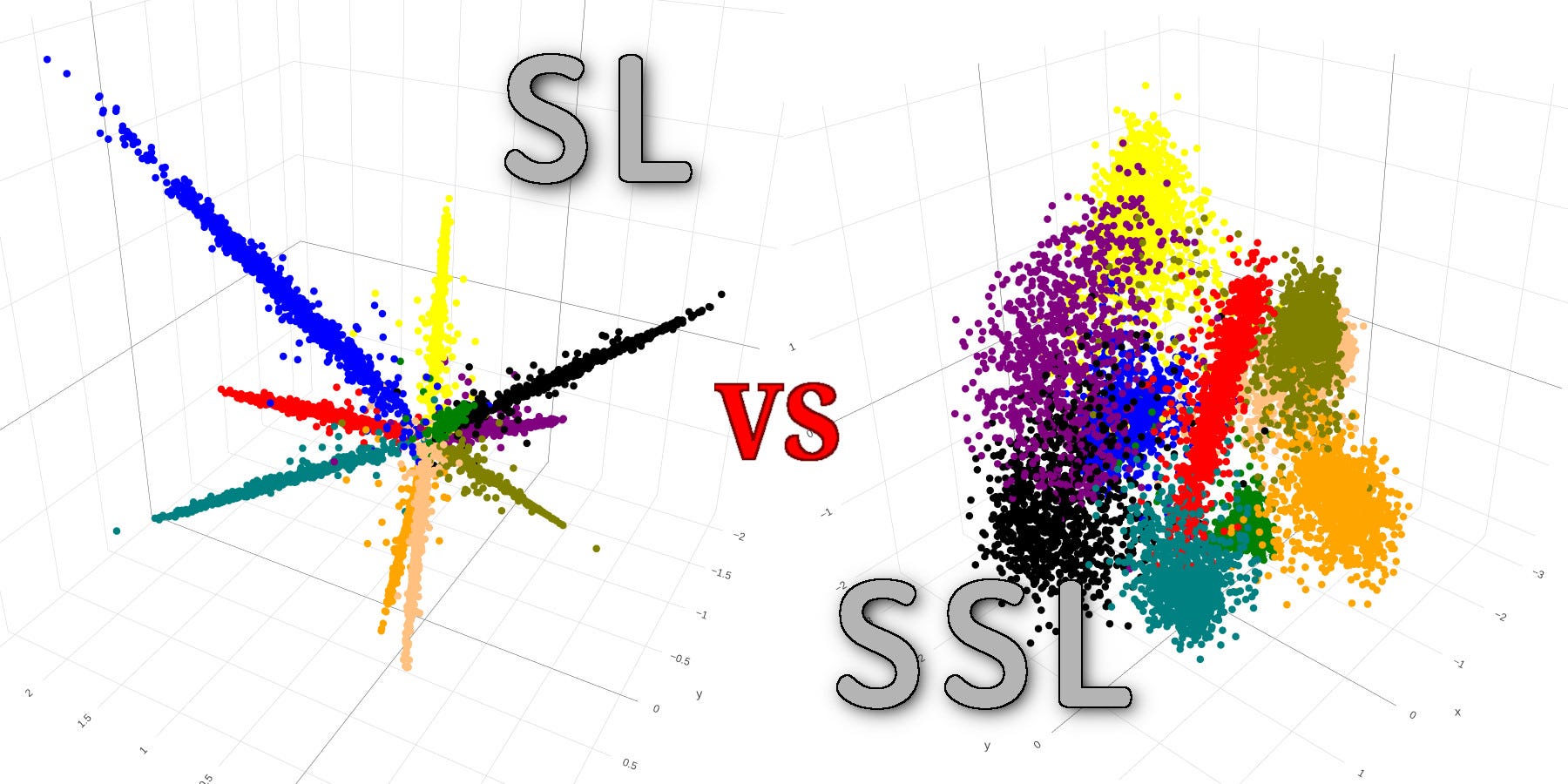

Self-supervised learning is a branch of machine learning in which a model learns without manually labeled data, typically by predicting parts of its input from other parts. This stands in stark contrast to traditional supervised learning, where a model is trained on a labeled dataset in which each input is paired with a ground-truth label. In SSL, the system generates its own supervision signal from structure already present in the data. This lets the model train on vast amounts of unlabeled data, making it more scalable and less reliant on costly human annotation.
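To make "the system generates its own supervision signal" concrete, here is a minimal Python sketch of a masked-prediction pretext task. It assumes the input is a simple sequence, such as a stream of sensor readings. The function name `make_masked_examples` and the `MASK` placeholder are hypothetical; a real system would feed these examples to a neural network rather than print them.

```python
from typing import List, Optional, Sequence, Tuple, TypeVar

T = TypeVar("T")

# Hypothetical placeholder marking the hidden element.
# (Assumes the raw data itself never contains None.)
MASK = None


def make_masked_examples(
    sequence: Sequence[T],
) -> List[Tuple[List[Optional[T]], int, T]]:
    """Turn one unlabeled sequence into (masked_input, position, target) examples.

    Each example hides a single element; the hidden element itself becomes
    the training target, so no human annotation is needed.
    """
    examples = []
    for i, value in enumerate(sequence):
        masked: List[Optional[T]] = list(sequence)
        masked[i] = MASK
        examples.append((masked, i, value))
    return examples


readings = [0.2, 0.4, 0.6, 0.8]  # e.g. raw, unlabeled sensor samples
for masked, pos, target in make_masked_examples(readings):
    print(masked, "-> predict", target, "at index", pos)
```

The "label" for every example is taken from the data itself, which is exactly what lets SSL scale to unannotated recordings.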

Classic examples of self-supervised learning in vision include predicting the spatial context of an image patch (for instance, where it sits relative to a neighboring patch) and predicting the temporal order of frames in a video. More recent methods rely on contrastive learning or on reconstructing masked image regions. The key idea is to extract representations that capture the relationships and structures within the data, which can later be used for downstream tasks such as classification, segmentation, or action recognition.
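As a hedged illustration of the video example, the sketch below generates training examples for a frame-order verification task: a model would be asked whether three frames appear in their true temporal order. Only frame indices are produced here. The function name and the swap-based negatives are illustrative choices, not a specific published recipe.

```python
import random
from typing import List, Tuple


def frame_order_examples(
    num_frames: int, num_examples: int, seed: int = 0
) -> List[Tuple[Tuple[int, int, int], int]]:
    """Sample frame-index triplets labeled 1 if in temporal order, else 0.

    The labels come from the video's own timeline, so no annotation is needed.
    Requires num_frames >= 3.
    """
    rng = random.Random(seed)
    examples = []
    for _ in range(num_examples):
        # Three distinct frame indices, sorted so that a < b < c.
        a, b, c = sorted(rng.sample(range(num_frames), 3))
        if rng.random() < 0.5:
            examples.append(((a, b, c), 1))  # correct temporal order
        else:
            examples.append(((b, a, c), 0))  # first two frames swapped
    return examples


for frames, label in frame_order_examples(num_frames=100, num_examples=4):
    print(frames, label)
```

A network trained to tell positives from negatives must pick up on motion and scene dynamics, which is the kind of representation the downstream tasks can reuse.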

Generalized Perception: The Holy Grail of Robotics

Perception in robotics refers to a machine’s ability to interpret and understand the surrounding environment. This process involves several subcomponents, including object recognition, depth estimation, scene understanding, and even emotion recognition in human-robot interaction. Generalized perception, however, refers to a system’s ability to handle diverse environments and situations without requiring task-specific programming or vast amounts of labeled data.

For example, imagine a robot navigating a house. It needs to understand not only that a chair is a chair but also that the chair plays different roles in different contexts: a place to sit, or an obstacle to steer around. The challenge lies in designing a robot that doesn’t just recognize chairs in a controlled environment but can also generalize this understanding to new, unseen contexts, such as a park, a different house, or a more chaotic space like a busy street.

Traditionally, robots have been limited to “narrow” perception tasks, performing well in specific, structured environments but faltering when faced with variability. This is where SSL could make a substantial difference.

The Role of Self-Supervised Learning in Generalized Perception

One of the fundamental benefits of self-supervised learning is its ability to extract rich, generalizable features from unlabeled data. For robots, this can translate into more flexible, robust perception. Here are four ways SSL could shape the future of robot perception:

1. Learning from Unlabeled Data

The real-world environments robots must navigate are diverse and constantly changing, and the sensor data they produce is correspondingly vast. Labeling that data by hand is labor-intensive and expensive, which makes it impractical for large-scale deployment. SSL sidesteps this limitation by enabling robots to learn directly from raw, unlabeled data. For instance, a robot can process video feeds, images, or other sensor inputs and begin to recognize patterns or structures without needing specific annotations.

By learning from these vast amounts of unlabeled data, robots can develop a more nuanced understanding of the environment, one that is not limited to the narrow tasks for which they were explicitly trained.
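One widely used way to learn from unlabeled sensor data is contrastive learning. Two views of the same raw input (for example, two augmented crops of one camera frame) should map to nearby embeddings, while views of different inputs should not. The pure-Python sketch below computes a simplified form of the InfoNCE objective that underlies contrastive methods such as SimCLR. It assumes the embeddings have already been produced by some encoder. The encoder, the augmentations, and the training loop are omitted, and the toy vectors are made up.

```python
import math
from typing import List, Sequence


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm


def info_nce(
    anchors: List[Sequence[float]],
    positives: List[Sequence[float]],
    temperature: float = 0.1,
) -> float:
    """Mean InfoNCE loss: anchors[i] should match positives[i] and no other.

    All other entries of `positives` act as negatives for anchors[i].
    """
    total = 0.0
    for i, anchor in enumerate(anchors):
        logits = [cosine(anchor, p) / temperature for p in positives]
        # Log-sum-exp with max subtraction for numerical stability.
        m = max(logits)
        log_denom = m + math.log(sum(math.exp(z - m) for z in logits))
        total += log_denom - logits[i]  # -log softmax probability of the true pair
    return total / len(anchors)


view_a = [[1.0, 0.0], [0.0, 1.0]]
view_b_aligned = [[0.9, 0.1], [0.1, 0.9]]   # second views agree with the first
view_b_swapped = [[0.1, 0.9], [0.9, 0.1]]   # second views point the wrong way
print(info_nce(view_a, view_b_aligned))  # small loss
print(info_nce(view_a, view_b_swapped))  # large loss
```

Minimizing this loss over many unlabeled pairs pushes the encoder to keep what the two views share and discard nuisance variation, with no human labels involved.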

2. Improving Transfer Learning

One of the key challenges in robotics is transfer learning: the ability to apply learned knowledge to new, unseen tasks. Self-supervised pretraining can help here because it produces representations that capture general structure in the data rather than the specifics of one labeled task. These representations can be reused across a variety of downstream tasks, allowing robots to adapt to new environments and scenarios with less retraining.

For example, a robot trained in one kitchen could potentially transfer its understanding of objects, spatial relationships, and human actions to another kitchen, or even a completely different environment like a warehouse or office, with minimal fine-tuning.
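A common way to exploit (and measure) transfer is to keep the self-supervised encoder frozen and fit only a lightweight head on a handful of labeled examples from the new setting. Linear probes are the usual choice; the sketch below swaps in a nearest-centroid classifier for brevity. `frozen_encoder` is a hypothetical placeholder that only normalizes its input, so the example shows the workflow, not real learned features.

```python
import math
from typing import Dict, List, Sequence, Tuple


def frozen_encoder(observation: Sequence[float]) -> Tuple[float, ...]:
    """Hypothetical stand-in for an SSL-pretrained encoder (kept frozen).

    Here it just L2-normalizes the input; a real encoder would be a network.
    """
    norm = math.sqrt(sum(x * x for x in observation)) or 1.0
    return tuple(x / norm for x in observation)


def fit_centroids(
    labeled: List[Tuple[Sequence[float], str]]
) -> Dict[str, Tuple[float, ...]]:
    """Average the frozen features of a few labeled examples per class."""
    sums: Dict[str, List[float]] = {}
    counts: Dict[str, int] = {}
    for obs, label in labeled:
        feat = frozen_encoder(obs)
        acc = sums.setdefault(label, [0.0] * len(feat))
        for j, x in enumerate(feat):
            acc[j] += x
        counts[label] = counts.get(label, 0) + 1
    return {k: tuple(x / counts[k] for x in v) for k, v in sums.items()}


def predict(centroids: Dict[str, Tuple[float, ...]], obs: Sequence[float]) -> str:
    """Return the class whose centroid is closest in feature space."""
    feat = frozen_encoder(obs)
    return min(
        centroids,
        key=lambda k: sum((a - b) ** 2 for a, b in zip(feat, centroids[k])),
    )


# Only three labeled examples from the "new kitchen" (toy data).
few_shot = [([5.0, 1.0], "mug"), ([4.0, 0.5], "mug"), ([0.5, 6.0], "box")]
centroids = fit_centroids(few_shot)
print(predict(centroids, [3.0, 0.2]))  # -> mug
```

Because the encoder is never updated, adapting to a new environment costs only a few labeled samples and a cheap fit, which is the practical appeal of transferable SSL features.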

3. Learning Temporal and Spatial Features

Self-supervised learning techniques, especially in vision and robotics, often involve predicting future observations or the missing context of a scene. This helps robots learn to perceive not just individual objects but also the temporal and spatial relationships between them, which is crucial for tasks like object manipulation, path planning, and human-robot collaboration.

For example, SSL could allow a robot to anticipate a person’s next movement and adjust its actions accordingly. Similarly, by understanding the spatial relationships in a room or outdoor space, a robot could autonomously plan a path through a dynamic environment, avoiding obstacles or interacting with objects in a more natural way.
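The self-supervised trick behind anticipation is that the future supplies its own label: in a recorded track, the position at step t+1 is the target for step t. The minimal sketch below fits a one-dimensional linear predictor, x[t+1] ≈ a * x[t] + b, by ordinary least squares. It assumes a single tracked coordinate of a person. Real systems would use richer models (for example, neural networks over full body poses), and the function names are illustrative.

```python
from typing import List, Sequence, Tuple


def next_step_pairs(track: Sequence[float]) -> List[Tuple[float, float]]:
    """Each observation's 'label' is simply the observation that follows it."""
    return [(track[t], track[t + 1]) for t in range(len(track) - 1)]


def fit_next_step(track: Sequence[float]) -> Tuple[float, float]:
    """Least-squares fit of x[t+1] ~ a * x[t] + b from an unlabeled track.

    Needs at least three samples whose inputs are not all identical,
    otherwise the variance below is zero.
    """
    pairs = next_step_pairs(track)
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    var_x = sum((x - mean_x) ** 2 for x, _ in pairs)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    a = cov_xy / var_x
    b = mean_y - a * mean_x
    return a, b


# Positions (in metres) of a person walking at a steady pace (toy data).
positions = [0.0, 0.5, 1.0, 1.5, 2.0]
a, b = fit_next_step(positions)
print(a * positions[-1] + b)  # -> 2.5
```

No one annotated "where the person goes next"; the recording itself provided every target, which is what makes this kind of predictive training cheap to scale.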

4. Creating a Rich Representation of the Environment

A robot’s ability to perceive its environment is not just about recognizing objects—it’s about understanding the relationships between those objects. For instance, recognizing that a cup is on a table is important, but understanding that the cup can be lifted or moved is even more critical. Self-supervised learning allows robots to develop these rich environmental representations by learning from the context in which objects appear and interact with each other.

This type of learning is particularly powerful in robotics because it gives machines the flexibility to adapt to new situations, rather than just following predefined rules. Robots that can generate their own internal representations of the world are more likely to generalize well across a variety of tasks and environments.
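The idea that an object’s meaning can be learned from the context in which it appears can be illustrated with a deliberately simple stand-in. Each object is represented by counts of the other objects observed in the same scenes, and objects are compared by cosine similarity. This co-occurrence heuristic is chosen for readability and is not a modern SSL method. It assumes object names are already available (for example, from a detector), and the scene lists below are made-up toy data.

```python
import math
from collections import Counter
from typing import Dict, List


def cooccurrence_vectors(scenes: List[List[str]]) -> Dict[str, Counter]:
    """Represent each object by counts of the other objects seen with it."""
    vectors: Dict[str, Counter] = {}
    for scene in scenes:
        for obj in scene:
            ctx = vectors.setdefault(obj, Counter())
            for other in scene:
                if other != obj:
                    ctx[other] += 1
    return vectors


def similarity(vectors: Dict[str, Counter], a: str, b: str) -> float:
    """Cosine similarity between two objects' context-count vectors."""
    va, vb = vectors[a], vectors[b]
    dot = sum(va[k] * vb[k] for k in va)  # missing keys in a Counter count as 0
    na = math.sqrt(sum(v * v for v in va.values()))
    nb = math.sqrt(sum(v * v for v in vb.values()))
    return dot / (na * nb) if na and nb else 0.0


scenes = [
    ["cup", "table", "kettle"],
    ["mug", "table", "kettle"],
    ["cup", "sink", "table"],
    ["mug", "sink"],
    ["car", "road", "tree"],
]
vecs = cooccurrence_vectors(scenes)
print(similarity(vecs, "cup", "mug"))  # high: shared kitchen context
print(similarity(vecs, "cup", "car"))  # 0.0: no shared context
```

Without any label saying that cups and mugs are related, their shared surroundings already pull their representations together; learned SSL encoders exploit the same signal with far richer inputs.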

Challenges and Future Directions

While SSL offers considerable promise, there are still several challenges to overcome. The complexity of real-world environments means that robots must process vast amounts of sensory data in real time, which requires significant computational resources. Additionally, while SSL excels at learning from unlabeled data, it still requires a large quantity of high-quality data to achieve truly generalized perception.

Moreover, the lack of explicit supervision in SSL means that the learned representations may sometimes be ambiguous or difficult to interpret, which can pose challenges in safety-critical applications. For example, if a robot misinterprets an object or mispredicts a human action, the consequences could be dangerous, especially in environments like healthcare or manufacturing.

To address these challenges, future research will likely focus on improving the efficiency of SSL algorithms, making them more suitable for real-time robotic applications. In addition, integrating multimodal data (e.g., vision, sound, and tactile feedback) could give robots a more holistic view of the world, further enhancing their ability to generalize across tasks.
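One way SSL can exploit several modalities at once is a correspondence task: given a camera frame and a short audio (or tactile) window, predict whether they were recorded at the same moment. The hedged sketch below only generates the index triples for such a task. It assumes the streams are already time-aligned on a shared step counter, and the function name is hypothetical.

```python
import random
from typing import List, Tuple


def correspondence_examples(
    num_steps: int, num_examples: int, seed: int = 0
) -> List[Tuple[int, int, int]]:
    """Return (camera_step, audio_step, label) triples; label 1 means same moment."""
    if num_steps < 2:
        raise ValueError("need at least two time steps to form negatives")
    rng = random.Random(seed)
    examples = []
    for _ in range(num_examples):
        t = rng.randrange(num_steps)
        if rng.random() < 0.5:
            examples.append((t, t, 1))  # synchronized pair
        else:
            # Draw from the other num_steps - 1 steps so the pair never matches.
            other = rng.randrange(num_steps - 1)
            if other >= t:
                other += 1
            examples.append((t, other, 0))
    return examples


for cam, aud, label in correspondence_examples(num_steps=20, num_examples=4):
    print(cam, aud, label)
```

To solve this task, a model must learn which sounds or touches go with which sights, a cross-modal link that no annotator had to provide.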

The Road Ahead: Bridging the Gap Between Vision and Action

The future of robot perception lies in the seamless integration of vision, action, and learning. Self-supervised learning is not a silver bullet, but it holds the potential to significantly improve a robot’s ability to perceive the world and act in a more human-like manner. By enabling robots to learn from their surroundings autonomously, SSL can empower them to adapt to new environments, handle more complex tasks, and ultimately become more integrated into human-centric settings.

Looking ahead, we may see robots that not only understand their environment but can also predict future events, collaborate more naturally with humans, and operate in dynamic, unstructured environments. As research in self-supervised learning and robotics progresses, we may see machines that are more autonomous, more adaptable, and closer to perceiving the world as we do.

Conclusion

Self-supervised learning holds immense potential for advancing generalized robot perception. By allowing robots to learn from unlabeled data, SSL empowers machines to develop flexible, robust, and transferable representations of their environment. While challenges remain, the continued development of SSL techniques promises to unlock a new era of intelligent, adaptable robots capable of performing complex tasks across a wide range of environments. The future of robotics may be one where machines not only see the world but understand it.