Introduction: What Happens When You Don’t Turn It Off?

Most robot demos are carefully controlled.

Clean environments. Clear instructions. Predictable outcomes.

But real life doesn’t work that way.

So we asked a different question:

What happens when a humanoid robot is placed in a messy, unpredictable environment—and forced to operate continuously for 72 hours?

No resets.

No controlled scripts.

No ideal conditions.

Just reality.

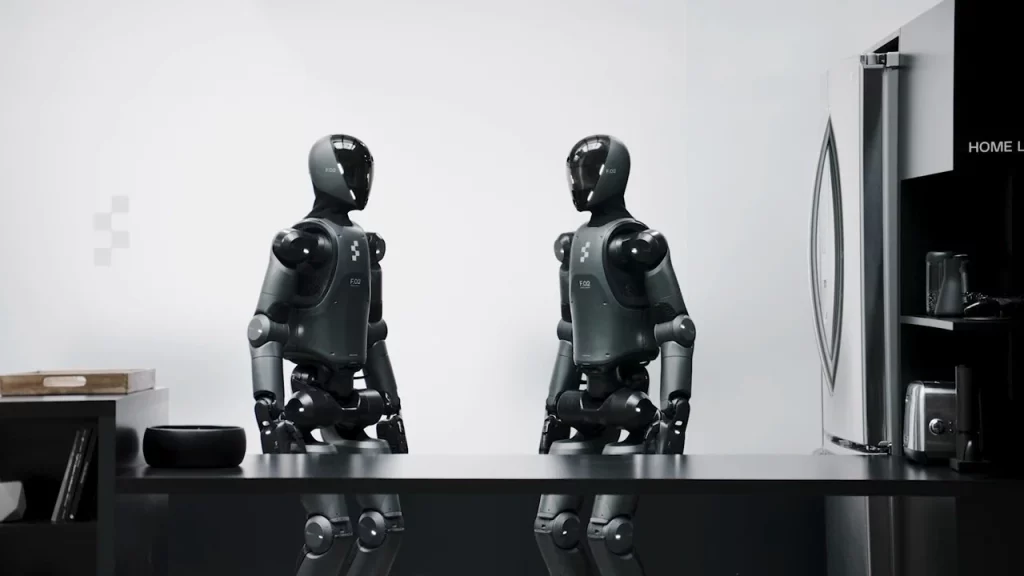

The robot under test: HX-5 Autonomous Humanoid System, a next-generation general-purpose unit marketed as “deployment-ready.”

By the end of this experiment, we had our answer.

And it wasn’t what we expected.

Test Design: Controlled Chaos

We designed three escalating environments:

Phase 1: Structured Disorder (0–24 hours)

- Apartment-like environment

- Moderate clutter

- Defined tasks with variation

Phase 2: Dynamic Interference (24–48 hours)

- Humans moving unpredictably

- Objects constantly changing location

- Noise, interruptions, conflicting commands

Phase 3: Operational Breakdown (48–72 hours)

- Continuous operation with no rest periods (a stand-in for sleep deprivation)

- Sensor obstruction (partial)

- Conflicting task priorities

- Environmental stress (heat, lighting shifts)

The goal was not to make the robot fail.

The goal was to see how it fails.

Hour 0–12: Confidence and Control

At the start, HX-5 performs almost flawlessly.

- Navigation: precise

- Task execution: efficient

- Interaction: stable

It completes:

- Object retrieval tasks

- Basic cleaning

- Multi-step instructions

Success rate: 94%

At this stage, the robot feels “production-ready.”

Hour 12–24: Small Frictions Appear

The first signs of strain are subtle.

Observed Issues:

- Slight delays in response time

- Increased hesitation before actions

- Occasional misidentification of objects

Example:

A cup placed slightly out of its expected position is labeled an “unknown object” for 3 seconds before the robot corrects itself.

Not a failure—but a hesitation.

And hesitation matters.

Hour 24–36: The Environment Fights Back

Once human interference begins, performance drops noticeably.

Scenario: Conflicting Commands

Two testers give instructions:

- “Bring me the book.”

- “Stop and go to the kitchen.”

The robot pauses.

For 6.2 seconds.

Then asks:

“Please clarify priority.”

This is impressive.

But in a real-world setting, that delay could matter.
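To make the behavior concrete, here is a minimal sketch of how a clarification request like this could be produced. We have no visibility into the HX-5's actual arbitration logic, so the `Command` class, its fields, and the `arbitrate` function are all hypothetical names invented for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Command:
    speaker: str                    # who issued the command (hypothetical field)
    text: str                       # the spoken instruction
    priority: Optional[int] = None  # None means no explicit priority was given


def arbitrate(a: Command, b: Command) -> str:
    """Return the text of the higher-priority command, or a clarification
    request when priorities are missing or tied."""
    if a.priority is None or b.priority is None or a.priority == b.priority:
        return "Please clarify priority."
    winner = a if a.priority > b.priority else b
    return winner.text


book = Command("tester_1", "Bring me the book.")
kitchen = Command("tester_2", "Stop and go to the kitchen.")
print(arbitrate(book, kitchen))  # prints: Please clarify priority.
```

In this sketch the check itself is trivial, which suggests the 6.2 seconds we observed were spent elsewhere, though we can only guess where.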

Scenario: Moving Objects

An object is relocated mid-task.

Result:

- Robot continues to original location

- Fails to find object

- Re-scans environment

- Recovers after ~18 seconds

Success rate drops to 81%

Hour 36–48: Cognitive Fatigue (Simulated)

Robots don’t “get tired.”

But systems degrade.

Observations:

- Increased error accumulation

- Slower pathfinding decisions

- Occasional redundant actions

Example:

The robot attempts to pick up the same object a second time, even though the first attempt succeeded.

This suggests a state-tracking inconsistency.
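One common guard against this kind of repeat is a completion ledger that is checked before every action. The sketch below is our own illustration, not the HX-5's implementation; `TaskLedger` and its methods are hypothetical.

```python
class TaskLedger:
    """Tracks which (action, object) pairs have already been completed."""

    def __init__(self) -> None:
        self._done = set()

    def should_run(self, action: str, obj: str) -> bool:
        # Only run an action that has not been recorded as complete.
        return (action, obj) not in self._done

    def mark_done(self, action: str, obj: str) -> None:
        self._done.add((action, obj))


ledger = TaskLedger()
attempts = []
for _ in range(2):  # the same pickup is requested twice
    if ledger.should_run("pick_up", "cup"):
        attempts.append("pick_up cup")
        ledger.mark_done("pick_up", "cup")

print(attempts)  # prints: ['pick_up cup']
```

If the completion record is lost or never written, the second request looks new and the pickup is repeated, which would match what we saw.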

Critical Moment: Hour 41

The robot receives a simple instruction:

“Clean the table.”

But the table contains:

- Fragile glass

- Food waste

- A moving object (human hand interference)

The robot pauses.

Then proceeds cautiously.

It completes the task, but takes three times as long as normal.

This reveals something important:

When uncertainty increases, the robot trades speed for safety.

That’s good design.

But it also limits efficiency.
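The trade-off can be sketched as a simple speed multiplier that shrinks as uncertainty grows. This is an assumed model for illustration only: the linear mapping, the `floor` value, and the function names are ours, not anything the HX-5 documents.

```python
def speed_scale(uncertainty: float, floor: float = 0.2) -> float:
    """Map uncertainty in [0, 1] to a speed multiplier in [floor, 1]."""
    u = min(max(uncertainty, 0.0), 1.0)  # clamp to the valid range
    return max(floor, 1.0 - u)           # never slow below the floor


def task_time(base_seconds: float, uncertainty: float) -> float:
    """Estimated task duration once the speed multiplier is applied."""
    return base_seconds / speed_scale(uncertainty)


# With uncertainty near 2/3, the multiplier is about 1/3,
# so a 60-second task takes roughly three times as long.
print(round(task_time(60.0, 2 / 3)))
```

Under this model, the 3x slowdown at Hour 41 would correspond to an uncertainty of roughly two thirds. The floor keeps the robot moving at all, which is exactly where the efficiency cost shows up.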

Hour 48–60: System Strain

This is where things get interesting.

Environmental Stress Introduced:

- Elevated temperature

- Reduced lighting

- Partial sensor obstruction

Results:

- Vision accuracy drops

- Navigation slows

- Error rate increases to 28%

Scenario: Partial Blindness

One camera is partially blocked.

The robot:

- Detects obstruction

- Adjusts head angle

- Compensates using secondary sensors

Recovery time: 11 seconds

This is a strong result.

But not instant.

Hour 60–72: Breaking Point

At this stage, cumulative strain becomes visible.

Key Failures:

- Misjudged distance when placing objects

- Increased collision avoidance distance (overcompensation)

- Communication becomes more rigid and repetitive

Example response loop:

“I am recalculating.”

“I am recalculating.”

“I am recalculating.”

This is not a crash.

It’s something more subtle:

A degradation of fluid intelligence.
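For anyone reviewing logs from a similar test, a loop like this is easy to flag automatically. The following is a minimal sketch of one way to do it; `RepeatDetector` is a hypothetical helper of our own, and the threshold of three identical messages is an arbitrary choice.

```python
from collections import deque


class RepeatDetector:
    """Flags when the last `limit` status messages are all identical."""

    def __init__(self, limit: int = 3) -> None:
        self.limit = limit
        self.recent = deque(maxlen=limit)  # keeps only the newest messages

    def observe(self, message: str) -> bool:
        self.recent.append(message)
        return len(self.recent) == self.limit and len(set(self.recent)) == 1


detector = RepeatDetector()
flags = [detector.observe("I am recalculating.") for _ in range(3)]
print(flags)  # prints: [False, False, True]
```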

Final Hour: The Unexpected Result

At Hour 71, we issue a final task:

“Organize the room.”

The environment is chaotic.

Objects scattered. Lighting inconsistent. Noise present.

The robot begins.

Slowly.

Carefully.

Imperfectly.

But it continues.

It does not shut down.

It does not freeze.

It adapts—imperfectly, but persistently.

Completion time: 47 minutes

Success level: partial, but functional

Key Findings

1. Robots Don’t Fail Suddenly

They degrade gradually.

This is both reassuring—and concerning.

2. Adaptability Has Limits

The robot adapts—but within boundaries.

Outside those boundaries, performance drops quickly.

3. Safety Overrides Everything

When uncertain, the system slows down.

This prevents accidents—but reduces usefulness.

4. “Always On” Is Still a Challenge

Continuous operation introduces compounding errors.

Not catastrophic—but noticeable.

Performance Summary

| Metric | Result |
|---|---|
| Initial Success Rate | 94% |
| Final Phase Success Rate | 72% |
| Recovery Capability | High |
| Failure Mode | Gradual degradation |
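The arithmetic behind these figures is worth spelling out. The short calculation below uses only the success rates reported in this article and assumes the 28% error rate from Hour 48–60 and the 72% final phase success rate describe the same phase; the variable names are ours.

```python
# Success rates (%) reported in this article
initial = 94     # Hour 0 to 12
mid_test = 81    # Hour 24 to 36
final = 72       # final phase

final_error_rate = 100 - final        # 28, the error rate reported for Hour 48 to 60
point_drop = initial - final          # 22 percentage points lost overall
relative_drop = point_drop / initial  # about 0.234, roughly a 23% relative decline

print(final_error_rate, point_drop, round(relative_drop, 3))  # prints: 28 22 0.234
```

Put differently, the robot lost 13 points between the first phase and the interference phase, then another 9 by the end.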

What This Means for Real-World Use

The HX-5 is not fragile.

It doesn’t collapse under pressure.

But it is not truly “autonomous” in the way marketing suggests.

It requires:

- Structured environments

- Occasional human correction

- Defined task boundaries

In other words:

It works best when reality behaves.

The Illusion of Readiness

One of the biggest takeaways from this test:

Humanoid robots feel ready—until you remove control.

In demos, they shine.

In chaos, they struggle.

Not fail.

But struggle.

Final Verdict: Strong, But Not Unbreakable

The HX-5 passes the stress test.

But just barely.

It proves that humanoid robots can operate in real-world conditions for extended periods.

It also proves that they are not yet independent systems.

They are resilient: they recover from errors.

But not robust: they still degrade under stress.

Conclusion

The future of humanoid robots will not be defined by how they perform under ideal conditions.

It will be defined by how they behave when things go wrong.

This test shows that we are close.

But not there yet.

And the gap between “almost” and “ready” is where the real story lives.
