In an age defined by technological acceleration, artificial intelligence (AI) has become an omnipresent force shaping industries, businesses, and daily life. Two key paradigms have emerged at the forefront of AI development: on-board computing and cloud AI. These two approaches represent distinct philosophies of where and how AI “brainwork”—the complex computations, data analyses, and decision-making processes—should take place. Each model has its own merits, challenges, and use cases, and understanding the balance between the two is essential for industries, governments, and developers striving to make the most of AI’s transformative potential.

In this article, we will explore the characteristics, advantages, and limitations of on-board computing and cloud AI. We’ll dive into the technical nuances, the ethical considerations, and the real-world implications of where the intelligence should reside: at the edge of the device or deep within the cloud.

Understanding On-board Computing and Cloud AI

Before diving into the debate, let’s first define what we mean by on-board computing and cloud AI:

- On-board Computing (Edge AI) refers to the practice of running AI algorithms directly on a device or hardware. This can range from smartphones and autonomous vehicles to industrial robots and IoT (Internet of Things) devices. The key trait of this model is that all computations, data analyses, and decision-making occur locally on the device itself. The AI does not require an internet connection to function once the system is up and running.

- Cloud AI, on the other hand, refers to the use of cloud infrastructure—remote servers and data centers—to store and process data. In this model, devices send their data to the cloud, where it is processed using AI algorithms. The results are then transmitted back to the device. This model is dependent on internet connectivity and generally relies on large-scale data centers to manage massive amounts of computational work.

1. Latency: The Need for Speed

One of the most obvious differences between on-board computing and cloud AI is the issue of latency. Latency refers to the time delay between when a request is made and when the response is received. When AI computations occur in the cloud, this delay can be significant due to the time required to send data to a remote server, process it, and send the result back.

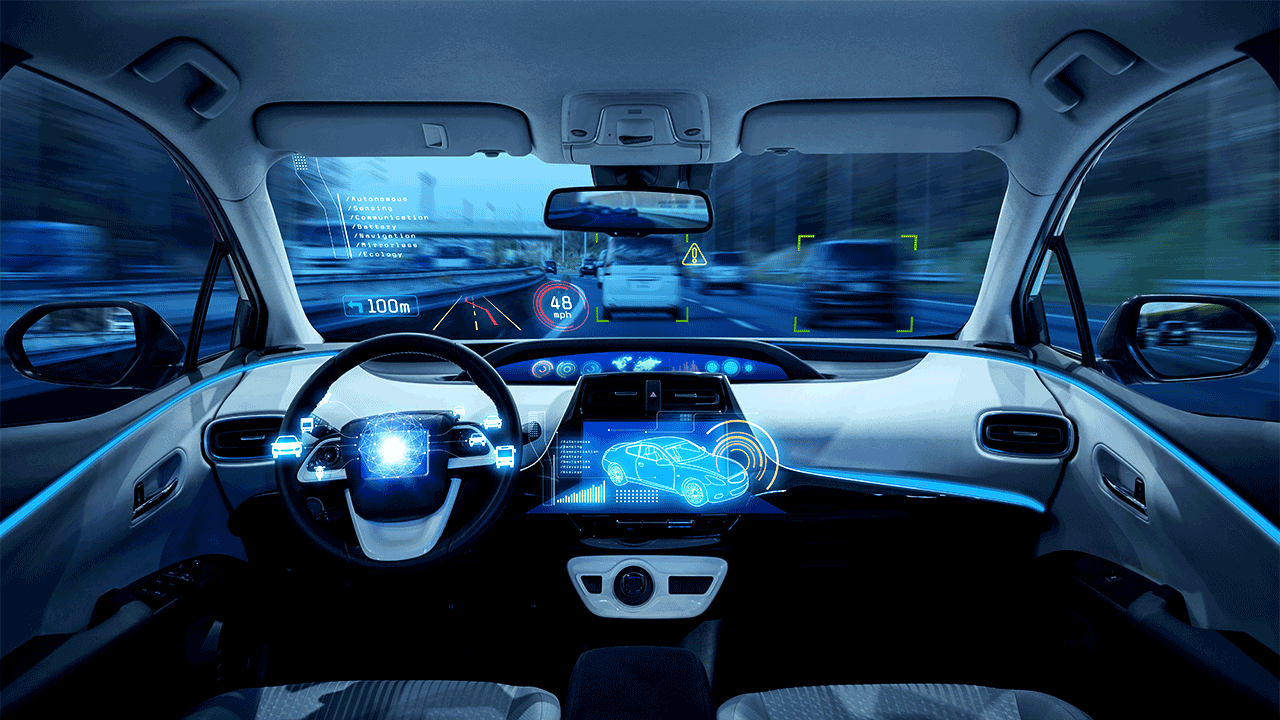

For instance, autonomous vehicles, which rely heavily on AI for real-time decision-making, cannot afford significant delays. A self-driving car needs to react almost instantaneously to changes in its environment. If the car had to wait on cloud AI, a network round trip that adds tens or hundreds of milliseconds, or fails outright, could have dire consequences. On-board computing is therefore essential for such applications: the AI must process data locally to ensure quick and reliable decisions.
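To put the delay in physical terms, the sketch below adds up the three parts of a cloud round trip (send, process, return) and converts the total into the distance a vehicle covers while waiting. The function names and all timing figures are hypothetical, chosen only for illustration; real values depend on the network and the model.

```python
def cloud_round_trip_ms(uplink_ms: float, compute_ms: float, downlink_ms: float) -> float:
    """Total delay for one cloud request: send the data, process it, return the result."""
    return uplink_ms + compute_ms + downlink_ms


def distance_travelled_m(speed_kmh: float, delay_ms: float) -> float:
    """Distance covered at constant speed while waiting delay_ms for a response."""
    speed_m_per_s = speed_kmh / 3.6
    return speed_m_per_s * (delay_ms / 1000.0)


if __name__ == "__main__":
    # Hypothetical figures, for illustration only.
    delay = cloud_round_trip_ms(uplink_ms=40, compute_ms=20, downlink_ms=40)
    print(f"round trip: {delay} ms")
    print(f"distance at 108 km/h: {distance_travelled_m(108, delay):.2f} m")
```

Under these assumed numbers, a 100 ms round trip at 108 km/h (30 m/s) corresponds to about 3 m of travel before the car can act on the answer.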

In contrast, cloud AI works well in scenarios where immediate responses are not critical. For example, online recommendation systems (think of Netflix suggesting your next show) can afford a few seconds of delay. Since these types of decisions don’t involve real-time actions, cloud-based AI systems are an ideal solution.

2. Scalability and Flexibility

While on-board computing excels in applications requiring speed, cloud AI shines when it comes to scalability. The cloud offers computing capacity that can be provisioned on demand and is, for most practical purposes, far larger than any single device can provide. When a company wants to upgrade its AI model or process more data, it does not need to invest in new hardware; it simply requests more resources from the cloud.

This scalability makes cloud AI ideal for industries that need to process massive amounts of data across many devices, such as in healthcare, finance, and e-commerce. Cloud AI allows for the collection and analysis of enormous datasets that might otherwise be difficult or impossible to process locally. For example, large-scale facial recognition systems, predictive analytics in stock trading, or large image databases for medical imaging analysis often rely on the power of cloud-based AI.

On the flip side, on-board computing is limited by the physical capabilities of the device. While edge devices are becoming more powerful with advancements in hardware (such as NVIDIA’s Jetson or Apple’s A-series chips), they still cannot match the computing power of a large-scale cloud infrastructure.
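One concrete form of this limit is memory. The sketch below estimates the size of a model's weights from its parameter count and numeric precision, then checks whether that fits in the share of device memory set aside for the model. The model sizes, the 8 GB device, and the `usable_fraction` budget are assumptions for illustration, not measurements of any particular chip.

```python
def model_size_gb(num_parameters: int, bytes_per_parameter: float) -> float:
    """Approximate size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    return num_parameters * bytes_per_parameter / 1e9


def fits_on_device(num_parameters: int, bytes_per_parameter: float,
                   device_memory_gb: float, usable_fraction: float = 0.5) -> bool:
    """True if the weights fit in the share of memory reserved for the model.

    usable_fraction is an assumed budget: the rest is left for the operating
    system, activations, and other applications.
    """
    budget_gb = device_memory_gb * usable_fraction
    return model_size_gb(num_parameters, bytes_per_parameter) <= budget_gb


if __name__ == "__main__":
    # Hypothetical model and device sizes, for illustration only.
    for params in (100_000_000, 7_000_000_000):
        for bytes_per_param in (2, 1):  # 16-bit and 8-bit weights
            size = model_size_gb(params, bytes_per_param)
            ok = fits_on_device(params, bytes_per_param, device_memory_gb=8)
            print(f"{params:>13,} params x {bytes_per_param} B = {size:5.1f} GB, fits: {ok}")
```

With these assumed numbers, a 7-billion-parameter model at 16 bits needs 14 GB for its weights alone and exceeds the 4 GB budget of an 8 GB device, while a 100-million-parameter model fits comfortably.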

3. Security and Privacy

As AI continues to handle sensitive data—such as medical records, financial information, or personal communications—security and privacy have become major concerns. Here, the two approaches diverge significantly.

On-board computing offers an inherent advantage when it comes to data privacy. Since data is processed locally on the device, there is less need to transmit personal data over the internet. This reduces the exposure of that data to breaches in transit or on remote servers, and it can make compliance with strict privacy laws, such as GDPR in Europe, easier to achieve. This is especially critical in sectors like healthcare, where data confidentiality is paramount.

However, while on-board computing offers a degree of privacy, cloud AI has its own security mechanisms. Cloud service providers like Amazon Web Services (AWS) and Google Cloud invest heavily in data encryption and access control to protect sensitive information. These services also have dedicated teams working on security, providing expertise that might be beyond what can be achieved with local devices.

Yet, cloud-based AI systems inherently pose a higher risk of data exposure. Any transmission of data over the internet presents a potential vulnerability. Although data encryption reduces this risk, the fact remains that sensitive data must leave the device, passing through multiple channels, making it more susceptible to cyber-attacks.
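The difference in what leaves the device can be made concrete with a small sketch. In the cloud version the raw reading is serialized and sent as is; in the edge version the analysis runs locally and only its conclusion is transmitted. The record layout, the field names, and the 100 bpm threshold are hypothetical and stand in for whatever a real application would use.

```python
import json


def cloud_payload(reading: dict) -> str:
    """Cloud model: the full raw reading is serialized and sent off the device."""
    return json.dumps(reading)


def edge_payload(reading: dict, threshold_bpm: int = 100) -> str:
    """Edge model: the analysis runs locally and only its conclusion is sent."""
    elevated = max(reading["heart_rate_bpm"]) > threshold_bpm
    return json.dumps({"alert": elevated})


if __name__ == "__main__":
    # Hypothetical reading, for illustration only.
    reading = {"patient_id": "hypothetical-123", "heart_rate_bpm": [72, 75, 118, 80]}
    print("cloud sends:", cloud_payload(reading))
    print("edge sends: ", edge_payload(reading))
```

In this sketch the edge payload carries neither the identifier nor the raw measurements, so there is less sensitive data to intercept in transit.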

4. Energy Consumption

Energy efficiency is another major consideration when comparing on-board computing and cloud AI. On-board computing can consume less energy per task, because the device runs its computations on low-power hardware and does not have to transmit raw data over a network. Devices like smartphones and autonomous drones are often designed with energy-efficient hardware to extend battery life, making them ideal candidates for edge-based AI.

Conversely, cloud AI requires significant energy to power large-scale data centers. These data centers, responsible for housing thousands of servers running AI algorithms, are notorious for their high energy consumption. In fact, according to a study by the International Energy Agency (IEA), data centers worldwide consume more than 1% of global electricity. While cloud providers continue to invest in renewable energy sources, the energy footprint of cloud computing remains a concern, particularly when it comes to the environmental impact.

5. Network Dependency and Connectivity

While on-board computing works seamlessly offline, cloud AI requires a constant internet connection to function. In areas where connectivity is limited, such as rural locations or remote regions, cloud AI might be impractical. For example, drones or robots deployed in disaster zones or outer space may rely entirely on on-board computing to function. Similarly, autonomous cars must keep operating safely through network outages, so their critical functions cannot depend on a connection.

On the other hand, the advantage of cloud AI is its ability to perform complex computations and data analyses that on-board computing cannot handle. In situations where network connectivity is stable, cloud AI offers the benefit of centralized updates and the integration of new data across devices.
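A common way to get the benefits of both is to prefer the cloud when it is reachable and fall back to the on-board model when it is not. The sketch below shows that pattern in a minimal form. `cloud_infer` and `local_infer` are hypothetical callables standing in for a real remote call and a real on-device model; the two stub functions exist only to make the example runnable.

```python
from typing import Any, Callable, Tuple


def infer_with_fallback(sample: Any,
                        cloud_infer: Callable[[Any], Any],
                        local_infer: Callable[[Any], Any]) -> Tuple[Any, str]:
    """Try the cloud model first; use the on-board model if the network fails."""
    try:
        return cloud_infer(sample), "cloud"
    except (ConnectionError, TimeoutError):
        return local_infer(sample), "local"


def unreachable_cloud(sample):
    """Stand-in for a cloud call made while the device is offline."""
    raise ConnectionError("no network")


def small_local_model(sample):
    """Stand-in for a compact on-device model."""
    return "obstacle" if sample > 0.5 else "clear"


if __name__ == "__main__":
    print(infer_with_fallback(0.9, unreachable_cloud, small_local_model))
```

For safety-critical functions the order would likely be reversed, with the local model answering first and the cloud used only to refine or audit the result afterwards.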

6. Ethical and Societal Implications

The choice between on-board computing and cloud AI is not just a technical decision; it also has ethical and societal implications. As AI becomes more ingrained in our daily lives, the question arises: Where should the “brain” of AI reside?

With on-board computing, the ethical considerations center around issues of autonomy and local control. Users may feel more secure and in control when their devices process data locally. Additionally, AI models that run on the device may be less exposed to manipulation by external forces, which keeps the device’s decisions more independent of centralized authorities.

In contrast, cloud AI presents concerns about the centralization of power. Relying on a few large companies (such as Google, Amazon, or Microsoft) to host AI models creates a risk of monopolies and exploitation. These companies might gain access to immense amounts of data, which could be used for surveillance or to influence individual behavior. This raises questions about the ownership of data and the rights of individuals.

Moreover, centralizing AI in the cloud could also exacerbate digital divides. Regions with limited internet access or older infrastructure may be left behind, unable to leverage the benefits of advanced AI technologies.

Conclusion: Striking a Balance

The debate between on-board computing and cloud AI is not about choosing one over the other. Instead, it’s about finding a balance between the two models to best serve different use cases. In some scenarios, on-board computing is the clear winner, offering speed, privacy, and energy efficiency. In others, cloud AI’s ability to scale, process massive datasets, and centralize computing power is indispensable.

Ultimately, both approaches will continue to evolve, and hybrid models that combine the best of both worlds are becoming more common. Devices may process data locally for real-time decision-making while still leveraging the cloud for heavy-lifting tasks such as model training and large-scale data analysis.
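A hybrid arrangement of this kind might look like the following sketch: every sample gets an immediate local decision, while samples and decisions are buffered and uploaded in batches for later cloud-side training or analysis. The class name `HybridDevice`, the `upload_batch` callable, and the batch size are hypothetical; a real system would also need to decide what data is allowed to leave the device.

```python
class HybridDevice:
    """Decide locally in real time; hand batches to the cloud for heavy work."""

    def __init__(self, local_model, upload_batch, batch_size=3):
        self.local_model = local_model    # fast on-device model (callable)
        self.upload_batch = upload_batch  # hypothetical cloud upload (callable)
        self.batch_size = batch_size
        self.pending = []                 # samples waiting to be uploaded

    def handle(self, sample):
        """Return a decision immediately, without waiting on the network."""
        decision = self.local_model(sample)
        self.pending.append((sample, decision))
        if len(self.pending) >= self.batch_size:
            self.flush()
        return decision

    def flush(self):
        """Upload buffered samples; keep them if the network is unavailable."""
        if not self.pending:
            return
        try:
            self.upload_batch(list(self.pending))
        except ConnectionError:
            return  # offline: retry on a later flush
        self.pending.clear()


if __name__ == "__main__":
    uploaded = []
    device = HybridDevice(lambda x: x > 0, uploaded.append, batch_size=2)
    for reading in (1, -1, 4):
        print(reading, "->", device.handle(reading))
    print("batches uploaded:", len(uploaded), "still pending:", len(device.pending))
```

The real-time path never touches the network, so a dropped connection only delays the upload and does not affect the decisions the device makes.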

As AI becomes more integrated into our lives, the question of where the “brain” of AI resides will have increasingly significant ethical, societal, and environmental implications. By navigating this complex landscape thoughtfully, we can harness AI’s potential while addressing its challenges.