In the rapidly evolving world of robotics, where science fiction edges ever closer to reality, one name has risen quickly to prominence: Figure AI. With its humanoid robot Figure 02, the company claims to be pushing the envelope of what autonomous machines can see, think, and do. But bold claims — especially in cutting‑edge robotics — invite scrutiny. Does Figure 02 actually deliver meaningful advances in AI‑enhanced vision and control, or is it another ambitious prototype wrapped in buzzwords?

To answer this, we need to examine Figure 02 from multiple angles: its technical architecture, vision capabilities, motor control and autonomy, real‑world applications, and how well it fares against expectations from both industry insiders and critical observers. This isn’t about hype — this is about whether Figure 02 truly represents a new era in humanoid robotics.

1. The Vision: What Figure AI Is Trying to Achieve

At its core, Figure AI’s mission is straightforward but profound: build humanoid robots that can autonomously perform real‑world tasks in human environments. That’s an ambitious goal — one that blends AI perception, natural language understanding, dexterity, and autonomous decision‑making into a single machine.

Figure 02 is meant to be the first generation capable of operating in dynamic, unstructured settings for extended periods — in factories, warehouses, or even homes — with minimal human intervention. To achieve this, Figure AI has integrated multiple advanced systems into a cohesive whole:

- Multi‑camera vision system for perception

- Vision‑language models (VLMs) for visual understanding

- Speech‑to‑speech conversational AI

- Enhanced motor control with human‑like dexterity

- Onboard compute optimized for real‑time perception and planning

These features are designed to help the robot see its environment, interpret what it sees, and decide what to do next — not through pre‑programmed routines, but through real‑time AI reasoning. The promise is that the robot doesn’t just look at the world — it understands it.

But how does this manifest in practice?

2. Eyes Wide Open: AI‑Enhanced Vision System

One of the most hyped aspects of Figure 02 is its onboard AI‑enabled vision suite. This isn’t a mere collection of cameras — it’s a multi‑camera, multi‑modal perception system deeply integrated with AI models for real‑time visual reasoning.

2.1 A Network of Sight

Figure 02 includes six RGB cameras strategically positioned around its body — head, torso, and back — to provide a 360° perspective of its surroundings. These cameras feed raw visual data into an onboard Vision Language Model (VLM) that interprets the scene contextually.

Rather than simply detecting objects, the robot is designed to understand what it sees, identifying items, estimating their relevance to a task, and reasoning about next actions. This is a significant departure from traditional robotic vision systems — which typically rely on predefined object recognition and structured environments — into something that resembles semantic perception.

2.2 Real‑Time Visual Reasoning

The integrated VLM doesn’t just passively label objects; it influences decisions. For example, if you ask Figure 02 to “place the red box on that shelf,” its vision and language models need to:

- Recognize “red box” among other items

- Understand where the target shelf is

- Determine a safe motion path to pick and place

This integration of vision and language understanding allows for dynamic interaction with complex environments, a leap beyond scripted automation.
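The three steps above can be sketched in a few lines. This is only an illustration of the idea, not Figure AI's implementation: the `Detection` record, the `ground` helper, and the step names are all hypothetical, and a real system would derive detections from a perception model rather than a hand-written list.

```python
from dataclasses import dataclass

@dataclass
class Detection:
    label: str       # object class, e.g. "box" or "shelf"
    color: str       # dominant color reported by a (hypothetical) perception stage
    position: tuple  # (x, y, z) in metres, robot frame

def ground(detections, label, color=None):
    """Return the detections that match a label and, optionally, a color."""
    return [d for d in detections
            if d.label == label and (color is None or d.color == color)]

def plan_pick_and_place(detections, obj_label, obj_color, target_label):
    """Resolve the object and the target, then return an ordered list of steps."""
    objects = ground(detections, obj_label, obj_color)
    targets = ground(detections, target_label)
    if len(objects) != 1 or len(targets) != 1:
        return None  # missing or ambiguous reference: refuse to plan
    obj, target = objects[0], targets[0]
    return [("move_to", obj.position), ("grasp", obj.label),
            ("move_to", target.position), ("release", obj.label)]

# Hand-written scene standing in for perception output.
scene = [
    Detection("box", "red", (0.4, 0.1, 0.0)),
    Detection("box", "blue", (0.5, -0.2, 0.0)),
    Detection("shelf", "grey", (1.0, 0.0, 0.8)),
]
plan = plan_pick_and_place(scene, "box", "red", "shelf")
```

Returning `None` when a reference is missing or ambiguous is a deliberate choice in this sketch: it keeps the planner from guessing, which is where a clarifying question or a re-scan would take over.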

2.3 Self‑Correction and Scene Understanding

Figure AI claims that its vision system enables self‑correction — the ability to identify and amend mistakes based on new sensory input. This kind of feedback loop is essential for real‑world operation, where unpredictability is the norm. For instance, if an object slips from its grasp during a pick‑and‑place task, the AI should re‑assess and adjust — instead of stopping or requesting human help.

This capability, while impressive on paper, requires not just perception but situational awareness and adaptive planning — a challenge at the forefront of embodied AI research.
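A minimal sketch of such a feedback loop is shown here, assuming two hypothetical callbacks: `attempt_grasp` commands a grasp and `object_in_hand` reports what the sensors see afterwards. It illustrates the retry pattern only and says nothing about how Figure 02 actually implements recovery.

```python
def pick_with_retry(attempt_grasp, object_in_hand, max_attempts=3):
    """Grasp, check the result, and retry if the object was lost.

    Returns the number of attempts used, or None if every attempt failed.
    """
    for attempt in range(1, max_attempts + 1):
        attempt_grasp()
        if object_in_hand():  # new sensory input decides whether to continue
            return attempt
    return None  # give up and escalate, e.g. ask a human for help

# Simulated run: the object slips twice, then the grasp holds.
outcomes = iter([False, False, True])
log = []
result = pick_with_retry(lambda: log.append("grasp"), lambda: next(outcomes))
```

The hard part in practice is not the loop itself but the check inside it: deciding from noisy sensor data whether the grasp really succeeded.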

3. Control Systems: The Brain in Motion

While vision tells the robot what is around it, the control system tells it how to move. Dexterity and mobility are notoriously difficult to master in humanoid robotics.

3.1 Human‑Like Dexterity

Figure 02’s hands have 16 degrees of freedom, enabling expressive and precise manipulation. This level of articulation allows Figure 02 to perform actions previously reserved for specialized industrial manipulators or human hands, such as grasping varied shapes and handling delicate tasks.

To put it in perspective: a typical industrial robotic arm has 6–7 degrees of freedom, while the human hand is commonly credited with 27. Achieving 16 in a compact humanoid form is technically demanding, particularly when coupled with AI perception for object reasoning.

These advanced hands are not just for show — they reportedly allow the robot to lift heavy objects (up to ~25 kg), a nontrivial capability when operating among humans or for manufacturing assistance.

3.2 Motor Control and Walk Stability

Figure 02 is also designed to walk and balance dynamically — not just shuffle in place. Thanks to advances in control algorithms and reinforcement learning‑based gait training, the robot can navigate uneven surfaces and maintain stability, a key milestone in humanoid robotics.

However, this is precisely where reality often diverges from expectation. Complex motions such as stair climbing, recovery from sudden perturbations, and navigation through crowded environments are still active areas of research, and performance varies widely depending on conditions.

4. AI Integration: The Invisible Framework

All these sensors and actuators mean little without a robust computational backbone.

4.1 Onboard Compute

One of the standout claims for Figure 02 is that it has three times the AI inference power of its predecessor. This increase in compute capability allows the robot to run sophisticated AI models — including vision, language understanding, and motion control — without offloading processing to the cloud.

This is crucial for low‑latency decision‑making, especially in environments where cloud connectivity is limited or unreliable.
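The latency argument comes down to simple arithmetic: one perception-to-action cycle has to finish within the control period. The sketch below makes that explicit. The function name and every number in it are illustrative placeholders, not measurements of Figure 02 or of any real network.

```python
def fits_budget(inference_ms, network_round_trip_ms, loop_hz):
    """True if one perception-to-action cycle finishes within the control period."""
    period_ms = 1000.0 / loop_hz  # time available per control cycle
    return inference_ms + network_round_trip_ms <= period_ms

# Placeholder values chosen only to show the effect of a network hop.
onboard = fits_budget(inference_ms=40, network_round_trip_ms=0, loop_hz=10)
cloud = fits_budget(inference_ms=40, network_round_trip_ms=80, loop_hz=10)
```

With these placeholder values the onboard case fits the 100 ms period and the cloud case does not, which is the general shape of the trade-off: a network round trip eats into a budget that is already tight.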

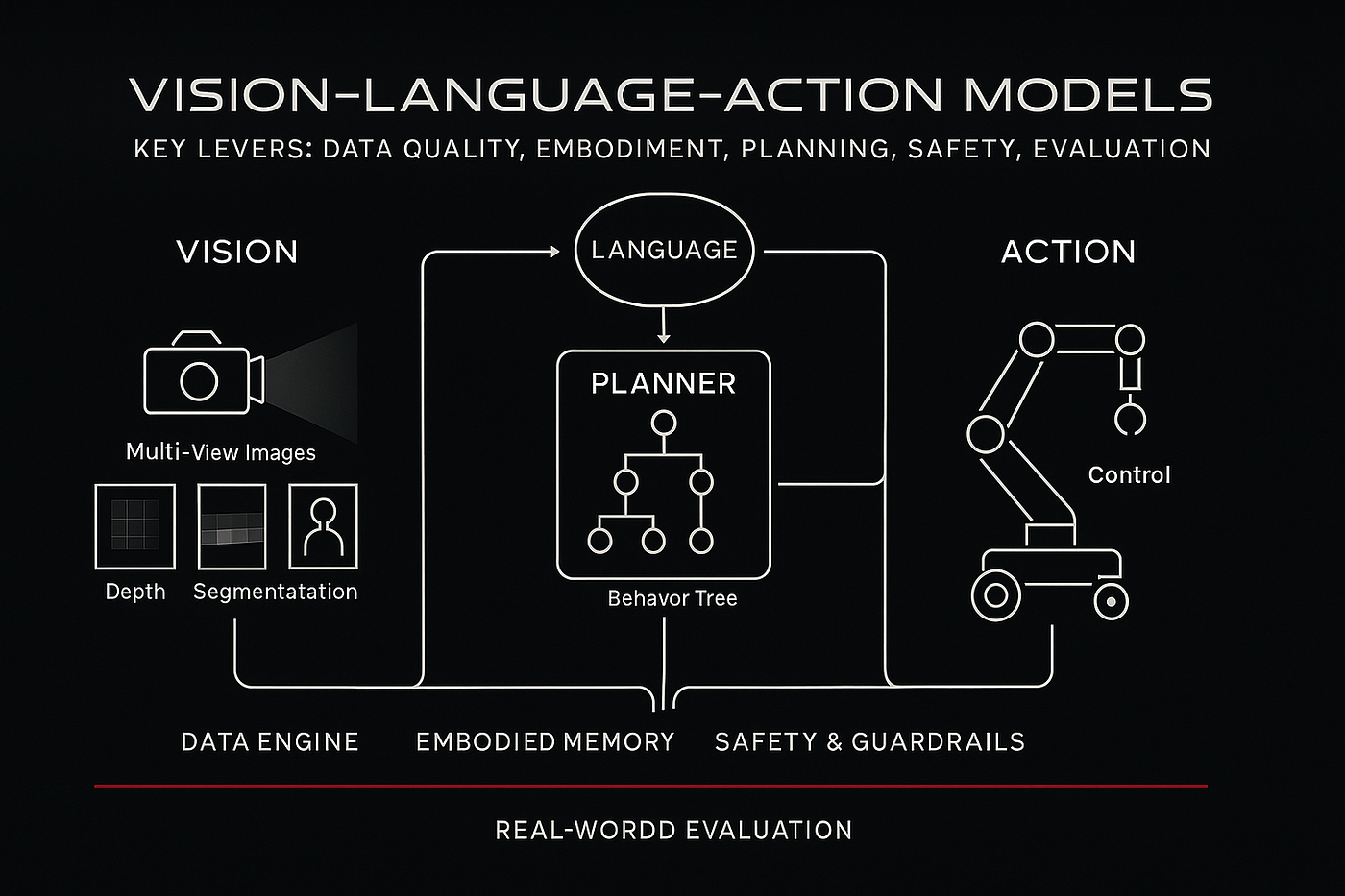

4.2 Vision‑Language‑Action Models

According to deeper technical discussions (such as those around Figure’s Helix model), the robot may employ next‑generation Vision‑Language‑Action (VLA) architectures designed to unify perception, cognition, and motor control under a cohesive neural paradigm. These models allow for generalization — meaning the robot isn’t limited to predefined tasks but can interpret new commands and scenarios more flexibly.

This kind of model blurs the lines between perception and action planning, a crucial step toward truly autonomous operation.

4.3 Conversational Abilities

Figure 02 isn’t a silent worker: it features microphones and speakers, enabling speech‑to‑speech interaction. This lets users give commands verbally and receive confirmation or queries — turning robot control into a dialogue rather than a button‑pushing exercise.

The integration of conversational AI with visual and motor systems doesn’t just make the robot easier to command — it improves situational interpretability. A robot that can ask “Did you mean the blue box or the large blue bin?” is far more useful than one that vaguely guesses.
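The clarifying question above can be produced by a very simple rule: if more than one visible object matches the spoken description, ask instead of acting. The following is a toy sketch with a hypothetical `resolve_reference` function and plain word matching. A real system would rely on learned language and vision models rather than string comparison.

```python
def resolve_reference(query_words, candidates):
    """Match a spoken description against visible object names.

    Returns (match, question): exactly one of the two is None.
    """
    words = set(query_words)
    matches = [c for c in candidates if words <= set(c.split())]
    if len(matches) == 1:
        return matches[0], None
    if not matches:
        return None, "I could not find that. Can you describe it differently?"
    options = " or ".join(f"the {m}" for m in matches)
    return None, f"Did you mean {options}?"

visible = ["blue box", "large blue bin", "red box"]
match, question = resolve_reference(["blue"], visible)
```

Here the word "blue" matches two objects, so the function returns a question rather than a guess. A more specific request such as `["red", "box"]` resolves directly.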

5. From Lab to Floor: Real‑World Performance

All this technical promise raises a critical question: can Figure 02 deliver outside controlled settings?

5.1 Industrial Deployment

Figure AI has publicly shown Figure 02 operating in a BMW manufacturing facility, performing repetitive tasks such as lifting parts and placing them accurately. This is a step beyond demos in isolation — it means integration with human workflows and existing automation systems.

But real‑world deployment comes with nuance. According to reports from the factory, the tasks are highly structured and constrained — not the open‑ended autonomy some marketing implies. The robot repeatedly picks and places specific components within a limited workspace, which is easier than navigating a crowded factory floor independently.

5.2 Autonomy vs. Structure

This highlights a key distinction: fully autonomous operation versus assisted autonomy in structured environments.

In controlled settings with fixed lighting, known object types, and predictable workflows, robots like Figure 02 can excel. Their vision systems and AI reasoning can handle such scenarios reliably. But in dynamic or chaotic settings, where objects and tasks vary unpredictably, performance can be uneven.

That gap is not a shortfall unique to Figure 02; it’s a broader challenge in robotics. Autonomous perception and reasoning in open worlds remain cutting‑edge research problems.

6. The Reality Check: Strengths and Limitations

Figure 02 undoubtedly represents a leap forward compared to its predecessor and many competitors. Its vision system, compute power, and integrated AI are technologically impressive. But to understand whether it lives up to its claims, we need a balanced look at both strengths and limitations.

6.1 Strengths

- Advanced perception: Six RGB cameras and VLM allow nuanced scene understanding.

- Human‑like manipulation: Hands with 16 degrees of freedom enable complex tasks.

- Onboard autonomy: Local compute reduces dependency on cloud and improves responsiveness.

- Language integration: Conversational AI enhances usability.

6.2 Limitations

- Limited unstructured deployment: Tasks shown so far take place in constrained environments.

- Complex locomotion remains challenging: Walking, climbing, or navigating unpredictable terrain is still imperfect.

- Cost and practicality: High development costs and operational complexity limit immediate widespread use (a common challenge with humanoid robots).

In short, Figure 02 is a technological milestone, but not a fully realized general‑purpose humanoid worker. It excels in certain structured or semi‑structured tasks and advances critical components of autonomy, but falls short of the sci‑fi ideal of a robot that can independently navigate any human environment on day one.

7. The Bigger Picture: Where This Fits in Robotics

Understanding Figure 02 isn’t just about evaluating a single machine — it’s about appreciating where robotics stands today.

Humanoid robots aim to operate in environments humans designed, with tools and physical interfaces built for human bodies. That makes them appealing compared to fixed automation, which is rigid and inflexible. But this versatility comes at a cost: perception, balance, and adaptive control are inherently difficult.

Figure 02’s advancements suggest that embodied AI (the integration of perception, cognition, and action) is making measurable progress. Integrating vision‑language models with robot control, improving tolerance for errors, and supporting natural human interaction are all steps toward scalable autonomous systems.

However, true general‑purpose autonomy remains a horizon goal. Most real‑world settings still require human supervision, task splitting, or environmental constraints to function reliably.

8. The Verdict: Does Figure 02 Live Up to Its Claims?

Yes, but with important caveats. Figure 02 delivers on several of its claims:

✔️ AI‑enhanced vision that does more than detect objects — it interprets scenes.

✔️ Integrated language and perception systems that make interaction intuitive.

✔️ Human‑like manipulation and autonomy in structured environments.

But it does not yet fully deliver on the broadest interpretations of autonomy — especially in unpredictable, open‑ended settings where sensor noise, unexpected obstacles, and non‑repetitive tasks are the norm.

Figure 02 is a major step forward, not a final destination. Its innovations push the field ahead and narrow the gap between experimental robotics and practical deployment. For industries willing to integrate cutting‑edge AI with controlled workflows, such as advanced manufacturing, logistics, or guided warehouse tasks, it represents a potent new tool.

But for those expecting a household humanoid that can independently manage chores, dynamic navigation, or unscripted human interaction — we’re not quite there yet.

In that sense, Figure 02 both lives up to its claims and highlights the challenges still to be solved — a hallmark of meaningful progress on the road to genuinely autonomous machines.