Human beings are emotional creatures. From the moment a baby cries and smiles to the final thoughtful reflection of an elderly mind, emotions shape our thoughts, decisions, values, and social lives. We laugh, we cry, we form close bonds, we feel empathy—and at times we struggle to articulate exactly what we feel. Emotions are not just internal biological signals; they are the currency of human connection.

So when engineers, roboticists, and AI scientists ask, “How close are we to robots that understand human emotions?”, they are not asking whether machines can mimic the shape of a smile or identify a sentiment in a tweet. They are asking a much deeper and richer question: Can a machine perceive, interpret, and respond to emotions in a way that feels authentic and meaningful to a human being? And if so, what are the scientific breakthroughs, technological gaps, ethical questions, and philosophical implications we must grapple with as this reality unfolds?

In this article, we’ll explore this question from multiple angles: the science and technology behind emotion‑aware robots, real‑world examples, the psychological and social context, current limitations, ethical and societal concerns, and a glimpse into where we might be headed.

1. The Science of Emotion Recognition: What It Even Means

At first glance, the idea of a robot that “understands” emotions sounds like science fiction. But at the technical level, several foundational components are already under development: emotion detection, emotion interpretation, and affective response generation.

1.1 What Is Emotion in Machines?

For humans, emotions are complex neurological and biochemical processes triggered by internal states and external stimuli. For machines, emotions are abstracted into patterns: visual cues, vocal signals, physiological data, and linguistic cues that correlate with emotional states. In machine learning terms, this involves multimodal data interpretation, where machines analyze multiple inputs simultaneously: facial expressions, eye movements, voice intonation, posture, and even heart rate or skin conductance when available.

This multimodal approach is pivotal because humans rarely communicate emotion through one channel alone. When a friend says “I’m fine” but avoids eye contact and speaks in a flat tone, you understand something different than the literal words. AI research is trying to catch up with this reality by teaching machines to weigh multiple emotional signals together. An example of this is the context‑aware emotion perception models used in human‑robot interaction research, which incorporate audio, video, and contextual cues to improve recognition accuracy across domains.
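To make the idea of weighing several emotional signals together more concrete, here is a minimal sketch of one common approach, late fusion, in which each channel produces its own probability estimate and the estimates are combined with a weighted average. Everything in it is an assumption made for illustration: the emotion labels, the modality names, the weights, and the scores are hypothetical, and the sketch is not the method of any specific system mentioned in this article.

```python
from typing import Dict, Optional

# Hypothetical label set, chosen only for this illustration.
EMOTIONS = ("happy", "sad", "angry", "neutral")


def fuse_modalities(
    scores: Dict[str, Optional[Dict[str, float]]],
    weights: Dict[str, float],
) -> Dict[str, float]:
    """Late fusion: weighted average of per-modality probability estimates.

    A modality whose value is None (for example, no heart-rate sensor)
    is skipped, and the remaining weights are renormalized.
    """
    fused = {emotion: 0.0 for emotion in EMOTIONS}
    total_weight = 0.0
    for modality, distribution in scores.items():
        if distribution is None:
            continue
        weight = weights.get(modality, 1.0)
        total_weight += weight
        for emotion in EMOTIONS:
            fused[emotion] += weight * distribution.get(emotion, 0.0)
    if total_weight == 0.0:
        raise ValueError("no modality produced a score")
    return {emotion: value / total_weight for emotion, value in fused.items()}


def top_emotion(fused: Dict[str, float]) -> str:
    """Return the label with the highest fused score."""
    return max(fused, key=fused.get)


# The "I'm fine" case: the words look neutral, but voice and face do not.
# All numbers below are made up for the example.
scores = {
    "text": {"happy": 0.20, "sad": 0.10, "angry": 0.00, "neutral": 0.70},
    "voice": {"happy": 0.05, "sad": 0.60, "angry": 0.05, "neutral": 0.30},
    "face": {"happy": 0.05, "sad": 0.55, "angry": 0.10, "neutral": 0.30},
    "heart_rate": None,  # sensor not available
}
# Assumed weights: the literal words count for less than tone and expression.
weights = {"text": 0.5, "voice": 1.0, "face": 1.0}

fused = fuse_modalities(scores, weights)
print(top_emotion(scores["text"]))  # neutral
print(top_emotion(fused))           # sad
```

Taken alone, the text channel reads as neutral, while the fused estimate leans toward sadness, which mirrors the intuition in the example above. Research systems typically learn how to combine channels from data rather than using fixed hand-set weights, so this should be read as a picture of the idea rather than a recipe.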

1.2 Affective Computing: The First Stage

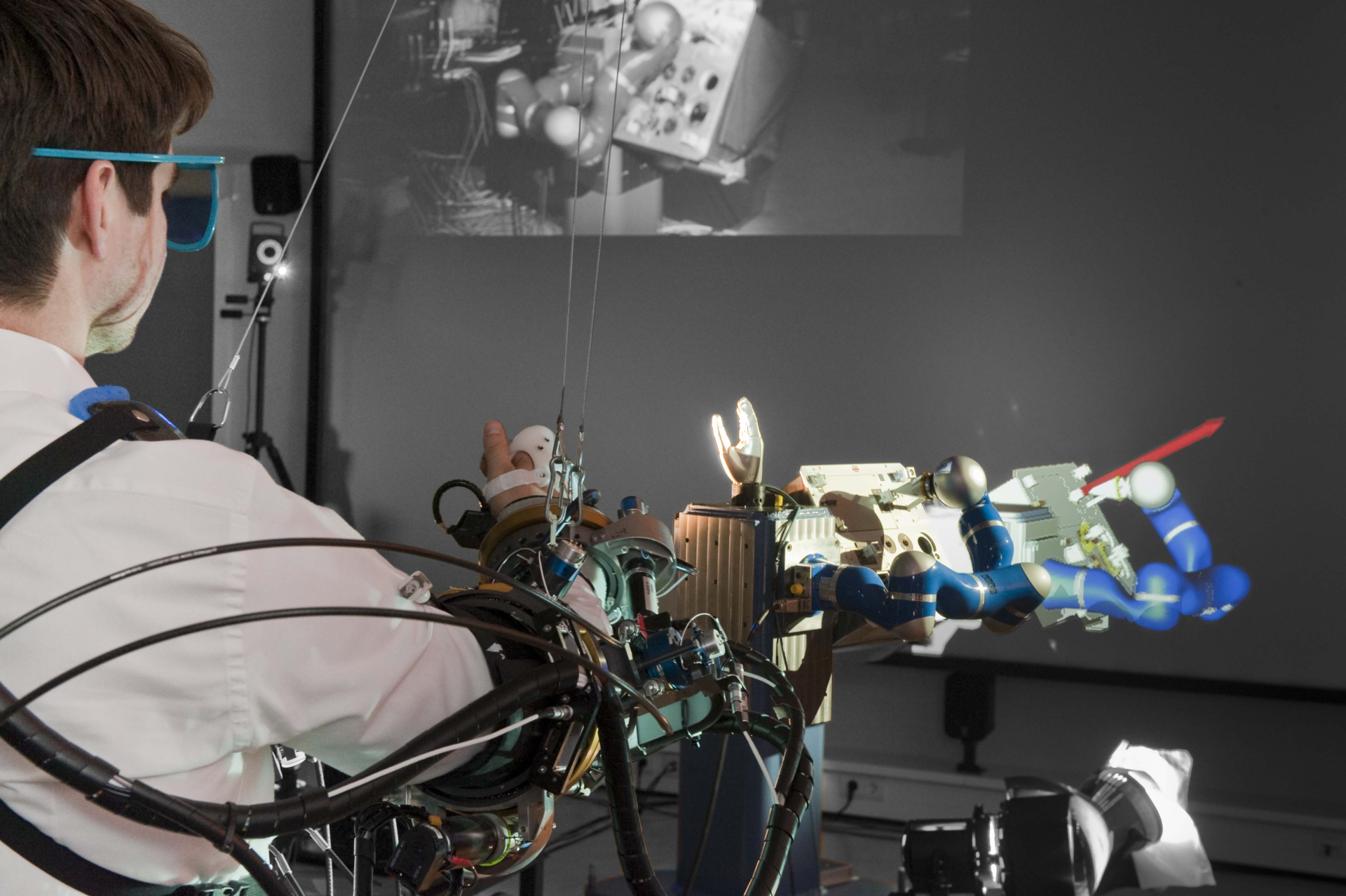

The field of affective computing, pioneered by researchers like Rosalind Picard at MIT, seeks to give computers the ability to recognize and simulate affective processes. Early milestones in this field include experimental robots like Kismet—a robot head created to interact socially with humans using expressive motions and sounds. Robots like these don’t feel emotion but can display outward cues that humans interpret as emotional.

Similarly, social robots such as Japan’s Kobian can simulate a range of emotional expressions, including culture‑specific gestures, to create a more natural interaction interface. These early efforts serve as proof of concept that mechanical platforms can embody emotional interaction frameworks.

1.3 Beyond Detection: Emotion Interpretation

Recognition is one thing; interpretation is another. For example, a machine may detect sadness in someone’s voice, but understanding context—why the person is sad, what they may need next, and how best to respond—is far more complex.

Recent work in AI has explored frameworks that go beyond mere classification (happy, sad, angry) to context‑aware interpretation, which draws on social context, personal history, and patterns recognized across interactions. Research projects, such as those combining visual and auditory emotion classification for use in healthcare robots, are attempting to refine this understanding for real‑time application in sensitive environments like hospitals.

2. Real Examples: Robots Recognizing and Responding to Emotion

We already have robots in the world that incorporate rudimentary emotional recognition and expression. Some are prototypes and others are commercial products, but all offer insight into where the technology stands right now.

2.1 Pepper — The Face of Emotional Robots

SoftBank Robotics’ Pepper is one of the most famous early emotional robots. Designed to recognize human mood through facial and voice cues, Pepper uses multiple sensors to interpret emotional states and adjust its behavior accordingly. Pepper has been deployed in retail, telepresence, and service environments, offering a glimpse of how robots can interact socially beyond task‑based functions.

2.2 Social Robots in Research and Healthcare

Robots like Nadine, developed with expressive speech, gesture recognition, and conversational memory, showcase the next generation of social robots that anchor human‑robot dialogue in a more persistent and personalized way.

In healthcare settings, robots are being engineered to sense and respond to patient emotions, a capability especially relevant to caregiving robots. Research illustrates how classifying a patient’s emotional state can shape robot responses aimed at improving well‑being and cooperation.

2.3 Consumer and Learning Robots

Beyond research labs, some platforms have reached consumer markets. The AISoy1, for example, is designed to adapt to human interaction over time, offering more dynamic and less predictable emotional responses than traditionally programmed systems.

Such machines blend sensors with learning algorithms to adjust behavior over successive interactions, hinting at what future emotion‑aware companion robots might look like.

3. The Psychology of Perceived Understanding

Even as machines improve technically, human perception remains a major factor.

A recent behavioral study found that people often rate emotional AI responses as less empathetic than identical responses labeled as human‑generated—even when the content is the same. This suggests a psychological phenomenon where users attribute “real” understanding only if they believe the source is human.

This is highly significant: Understanding in humans is not only about recognizing emotional cues and responding correctly; it is also about whether the human believes the other party genuinely cares. Authentic emotional understanding, trust, and empathy are deeply rooted in shared experience and mutual vulnerability—something current AI doesn’t truly possess.

So one may ask: if a robot responds appropriately to sadness or joy, does it understand that emotion? Or is it merely a highly sophisticated mimic? Philosophers, psychologists, and AI ethicists debate this intensely, because the answer affects how we design, regulate, and integrate these machines.

4. Challenges and Limitations: Why It’s Harder Than It Looks

4.1 Multimodal Emotion Recognition Gaps

While facial expression analysis and speech emotion recognition have become fairly advanced with deep learning, these technologies still struggle with cultural nuance, individual differences, and subtle emotional blends. Humans are notoriously variable in how they express emotions—one person’s smile might hide anxiety, another’s silence might signal frustration.

Even context‑aware models that integrate audio and visual cues only achieve moderate accuracy improvements in laboratory experiments. These gaps highlight how much contextual and social information humans use in emotional inference, and how hard that is to replicate in machines.

4.2 The Appearance vs Reality Problem

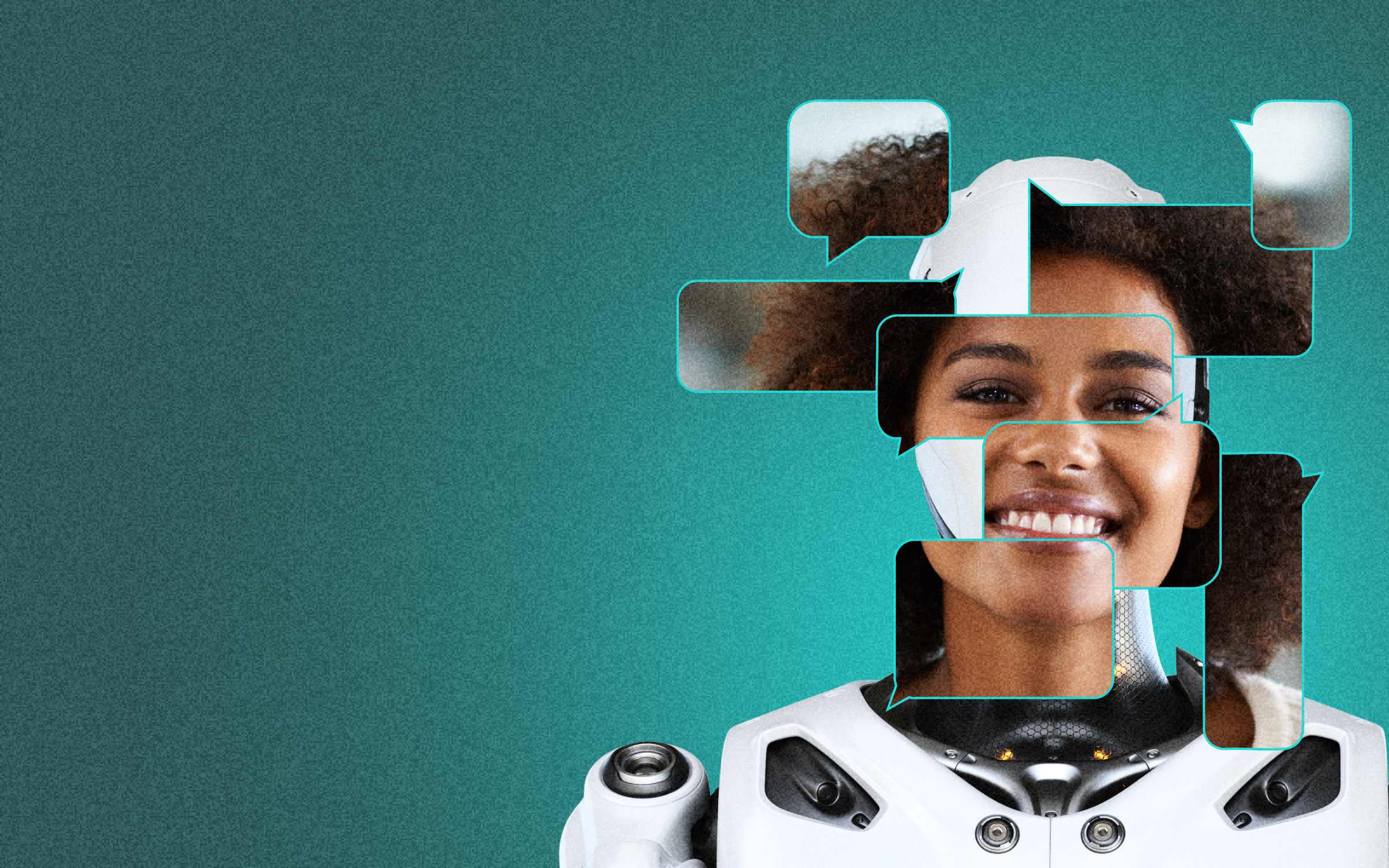

As robots become more expressive and life‑like—such as ultra‑realistic robot faces that blink, nod, and mimic human facial expressions—humans may form emotional attachments even if the underlying system lacks genuine emotional comprehension. This creates psychological and social risks, where users may project emotional depth onto machines that don’t possess it.

4.3 Cultural Variation and Norms

Emotion expression varies widely across cultures. A gesture seen as polite in one context may be rude in another. Building robots that can navigate these nuances requires immense data diversity and culturally sensitive models—something still in early stages of research.

5. Ethics, Trust, and Societal Impacts

If machines begin to appear to understand human emotions, what are the ethical implications?

5.1 Emotional Manipulation

Emotion‑aware robots could potentially influence human behavior, for example by tailoring interactions to increase sales or by steering users toward certain decisions. Without strong ethical safeguards and transparent design, emotional AI could be weaponized for persuasion or manipulation.

5.2 Privacy and Data Use

Emotion detection often involves collecting sensitive data—facial expressions, voice recordings, biofeedback—that may reveal personal psychological states. Who owns this emotional data? How is it stored and protected? What rights do users have over machines that “watch” them emotionally?

5.3 Social and Emotional Dependency

As robots become more socially engaging, there’s a risk of users substituting machine interaction for human connection. Loneliness, emotional dependency, and reduced social skills are potential unintended consequences if robots fill roles traditionally held by humans. We must carefully calibrate where and how emotion‑aware robots are deployed.

6. And What Comes Next?

So how close are we to robots that truly understand human emotions? The honest answer is: we are close in terms of emotional recognition and response generation, but far from achieving deep emotional understanding or authentic empathy in the human sense.

Technically, robots can now detect a spectrum of emotional cues through advanced sensors and machine learning. Emerging systems integrate multimodal perception—face, voice, body language—and even semantic context to improve interpretation. But emotional understanding is not just classification; it’s social meaning, context, and lived experience.

As research accelerates, including innovative frameworks for evaluating AI emotional cognition and multimodal reasoning, we will see robots that feel more natural, responsive, and socially competent than ever before. Some will assist in caregiving, education, therapy, and companionship roles, becoming collaborative partners rather than tools.

But a crucial part of this journey will be addressing not just technical challenges, but ethical and social ones. Engineers and policymakers must work together to ensure that emotion‑aware robots enrich human life without undermining dignity, autonomy, or psychological wellbeing.

The future of emotionally intelligent robotics is not just about machines that appear to understand us—it’s about how we integrate them into human society in ways that complement, rather than substitute, the deep emotional connections that define what it means to be human.