The future of humanoid robotics hinges on a capability that sounds simple but is hard to achieve: managing contact with the entire body, not just the hands or feet. To see why whole‑body contact handling matters, we need to look at how humanoids interact with the physical world, what makes their control problems different from those of other robots, and how recent research is advancing tactile sensing, motion planning, and learning. This article moves from foundational principles to current research and argues that the topic is more than a niche interest: it is central to the next generation of real‑world robotic applications.

1. What Is Whole‑Body Contact Handling?

At its core, whole‑body contact handling refers to a humanoid robot’s ability to use any part of its body—not just hands or feet—to interact with objects and environments in a stable, robust way. This goes beyond simple gripping or walking: it includes leaning on walls, bracing against objects, balancing with arms, pressing torsos against surfaces for stability, and even manipulation tasks that involve broad areas of contact. In other words, it’s about making every part of a robot’s body functional and responsive in physical interaction, just as humans instinctively use their entire bodies in complex tasks. Some research groups call this multicontact manipulation or whole‑body manipulation, and the topic is gaining rapid attention across robotics labs worldwide.

Contrast this with the traditional view of robot contact: for decades, industrial robots treated contact as something to minimize or avoid because rigid, unilateral contact complicates control and can destabilize the system. Humanoid robotics flips this assumption by embracing physical contact as a tool for functionality, not just an error condition. That’s why mastering whole‑body contact handling is now viewed as a major step toward robot autonomy in unstructured environments and real‑world deployment outside controlled industrial settings.

2. Why Whole‑Body Contact Is Hard: Dynamics and High‑Dimensionality

Humanoid robots are harder to control than industrial arms or wheeled mobile robots. Their dynamics are high‑dimensional, every joint can influence balance, stability, and motion in tightly coupled ways, and the floating base is not directly actuated, so balance depends on the forces exchanged at contacts. When you introduce contact at arbitrary points on the robot’s surface (elbows, knees, torso, shoulders), the problem expands dramatically. You’re no longer dealing with a few predefined contact points but with a number of contact modes that grows exponentially with the number of candidate contacts, each with its own force trajectories. This combinatorial explosion makes planning and control extremely challenging.
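To make the combinatorial growth concrete, the sketch below counts contact modes under a simplifying assumption of ours, not taken from any specific planner: each candidate contact independently takes one of three states (open, sticking, or sliding). The function name `count_contact_modes` is hypothetical.

```python
def count_contact_modes(num_candidates: int, states_per_contact: int = 3) -> int:
    """Count contact-mode assignments for a set of candidate contacts.

    Assumption (illustrative only): every candidate contact independently
    takes one of `states_per_contact` states, e.g. open / sticking / sliding.
    """
    if num_candidates < 0 or states_per_contact < 1:
        raise ValueError("counts must be non-negative / positive")
    return states_per_contact ** num_candidates


# A handful of candidate contacts (hands, elbows, knees, torso, ...) is enough
# to make exhaustive enumeration unattractive.
for n in (2, 4, 8, 12):
    print(f"{n:2d} candidate contacts -> {count_contact_modes(n)} modes")
```

Under this assumption, eight candidate contacts already yield 6561 modes, which is one reason planners avoid enumerating them one by one.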

Even in simplified planning models, reasoning about whole‑body contact requires algorithms that can:

- Model contact forces and friction across non‑planar surfaces;

- Predict how distributed contacts affect stability and the robot’s center‑of‑mass (CoM);

- Integrate sensor feedback in real time under uncertainty.
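The first requirement above is commonly expressed with the Coulomb friction cone: a contact can only push along its surface normal, and the tangential force may not exceed the friction coefficient times the normal force. The following is a minimal sketch, assuming a point contact, a known surface normal, and a single friction coefficient `mu`. The helper `in_friction_cone` is illustrative and not part of any library.

```python
import math


def in_friction_cone(force, normal, mu):
    """Return True if `force` lies inside the Coulomb friction cone.

    force  -- 3D contact force applied to the robot, in newtons
    normal -- 3D surface normal pointing from the surface toward the robot
    mu     -- friction coefficient (assumed known; in practice it is uncertain)
    """
    n_len = math.sqrt(sum(c * c for c in normal))
    if n_len == 0.0:
        raise ValueError("normal must be non-zero")
    unit_n = [c / n_len for c in normal]

    # Normal component: a unilateral contact can push but never pull.
    f_n = sum(f * c for f, c in zip(force, unit_n))
    if f_n < 0.0:
        return False

    # Tangential component must stay within mu * f_n to avoid slipping.
    tangential = [f - f_n * c for f, c in zip(force, unit_n)]
    f_t = math.sqrt(sum(t * t for t in tangential))
    return f_t <= mu * f_n


# Foot on flat ground (normal +z) and a forearm pressed against a wall (normal +x).
print(in_friction_cone([4.0, 0.0, 10.0], [0.0, 0.0, 1.0], mu=0.5))  # within the cone
print(in_friction_cone([6.0, 0.0, 10.0], [0.0, 0.0, 1.0], mu=0.5))  # would slip
print(in_friction_cone([10.0, 0.0, 3.0], [1.0, 0.0, 0.0], mu=0.5))  # wall contact holds
```

Because the normal is an argument rather than a fixed vertical axis, the same check applies to contacts on walls, railings, or other non‑planar surfaces.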

Many research works approach these problems through continuous optimization frameworks, which treat the robot’s entire surface and contact state in one integrated mathematical model. Compared with exhaustively searching over discrete contact modes, these methods can reduce computation time while still producing feasible plans for complex movement sequences with multiple contact points.

3. Physical Advantages of Whole‑Body Contact

3.1 Enhanced Stability

One of the clearest motivations for whole‑body contact handling is stability. A humanoid robot stands upright only because its controller manages reaction forces between the feet and the ground. However, when the robot can also press an arm into a wall or use its torso against a stable surface, it enlarges its support region, the set of center‑of‑mass positions for which the available contact forces can maintain balance. For contacts on flat ground, this region is the familiar support polygon: the convex hull of the contact points. A larger support region markedly improves stability in challenging conditions such as uneven terrain, pushing against objects, or moving heavy payloads.
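The effect of an extra contact can be sketched with the classical flat‑ground support polygon. The example below is a simplification under stated assumptions: the robot is static, and all contacts are treated as points in one horizontal plane. A hand on a vertical wall is not coplanar with the feet, so real multi‑contact stability analysis needs a more general test. The coordinates and function names are hypothetical.

```python
def cross(o, a, b):
    """2D cross product of vectors o->a and o->b (positive if b is left of o->a)."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def convex_hull(points):
    """Andrew's monotone chain; returns hull vertices in counter-clockwise order."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts
    lower, upper = [], []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    return lower[:-1] + upper[:-1]


def com_is_supported(contact_points, com_xy):
    """True if the CoM ground projection lies inside the support polygon."""
    hull = convex_hull(contact_points)
    if len(hull) < 3:
        return False
    return all(
        cross(hull[i], hull[(i + 1) % len(hull)], com_xy) >= 0
        for i in range(len(hull))
    )


# Corner points of two feet, in metres (x forward, y left). Hypothetical values.
feet = [(-0.10, 0.05), (0.10, 0.05), (0.10, 0.15), (-0.10, 0.15),
        (-0.10, -0.15), (0.10, -0.15), (0.10, -0.05), (-0.10, -0.05)]
leaning_com = (0.25, 0.0)   # CoM projection while reaching far forward
hand = [(0.50, 0.0)]        # extra support point, e.g. a hand on a low ledge

print(com_is_supported(feet, leaning_com))         # feet only
print(com_is_supported(feet + hand, leaning_com))  # feet plus hand
```

With the feet alone, the forward‑leaning center of mass falls outside the polygon; adding the hand contact brings it back inside.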

Think of a firefighter bracing their body against a ladder while climbing with heavy equipment: the additional body contact points aren’t decorative—they’re essential for keeping balance. Robots need the same sort of capability in real‑world tasks, whether that means traversing rubble in a disaster response mission or maneuvering around furniture in a domestic environment.

3.2 Load Distribution

Whole‑body contact also allows robots to distribute forces over broad areas, reducing the stress on individual joints or actuators. When lifting or manipulating large objects, relying solely on the arms could easily overload actuators. Spreading contact forces over shoulders, torso, and even back surfaces gives the robot mechanical leverage and reduces the risk of overtorque conditions that could damage hardware.

This strategy becomes especially relevant in tasks like pushing a heavy door open: a robot might use its entire body to brace and generate force more efficiently than with just its arms. Robots that can only use their extremities are largely limited to tasks with small, predictable loads.
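A rough worked example shows why bringing a load closer to the body helps. It assumes a single joint holding a point mass statically at a horizontal distance and ignores the mass of the arm itself. The payload and distances are hypothetical, chosen only for illustration.

```python
G = 9.81  # gravitational acceleration in m/s^2


def static_joint_torque(mass_kg: float, lever_arm_m: float) -> float:
    """Torque in N*m that a joint must hold to support a point mass
    at a horizontal distance `lever_arm_m` from the joint axis."""
    return mass_kg * G * lever_arm_m


# Hypothetical 20 kg payload: held at arm's length vs. braced against the torso.
arms_only = static_joint_torque(20.0, 0.60)
braced = static_joint_torque(20.0, 0.15)

print(f"arms extended:       {arms_only:.1f} N*m at the shoulder")
print(f"held against torso:  {braced:.1f} N*m at the shoulder")
print(f"reduction factor:    {arms_only / braced:.1f}x")
```

Shortening the lever arm by a factor of four cuts the holding torque by the same factor, before even counting the share of the load carried directly through the torso contact.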

4. Sensory Foundations: Tactile and Force Feedback

For whole‑body contact to be effective and safe, robots must sense the contact they make. Conventional humanoids rely on proprioception (joint positions, encoder readings), along with vision and force/torque sensing at a few key locations such as the wrists and ankles. That is not enough for broad‑area contact. The next frontier lies in distributed tactile sensing: a dense network of sensors across limbs and torso that can detect contact magnitude, direction, slip, and pressure distribution.

One research direction equips humanoid robots with deformable, sheet‑like distributed tactile sensors that conform to the body surface. These sensors, combined with force/torque data and visual information, enable motion controllers to adapt quickly to unstructured contact situations such as leaning, brushing, or colliding lightly with obstacles. In simulation and experiments, this sensor‑fusion approach has been reported to improve stability and robustness to environmental unpredictability.

These tactile arrays act like an “artificial skin” that gives robots a sense of embodied awareness similar to human touch. This capability allows them to respond to contact more gracefully, reducing the likelihood of damaging collisions and enabling nuanced interactions like balancing against a wall without slipping.
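As a sketch of how a controller might reduce a tactile patch to usable quantities, the example below computes the total normal force and the center of pressure from a grid of pressure readings. The regular grid layout, taxel area, and spacing are assumptions made for illustration; real tactile skins differ in geometry and calibration, and `summarize_tactile_patch` is a hypothetical helper.

```python
def summarize_tactile_patch(pressures_pa, taxel_area_m2, pitch_m):
    """Reduce a 2D grid of taxel pressures to (total force, center of pressure).

    pressures_pa  -- list of rows, each a list of pressures in pascals
    taxel_area_m2 -- sensing area of one taxel (assumed identical for all)
    pitch_m       -- centre-to-centre taxel spacing (assumed uniform)

    Returns (total_force_n, (x_m, y_m)), or (0.0, None) when nothing is touched.
    Coordinates are measured from the taxel at row 0, column 0.
    """
    total_force = 0.0
    moment_x = 0.0
    moment_y = 0.0
    for row, line in enumerate(pressures_pa):
        for col, pressure in enumerate(line):
            force = max(pressure, 0.0) * taxel_area_m2  # ignore negative noise
            total_force += force
            moment_x += force * col * pitch_m
            moment_y += force * row * pitch_m
    if total_force == 0.0:
        return 0.0, None
    return total_force, (moment_x / total_force, moment_y / total_force)


# 3x3 patch with 1 cm^2 taxels on a 1 cm grid; two neighbouring taxels pressed.
patch = [
    [0.0, 0.0, 0.0],
    [0.0, 2000.0, 2000.0],
    [0.0, 0.0, 0.0],
]
force_n, cop = summarize_tactile_patch(patch, taxel_area_m2=1e-4, pitch_m=0.01)
print(force_n, cop)
```

Tracking how the center of pressure drifts between control cycles is one simple cue a controller could use to notice that a braced contact is starting to shift.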

5. Motion Planning and Control Techniques

5.1 Optimization‑Based Whole‑Body Controllers

A major thrust of research into whole‑body contact handling centers on optimization‑based control architectures. These systems define a hierarchy of motion objectives—e.g., balance first, then limb motion, then manipulation goals—and solve for the best set of control actions that satisfy constraints like torque limits, friction cones, and contact stability. Multi‑objective optimization frameworks can dynamically redistribute priorities based on task context, such as switching from walking to pushing an object.

Modern controllers often use hierarchical formulations that handle the conflicting demands of multiple tasks. For example, controlling balance might take precedence over arm movement when contact is uncertain. Alternatively, if a robot must push a heavy box, the controller might prioritize contact stabilization and force distribution over locomotion speed.
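Strict task priority can be illustrated with null‑space projection, a classical alternative to the optimization‑based formulations described above; it is swapped in here because it fits in a few lines without a solver. The sketch assumes two scalar tasks with known Jacobian rows, and all names and numbers are hypothetical.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def prioritized_velocities(j1, v1, j2, v2, eps=1e-9):
    """Joint velocities that achieve task 1 exactly and task 2 only as far
    as it does not disturb task 1.

    j1, j2 -- Jacobian rows of two scalar tasks (lists of equal length)
    v1, v2 -- desired task velocities
    """
    n1 = dot(j1, j1)
    if n1 < eps:
        raise ValueError("task 1 Jacobian must be non-zero")

    # Minimum-norm solution of the high-priority task: j1 . dq = v1
    dq = [j * v1 / n1 for j in j1]

    # Project the low-priority Jacobian into the null space of task 1.
    scale = dot(j2, j1) / n1
    j2_free = [b - scale * a for a, b in zip(j1, j2)]
    n2 = dot(j2_free, j2_free)

    # Use only the remaining freedom; if none is left, task 2 is sacrificed.
    if n2 > eps:
        residual = v2 - dot(j2, dq)
        dq = [q + p * residual / n2 for q, p in zip(dq, j2_free)]
    return dq


# Task 1 (balance): keep a CoM coordinate still. Task 2: move the hand.
balance_row, hand_row = [1.0, 1.0, 0.0], [1.0, 0.0, 1.0]
dq = prioritized_velocities(balance_row, 0.0, hand_row, 0.3)
print(dq, dot(balance_row, dq), dot(hand_row, dq))

# Conflicting case: the hand task needs exactly the motion balance forbids.
dq_conflict = prioritized_velocities(balance_row, 0.0, [2.0, 2.0, 0.0], 1.0)
print(dq_conflict)
```

In the first call both tasks are met; in the second the hand task is dropped entirely, mirroring the "balance first" ordering. Optimization‑based controllers express the same ordering while also handling inequality constraints such as torque limits and friction cones.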

5.2 Learning‑Driven Approaches

Optimization has its limits—especially when dealing with real‑world uncertainties like sensor noise, variable friction surfaces, or unmodeled dynamics. That’s where learning‑based methods enter the picture. Reinforcement learning (RL) and imitation learning approaches can help humanoid robots acquire robust contact policies from data.

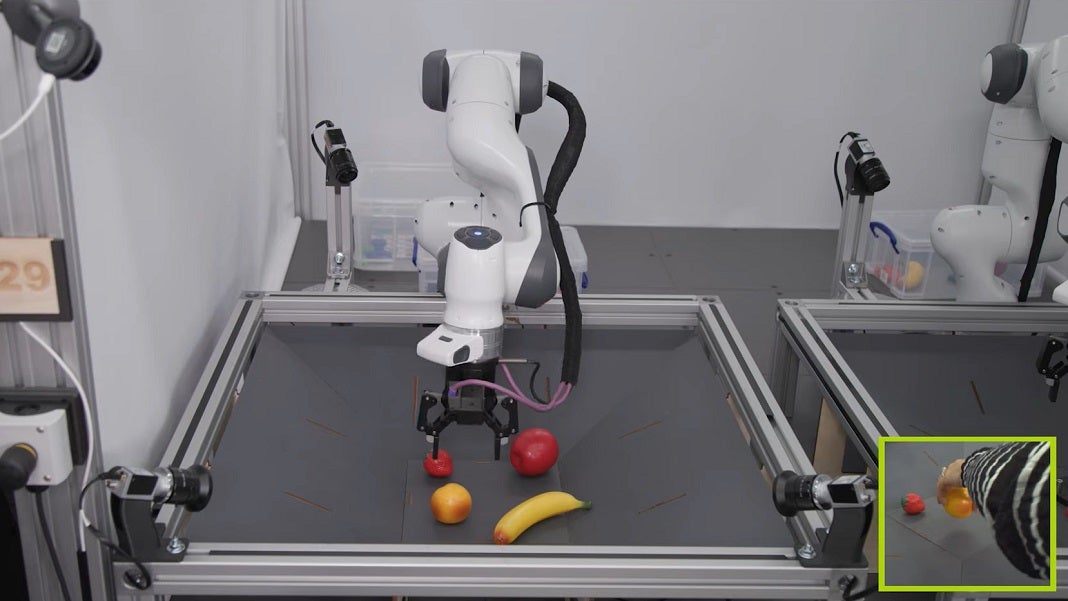

One recent example is a control policy extended with a tactile modality and learned through deep imitation learning from human teleoperation. This method learns how to coordinate whole‑body contacts by integrating vision, joint states, and tactile data. In experiments with full‑scale humanoid platforms, such policies exhibit robust behavior even in previously unseen tasks, highlighting the promise of data‑driven approaches for real‑world contact handling.

Reinforcement learning has also been used in simulation to teach robots complex contact‑rich tasks with few or no demonstrations. Policies learned this way can, in some cases, transfer to real hardware more robustly than purely model‑based controllers, especially when training uses techniques like domain randomization, which varies simulated physical parameters such as friction and mass across episodes so the policy does not overfit to a single model.
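Domain randomization can be sketched as sampling a fresh set of physical parameters at the start of every training episode. The parameter names and ranges below are hypothetical and not tied to any particular simulator; in practice they would be chosen to bracket the uncertainty of the real robot and its environment.

```python
import random
from dataclasses import dataclass


@dataclass
class ContactSimParams:
    """Per-episode physical parameters (illustrative selection)."""
    friction_coefficient: float
    payload_mass_kg: float
    tactile_noise_std: float


def sample_contact_sim_params(rng: random.Random) -> ContactSimParams:
    """Draw one randomized parameter set; ranges are assumptions."""
    return ContactSimParams(
        friction_coefficient=rng.uniform(0.3, 1.2),
        payload_mass_kg=rng.uniform(0.0, 5.0),
        tactile_noise_std=rng.uniform(0.0, 0.05),
    )


# Seeded generator so training runs are reproducible.
rng = random.Random(0)
for episode in range(3):
    params = sample_contact_sim_params(rng)
    # A real training loop would reset the simulator with `params` here
    # and then roll out the policy; that part is omitted.
    print(episode, params)
```

Passing an explicit `random.Random` instance keeps the randomization reproducible and separate from any other random number use in the training code.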

5.3 Multi‑Contact Sequencing

Humanoid tasks often involve sequences of contacts, not just static points of force. Climbing stairs while leaning on a railing, moving a large object while stepping backward, and bracing both arms against a table all require planning how contacts will change over time. Recent frameworks decompose these problems into sequential stages with individual contact objectives, enabling more efficient learning and control of long‑horizon tasks.
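One way to picture a staged contact plan is as a list of sets of active contacts, one set per stage. The sketch below checks such a plan against two rules that are assumptions for illustration, not requirements of any specific framework: every stage keeps a minimum number of contacts, and consecutive stages differ by exactly one contact being made or broken. Contact names are hypothetical.

```python
def validate_contact_sequence(stages, min_contacts=2):
    """Return a list of human-readable problems; an empty list means the plan passes.

    stages       -- list of sets, each holding the names of active contacts
    min_contacts -- minimum number of simultaneous contacts (assumed rule)
    """
    problems = []
    for i, stage in enumerate(stages):
        if len(stage) < min_contacts:
            problems.append(f"stage {i}: only {len(stage)} contact(s)")
    for i in range(len(stages) - 1):
        changed = stages[i] ^ stages[i + 1]  # contacts made or broken
        if len(changed) != 1:
            problems.append(
                f"transition {i}->{i + 1}: {len(changed)} contacts change at once"
            )
    return problems


# Climbing one stair while holding a railing.
stair_step = [
    {"left_foot", "right_foot"},
    {"left_foot", "right_foot", "right_hand"},  # grasp the railing
    {"right_foot", "right_hand"},               # lift the left foot
    {"left_foot", "right_foot", "right_hand"},  # place it on the next step
]
print(validate_contact_sequence(stair_step))

# A plan that lets go of both feet at once is flagged.
risky = [{"left_foot", "right_foot"}, {"right_hand"}]
print(validate_contact_sequence(risky))
```

Representing each stage explicitly also gives a natural place to attach the per‑stage contact objectives mentioned above.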

6. Safety, Human‑Robot Interaction, and Ethics

A robot that handles physical contact using its entire body must be predictable and safe, especially in environments shared with humans. Unlike industrial manipulators that operate in segregated zones, humanoids are envisioned to work in homes, hospitals, and workplaces. Whole‑body contact handling introduces new safety risks: what happens when a robot pushes against a human by accident, or leans too hard on a fragile object? Research in physical human–robot interaction (pHRI) emphasizes the need to model human comfort zones, shared force thresholds, and reflex‑like safety behaviors to prevent harm.
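A reflex‑like safety behavior can be as simple as scaling down commanded motion when the measured contact force rises. The sketch below assumes a scalar force estimate and two thresholds; the threshold values used in the example are placeholders, not validated safety limits, which would have to come from applicable standards and testing.

```python
def safe_velocity_scale(contact_force_n, soft_limit_n, hard_limit_n):
    """Scale factor in [0, 1] applied to commanded velocities.

    Below the soft limit the robot moves freely; above the hard limit it
    stops; in between the scale decreases linearly. Both limits are
    parameters because appropriate values depend on the body region
    touched and on the application.
    """
    if not 0.0 <= soft_limit_n < hard_limit_n:
        raise ValueError("require 0 <= soft_limit_n < hard_limit_n")
    if contact_force_n <= soft_limit_n:
        return 1.0
    if contact_force_n >= hard_limit_n:
        return 0.0
    return (hard_limit_n - contact_force_n) / (hard_limit_n - soft_limit_n)


# Placeholder thresholds for illustration only.
for force in (10.0, 35.0, 60.0):
    print(force, safe_velocity_scale(force, soft_limit_n=20.0, hard_limit_n=50.0))
```

A linear ramp avoids abrupt stops at the soft limit while still guaranteeing that motion ceases once the hard limit is reached.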

From an ethical standpoint, designers must ensure that contact control systems respect personal space and safety norms, especially as robots become capable of gentle touch and forceful support depending on context. In caregiving scenarios, for example, robots might need to physically support an elderly person—a whole‑body contact task where both efficacy and safety are paramount.

7. Applications Where Whole‑Body Contact Matters

7.1 Disaster Response and Search‑and‑Rescue

In disaster zones, environments are unpredictable and cluttered with irregular objects. A humanoid robot that can use its entire body to brace, push, and balance can navigate rubble much more effectively than one limited to point contacts. Whole‑body contact can be the difference between stumbling and successfully climbing over obstacles while carrying equipment.

7.2 Domestic Assistance

In home environments, robots might need to open refrigerators, move furniture, help lift household items, or steady themselves on uneven floor mats. Here, broad contact handling enables natural interaction with the environment, much as a person rests an elbow on a countertop for support.

7.3 Industrial Collaboration

Factories increasingly employ cobots, robots that collaborate with humans. Humanoid cobots with whole‑body contact handling can assist on assembly lines, hold parts steady while a human worker tightens screws, or reposition objects safely without requiring elaborate fixtures or tooling.

8. Future Challenges and Directions

Despite significant progress, many hurdles remain for whole‑body contact handling in humanoid robotics:

- Real‑time computation — Contact planning and control are computationally intensive, especially with high‑dimensional body models.

- Sensor fusion — Integrating vision, tactile, and force sensing into cohesive control loops under uncertainty is still an open research frontier.

- Generalization — Learning‑based controllers must generalize reliably from simulation or limited demonstrations to diverse real‑world conditions.

- Human‑centric safety — Frameworks for safe pHRI need rigorous validation to ensure robots interact appropriately with humans across tasks and environments.

As research bridges these gaps, we can envision humanoid robots that navigate complexity with human‑like grace—not by mimicking every motion humans make, but by understanding and exploiting contact the way humans do. This embodied intelligence—where robots feel and adapt through contact—is key to unlocking wider adoption of humanoid platforms in everyday life.