Introduction: The Quest for a Unified Theory

In the rapidly evolving landscape of artificial intelligence and robotics, one question looms large for researchers, engineers, and philosophers alike: Is there a unified theory behind embodied intelligence in robots? The question is not just academic. It goes to the heart of whether, and how, machines can navigate and understand our physical world with the fluidity, adaptability, and contextual responsiveness that biological organisms demonstrate. Across decades of parallel developments in cognitive science, AI, and robotics, “embodied intelligence” has emerged as an influential paradigm, challenging traditional computational models of intelligence and demanding fresh frameworks that honor the inseparable link between body, environment, and cognition.

The answer is both yes and no. Yes, in that converging frameworks aim to unify principles of embodied intelligence across disciplines. No, in that a single, definitive, all‑encompassing theory has not yet crystallized in the way that, say, Newton’s laws unify classical mechanics. What we have instead is a spectrum of theoretical constructs and engineering models that collectively point toward a more complete understanding of how intelligent agents, particularly robots, can be embodied in, and shaped by, their physical and social environments.

This article explores the historical roots, modern frameworks, biological inspirations, computational architectures, limitations, and future paths toward something that approaches a “unified theory” of embodied intelligence in robotics.

1. Embodied Intelligence: Beyond Abstract Computation

To grasp why a unified theory is difficult yet valuable, we must first revisit what embodied intelligence (EI) actually means. At its core, embodied intelligence rejects the notion that intelligence is purely abstract computation divorced from physical bodies. Instead, it asserts that intelligence arises from the continuous dynamic interaction between a physical agent and its environment. In humans and animals, cognition emerges not merely from neural processes in isolation, but from the ways bodies engage with and react to sensory input, motor actions, and environmental feedback.

This viewpoint has deep roots in both cognitive science and robotics. The cognitive science concept of embodied cognition argues that human thought processes are shaped by bodily interactions with the world, not just by abstract neural computations. This perspective challenges classical Cartesian dualism and treats cognition as inseparable from physical embodiment.

Likewise, robotics pioneers in the 1980s, most notably Rodney Brooks with his “Nouvelle AI,” proposed robot architectures that forgo detailed internal representations of the world in favor of reactive systems that respond directly to environmental stimuli, with intelligence emerging from situated action rather than top‑down symbolic reasoning.

Embodied intelligence in robots therefore isn’t just about putting sensors and actuators together; it’s about understanding how sensors, controllers, and actuators can co‑evolve such that behavior emerges naturally from the physical interactions between agent and world.

2. Historical Foundations and Philosophical Roots

Understanding where embodied intelligence—and by extension a potential unified theory—comes from requires exploring several pivotal historical and philosophical roots.

2.1. Alan Turing and Early AI Thought

In the mid‑20th century, Alan Turing speculated that true machine intelligence would require an agent to learn and interact with the world much like human infants do—suggesting that apprenticeship in the environment, not abstract reasoning alone, would be key to developing flexible intelligence.

2.2. Cybernetics and Control Theory

At the same time, Norbert Wiener’s cybernetics laid the groundwork for feedback loops and control systems, showing that complex adaptive behavior can arise from simple feedback mechanisms embedded in physical systems.

2.3. 4E Cognition and Cognitive Science

Cognitive science in recent decades has established the “4E” framework—Embodied, Embedded, Enactive, and Extended cognition—suggesting that cognition must be understood as dependent on sensorimotor loops with the world. This has profound implications for robotics: if cognition is not brain‑only but body‑world coupled, then robots must be designed with that coupling in mind to achieve true adaptive intelligence.

2.4. Subsumption Architecture and Reactive Robots

Brooks’s subsumption architecture brought these ideas into robotics by layering simple behaviors that interact with the environment in real time, yielding robustness and adaptability without heavy symbolic reasoning.
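The layering idea can be illustrated with a small sketch. This is a simplified fixed‑priority arbiter written in the spirit of layered reactive control, not a reproduction of the original design, which wires together finite‑state machines with suppression and inhibition links. The sensor fields, thresholds, behavior names, and command strings below are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Sensors:
    front_distance: float  # metres to the nearest obstacle ahead (hypothetical field)
    battery: float         # charge fraction in [0, 1] (hypothetical field)


# A behavior maps sensor readings to a motor command, or None if it stays silent.
Behavior = Callable[[Sensors], Optional[str]]


def avoid(s: Sensors) -> Optional[str]:
    # Reflex: turn away when something is too close.
    return "turn_left" if s.front_distance < 0.3 else None


def recharge(s: Sensors) -> Optional[str]:
    # Head for the charger when the battery runs low.
    return "seek_charger" if s.battery < 0.2 else None


def wander(s: Sensors) -> Optional[str]:
    # Default behavior: always has something to do.
    return "forward"


# Ordered from highest to lowest priority; the first behavior that speaks wins.
LAYERS: List[Behavior] = [avoid, recharge, wander]


def arbitrate(s: Sensors) -> str:
    for behavior in LAYERS:
        command = behavior(s)
        if command is not None:
            return command
    return "stop"  # only reached if every layer stays silent
```

Each behavior reads the sensors directly and none of them consults a shared world model, which is the point of the reactive approach: the overall conduct of the robot comes from how the layers override one another as the environment changes.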

Together, these foundations demonstrate that the dream of a unified theory has always been interdisciplinary—drawing from philosophy, cognitive science, control theory, and robotics engineering.

3. What Would a Unified Theory Need to Explain?

A unified theory of embodied intelligence in robots would need to integrate several core facets:

3.1. Physical Morphology and Dynamics

The physical form—shape, materials, size, range of motion—fundamentally influences how a robot interacts with the world. Morphology modulates what tasks are feasible and what sensory input is meaningful.

3.2. Sensorimotor Coupling

Real intelligence requires coupling perception with action. A unified theory must describe how sensory input and motor output form a closed loop that allows behavior to emerge from interaction, not just internal computation.
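A toy example makes the closed loop concrete. The sketch below assumes a one‑dimensional agent whose only sensor reports the signed offset to a stimulus source and whose only motor output is a displacement; the function names and the gain value are illustrative choices, not taken from any particular robot.

```python
from typing import List


def sense(position: float, source: float) -> float:
    """Sensor reading: signed offset from the agent to the stimulus source."""
    return source - position


def act(reading: float, gain: float = 0.5) -> float:
    """Motor command proportional to the reading, with no internal world model."""
    return gain * reading


def run_loop(position: float, source: float, steps: int = 20) -> List[float]:
    """Run the perception-action loop and return the positions visited."""
    trajectory = [position]
    for _ in range(steps):
        reading = sense(position, source)  # what is sensed depends on where the body is
        position += act(reading)           # acting changes where the body is
        trajectory.append(position)
    return trajectory
```

Calling `run_loop(0.0, 10.0)` moves the agent steadily toward the source, because each action changes the next reading. Neither `sense` nor `act` contains a goal or a plan; the approach behavior exists only in the loop between them.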

3.3. Learning Through Interaction

Rather than relying on pre‑programmed behaviors, embodied agents must adapt by learning from experience through continuous engagement with the environment. This is akin to how infants learn about their world, gradually acquiring skills as they interact.
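One common way to formalize learning from interaction is reinforcement learning. The following is a minimal sketch using tabular Q‑learning on a hypothetical five‑cell corridor with a reward at the right end. The task, the hyperparameters, and the function names are assumptions made for illustration, and real robots face far larger and noisier problems.

```python
import random
from typing import List, Tuple

N_STATES = 5        # corridor cells 0..4; the reward sits in cell 4 (hypothetical task)
ACTIONS = (-1, +1)  # step left or step right


def step(state: int, action: int) -> Tuple[int, float, bool]:
    """Environment dynamics: move, clip at the walls, reward on reaching the goal."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done


def train(episodes: int = 500, alpha: float = 0.5, gamma: float = 0.9,
          epsilon: float = 0.2, seed: int = 0) -> List[List[float]]:
    """Learn action values purely from trial-and-error episodes."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            if rng.random() < epsilon:
                a = rng.randrange(2)  # explore
            else:
                best = max(q[state])  # exploit, breaking ties at random
                a = rng.choice([i for i in range(2) if q[state][i] == best])
            next_state, reward, done = step(state, ACTIONS[a])
            target = reward + (0.0 if done else gamma * max(q[next_state]))
            q[state][a] += alpha * (target - q[state][a])  # Q-learning update
            state = next_state
    return q


def greedy_policy(q: List[List[float]]) -> List[int]:
    """Best learned action for each non-terminal cell."""
    return [ACTIONS[max(range(2), key=lambda i: q[s][i])] for s in range(N_STATES - 1)]
```

Nothing in `train` tells the agent where the reward is. After training, `greedy_policy(train())` steps right in every cell, a preference acquired only through repeated interaction with the corridor.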

3.4. Cognitive and Social Context

Robots operating in human settings need not only environmental intelligence, but also social intelligence—the ability to interpret, predict, and adapt to human actions and intentions.

3.5. Integration of Computation with Embodiment

Finally, intelligent systems must integrate computation (e.g., neural networks, reinforcement learning, world models) with physical embodiment so that decision‑making is sensitive to context, dynamics, and real‑world physics.

Current research is advancing modular frameworks that approximate such integration, but a single overarching mathematical or conceptual theory that ties all these together remains elusive.

4. Modern Frameworks and Convergent Theories

While a singular unified theory does not yet exist, several promising frameworks are converging:

4.1. Embodied AI Taxonomies

Recent research categorizes embodied intelligence into levels or layers, from simple reactive behaviors to advanced goal‑driven cognition integrating planning, memory, and social reasoning.

4.2. Vision‑Language‑Action Models

Cutting‑edge work is integrating visual perception, natural language understanding, and action in robots, building systems that can interpret their surroundings, understand instructions, and act accordingly. These models help unify sensory, linguistic, and motor domains under cohesive architectures.

4.3. AGI and Ontogenetic Architectures

Some theoretical work proposes architectures for artificial general intelligence that are inherently embodied, developmental, and ontogenetic—mirroring biological growth processes rather than static designs.

4.4. Hybrid Cognitive‑Physical Frameworks

Other proposals suggest merging large multimodal models (e.g., language, vision, simulation) with physical embodiment, allowing robots to build internal world models that interact seamlessly with their physical actuators and sensors.

These frameworks don’t yet constitute a single “theory of everything” for embodied intelligence, but they outline the common principles and components that any such theory would likely incorporate.

5. Case Studies: Embodied Intelligence in Action

To illustrate how these ideas play out concretely, consider several examples:

5.1. Soft Robotics and Adaptive Control

Soft robots exploit compliant materials to adapt passively to environments. Their intelligence arises not just from control algorithms but also from the mechanical properties of their bodies.

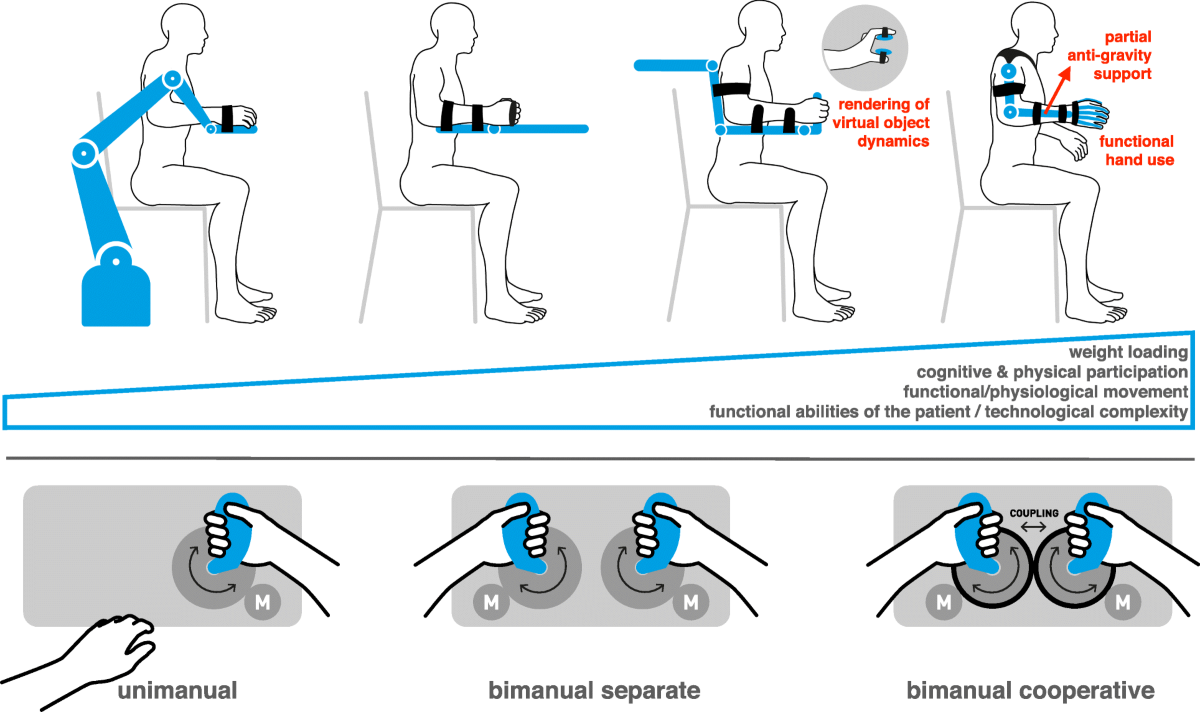

5.2. Human‑Robot Collaboration

In assembly lines or collaborative workspaces, robots must interpret human gestures, language, and intent in real time—requiring sensorimotor intelligence integrated with social cognition.

5.3. Developmental Robotics

Projects such as MIT’s Cog emphasized learning through social and environmental interaction, akin to human infant development, underscoring the need for embodied, contextual learning.

6. Current Challenges to Unification

Despite rapid progress, several profound challenges remain:

6.1. Data and Experience

Unlike simulated data, which can be generated cheaply, real robotic experience in the physical world is costly and slow to acquire, limiting what models can learn about complex environments.

6.2. World Modeling

Developing internal models that are both computationally efficient and accurate enough to guide action in unpredictable real‑world situations remains difficult.

6.3. Embodiment Metrics

We lack universal metrics to quantify how embodied a system’s intelligence is or how well cognition arises from body–environment interaction.

6.4. Integration of Cognitive and Physical Loops

Bridging high‑level reasoning (like planning and language understanding) with low‑level sensorimotor control remains a major architectural challenge.
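One widely used pattern for this bridging problem is to run the two loops at different rates: a slow deliberative layer sets intermediate targets, and a fast reactive layer tracks them. The sketch below assumes a one‑dimensional robot, a planner that runs once every ten control ticks, and a proportional controller. All names and constants are illustrative, and the pattern is shown as one possible arrangement rather than a solution to the challenge.

```python
from typing import List


def plan_waypoint(position: float, goal: float, max_step: float = 1.0) -> float:
    """Slow layer: choose the next intermediate target on the way to the goal."""
    delta = goal - position
    return position + max(-max_step, min(max_step, delta))


def control(position: float, waypoint: float, gain: float = 0.4) -> float:
    """Fast layer: proportional displacement command toward the current waypoint."""
    return gain * (waypoint - position)


def run(goal: float = 5.0, ticks: int = 200, plan_every: int = 10) -> List[float]:
    """Interleave the two loops and return the positions visited."""
    position = 0.0
    waypoint = position
    history = [position]
    for t in range(ticks):
        if t % plan_every == 0:  # the planner runs at a fraction of the control rate
            waypoint = plan_waypoint(position, goal)
        position += control(position, waypoint)  # the controller runs every tick
        history.append(position)
    return history
```

The design choice worth noting is the narrow interface between the layers: the planner hands down only a waypoint, and the controller never sees the goal. Much of the architectural difficulty described above lies in deciding what that interface should carry once the planner is a language or vision model and the controller is a real motor system.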

6.5. Social and Ethical Complexity

Robots embodied in human environments must respect safety, ethics, and social norms, raising questions that go beyond pure engineering.

7. Toward a Future Unified Theory

Though no singular unified theory yet exists, the pieces are coming together. A unifying framework for embodied intelligence in robots would likely synthesize:

- A formal mathematical description of sensorimotor loops and morphology–behavior coupling

- A computational architecture that marries perception, decision‑making, and action in context

- A developmental model for how embodied agents grow capabilities through experience and interaction

- A social cognition layer that accounts for human‑compatible reasoning, cooperation, and trust

Such a theory would not only advance robotics but also deepen our understanding of intelligence itself—not as a disembodied abstraction, but as a dynamic, adaptive, body‑world system.

In the quest for such unification, the interplay of biology, computation, physics, and engineering continues to be the crucible where insights emerge. The journey may be ongoing, but the shared principles forming at this intersection bring us ever closer to realizing robust, adaptable, and genuinely embodied robotic intelligence in the real world.