Introduction — The Next Frontier of Human‑Like Machines

In the twilight zone between science fiction and today’s reality, humanoid robots — machines shaped like humans — are advancing faster than most people realize. They’re no longer just cinematic props or academic curiosities: they are evolving into potential labor partners, caregivers, factory workers, household helpers, and mobile AI agents capable of interacting with the world in ways once thought impossible.

At the heart of this transformation are OpenAI‑backed software development kits (“SDKs”) and AI models: the invisible digital brains that could finally empower robots to understand language, perceive environments, and make decisions on the fly. But are these SDKs truly game changers for humanoids, or just another layer of hype in a long line of robotics promises?

In this article, we will explore how OpenAI’s support — from financial backing to model integration and SDK innovations — is reshaping the technical and commercial landscape of humanoid robotics.

Understanding the Humanoid Robotics Landscape

Before we dig into SDKs and AI, let’s define what we mean by “humanoids.”

Humanoid robots are machines whose body plan resembles the human one: typically two legs, two arms, a torso, and a head. The goal is to build general‑purpose machines capable of navigating human environments and interacting with tools and objects designed for people.

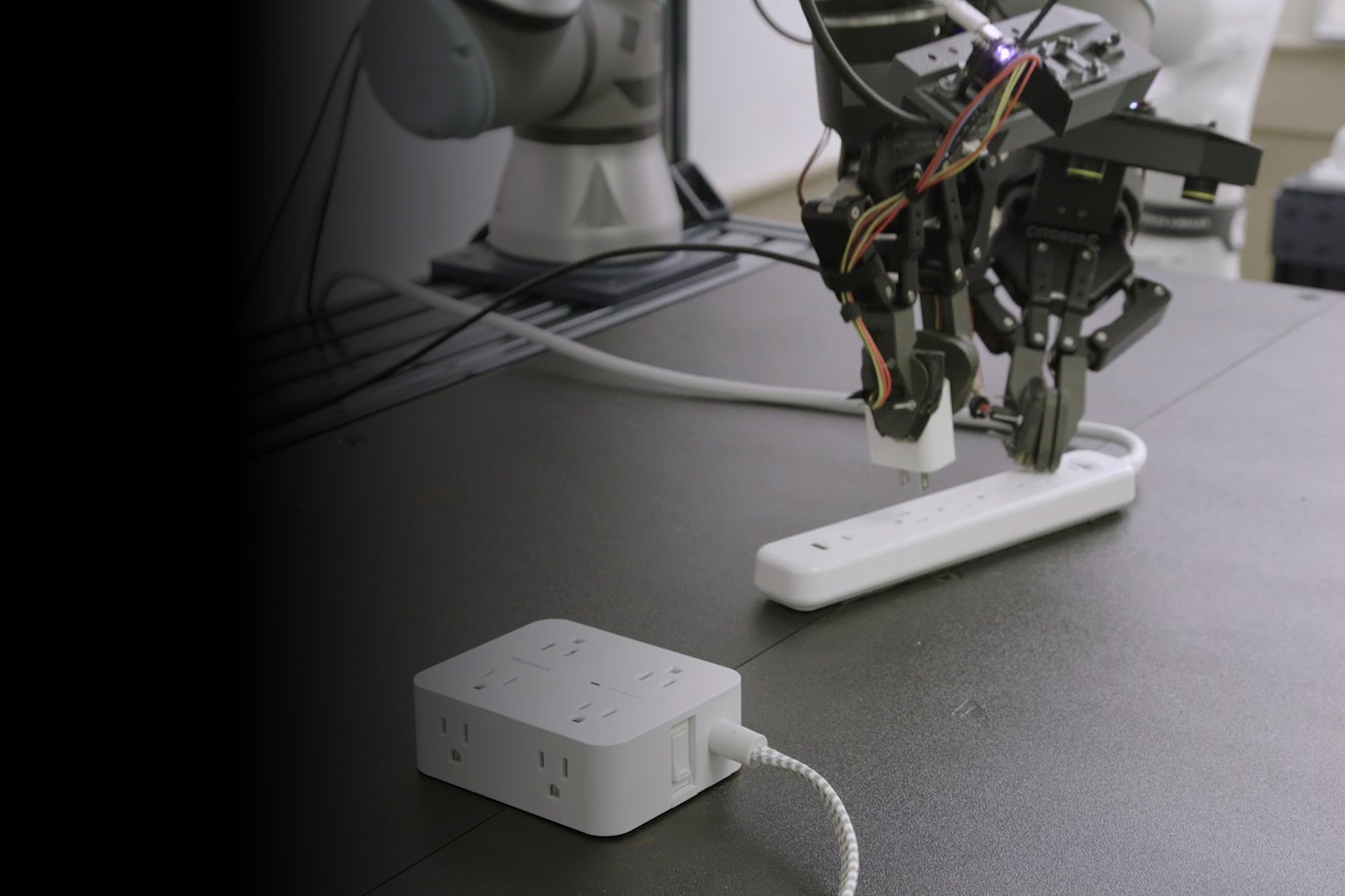

Companies like Figure AI have been among the most visible in this domain. Since its founding in 2022, Figure has developed robots such as Figure 01 and Figure 02, prototypes designed for warehouse tasks and manual labor, blending hardware engineering with AI‑driven perception and control.

Other startups, such as 1X Technologies (backed by the OpenAI Startup Fund), are pushing humanoids into home‑friendly roles such as carrying groceries, doing household chores, and providing general physical assistance.

Why SDKs Matter to Humanoid Robotics

An SDK is more than just a collection of libraries and tools: it’s the software foundation that lets developers build capabilities, adapt systems, and produce real‑world functionality. In robotics, SDKs bridge the enormous gap between sensing and physical action.

Traditional robot coding focuses on low‑level control: motor commands, trajectory planning, and sensor loops. But humanoid robots require contextual intelligence:

- Understanding language instructions (“pick up the box on the left”)

- Seeing and interpreting scenes like messy room layouts

- Responding adaptively to unpredictable physical environments

- Learning from experience or from human guidance

This is where AI‑powered SDKs come in, especially ones backed or influenced by OpenAI’s research, because they aim to unify perception, reasoning, and action into usable tools.

From Basic Control to Rich Intelligence

Established robotics frameworks (e.g., ROS) are excellent for message passing, motor control, and simulation, but on their own they don’t provide high‑level reasoning or multimodal understanding. OpenAI’s SDK‑style APIs for large language models (LLMs), vision, speech, and reasoning introduce new layers:

- Language → Action Mapping: Translating human directives into robotic commands

- Vision Grounding: Robots perceiving objects and scenes using multimodal AI

- Task Planning: Breaking down complex instructions into sequenced routines

- Adaptive Learning: Using data and feedback loops to improve performance

This combination transforms robots from rigid machines into interactive partners capable of sophisticated tasks.
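To make the language → action and task‑planning layers concrete, here is a minimal sketch. It assumes a language model has been prompted to reply with a JSON plan; the `Action` type, the `ALLOWED_SKILLS` set, and the sample reply are hypothetical illustrations, not part of any OpenAI or robot‑vendor API. The point it shows is that model output gets parsed and validated before anything reaches the motors.

```python
import json
from dataclasses import dataclass

# Hypothetical skill names; a real robot would expose its own set.
ALLOWED_SKILLS = {"move_to", "grasp", "release"}


@dataclass(frozen=True)
class Action:
    skill: str
    target: str


def parse_plan(model_reply: str) -> list[Action]:
    """Turn a JSON plan (as text) into an ordered list of validated steps."""
    steps = json.loads(model_reply)
    if not isinstance(steps, list):
        raise ValueError("plan must be a JSON list of steps")
    plan = []
    for i, step in enumerate(steps):
        if not isinstance(step, dict):
            raise ValueError(f"step {i}: expected a JSON object")
        skill = step.get("skill")
        target = step.get("target")
        # Refuse anything the robot does not explicitly support.
        if skill not in ALLOWED_SKILLS:
            raise ValueError(f"step {i}: unknown skill {skill!r}")
        if not isinstance(target, str) or not target:
            raise ValueError(f"step {i}: missing target")
        plan.append(Action(skill, target))
    return plan


# Stand-in for a model reply to "pick up the box on the left".
SAMPLE_REPLY = """[
  {"skill": "move_to", "target": "left_box"},
  {"skill": "grasp", "target": "left_box"}
]"""

if __name__ == "__main__":
    for action in parse_plan(SAMPLE_REPLY):
        print(action.skill, action.target)
```

Keeping the allowed skills in an explicit set means an unexpected model reply fails loudly at the parsing stage rather than turning into an unplanned motion.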

OpenAI’s Role — Backing, Models, and Partnership Dynamics

OpenAI’s involvement in humanoid robotics is multifaceted:

1. Strategic Financial Backing

In February 2024, OpenAI joined investors including Microsoft and Nvidia in a $675 million funding round for Figure AI, a watershed investment showing confidence in the future of humanoid robotics.

This backing enables robotics companies to:

- Scale engineering teams

- Acquire expensive hardware

- Fund large‑scale cloud training for models

Funding signals confidence — but it also reflects a strategic vision that AI and physical robots will increasingly converge.

2. Collaboration on Next‑Gen AI Models

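Alongside that funding round, OpenAI agreed to work with Figure AI to develop specialized AI models for humanoid robots, helping machines understand and reason from language in a flexible way. (Figure later said, in February 2025, that it would end the collaboration and rely on in‑house models.)

Collaborations of this kind target one of the biggest bottlenecks in humanoid technology: interpreting human language and environments in a robust, generalizable manner.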

3. Return to Robotics Research

After disbanding its robotics team in the early 2020s, OpenAI has reportedly restarted robotics work and built a humanoid robotics lab, growing its team significantly.

This reflects a deeper commitment: moving AI models from purely software agents into the physical world with real consequences.

4. Exploration and Internal Development

Reports suggest OpenAI may also be exploring its own humanoid robotics hardware efforts — an indication that it sees integrated AI + hardware ecosystems as strategically essential.

How OpenAI‑Backed SDKs Shift Development

Enhanced Perception and Sensing

One of the greatest challenges in humanoid robotics is perception: recognizing objects, judging distances, understanding human cues, and adapting to cluttered environments.

OpenAI’s multimodal models, accessible through SDK‑style APIs, allow robots to:

- Understand what they see

- Translate speech into action

- Identify when tasks succeed or fail

This shifts development away from brittle, rule‑based coding and toward AI‑driven perception pipelines.
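One way to picture the "identify when tasks succeed or fail" step is a verify‑and‑retry loop. In the sketch below, `attempt` and `verify` are hypothetical callables: in a real system `verify` might wrap a multimodal model call that inspects a camera frame, but here both are simple stand‑ins so the control flow is easy to follow.

```python
from typing import Callable


def run_with_verification(
    attempt: Callable[[], None],
    verify: Callable[[], bool],
    max_tries: int = 3,
) -> int:
    """Run `attempt` until `verify` reports success.

    Returns the number of tries used, or raises RuntimeError if the
    task still fails after `max_tries`.
    """
    for tries in range(1, max_tries + 1):
        attempt()
        if verify():
            return tries
    raise RuntimeError(f"task failed after {max_tries} tries")


if __name__ == "__main__":
    # Simulated gripper that only succeeds on its second attempt.
    state = {"attempts": 0}

    def grasp() -> None:
        state["attempts"] += 1

    def box_in_hand() -> bool:
        return state["attempts"] >= 2

    print(run_with_verification(grasp, box_in_hand))
```

Bounding the number of retries keeps the behavior predictable: the robot either confirms success or stops and reports the failure.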

Natural Language Understanding

Natural language is the most intuitive interaction layer between humans and robots. OpenAI’s language models and SDKs create bridges from speech/text to action.

Instead of programmers hard‑coding instructions, these SDKs allow robots to:

- Interpret conversational tasks

- Ask for clarifications

- Self‑correct

This dramatically reduces barriers for end‑users who are not robotics experts.
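The "ask for clarifications" behavior can be sketched without any model at all. The example below assumes a perception stage has already produced a list of labelled objects; the `resolve_target` function, the `Resolution` type, and the scene labels are hypothetical. When an instruction matches more than one object, the robot returns a question instead of guessing.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Resolution:
    target: Optional[str]    # chosen object, if unambiguous
    question: Optional[str]  # clarification to ask, otherwise


def resolve_target(noun: str, scene: list[str]) -> Resolution:
    """Pick the scene object matching `noun`, or ask which one is meant."""
    # Labels look like "red_box"; match on whole words only.
    matches = [label for label in scene if noun in label.split("_")]
    if len(matches) == 1:
        return Resolution(target=matches[0], question=None)
    if not matches:
        return Resolution(None, f"I can't see a {noun}. Where is it?")
    options = " or ".join(matches)
    return Resolution(None, f"Which {noun} do you mean: {options}?")


if __name__ == "__main__":
    scene = ["red_box", "blue_box", "mug"]
    print(resolve_target("mug", scene).target)
    print(resolve_target("box", scene).question)
```

In a fuller system the question would be spoken to the user and the answer fed back into the same function, but the decision of when to ask is the part worth making explicit.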

Generalization and Learning

Traditional programming forces rigid routines: the robot performs exactly what it was told — nothing more, nothing less.

AI‑powered SDKs enable:

- Task generalization

- Learning from mistakes

- Cross‑environment adaptation

This is key for real‑world deployment beyond test labs.

Real‑World Scenarios: Where SDKs Shine

Industrial Use Cases

In manufacturing, logistics, and automotive plants, humanoids with OpenAI‑powered cognition can assist human workers by:

- Handling repetitive or unsafe tasks

- Moving between workstations autonomously

- Understanding spoken directives from workers

BMW, for example, has tested Figure’s humanoids at its plant in Spartanburg, South Carolina, a promising preview of industrial applications.

Home and Personal Assistance

For household roles, robots need rich interaction skills: conversation, intention inference, and dynamic task planning.

Companies like 1X are exploring this space, with prototypes designed for:

- Carrying groceries

- Tidying rooms

- Learning user preferences

These are early indicators of what consumer‑level humanoids might look like.

Caregiving and Service

Imagine humanoid robots that can:

- Assist elderly people

- Support rehabilitation exercises

- Monitor and respond to medical needs

The SDKs that combine AI, perception, and physical control make these possibilities more tangible.

Challenges and Limitations

Computation and Hardware Constraints

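AI models are computationally heavy, and humanoid robots have limited onboard power and processing. Offloading inference to the cloud relieves the onboard hardware but adds network latency and a dependence on connectivity, so balancing local and cloud computation is still an active engineering challenge.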
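A simple way to frame the local‑versus‑cloud trade‑off is as a routing decision per request. The sketch below is an illustration under assumed numbers: the cloud latency figure, the `Request` fields, and the `choose_backend` policy are all hypothetical rather than measurements or APIs from any real robot.

```python
from dataclasses import dataclass

# Assumed, illustrative round-trip latency for a cloud model call.
CLOUD_LATENCY_MS = 300


@dataclass(frozen=True)
class Request:
    name: str
    deadline_ms: int         # how soon the robot needs an answer
    needs_large_model: bool  # True if onboard models are too small


def choose_backend(req: Request, network_up: bool) -> str:
    """Return "local" or "cloud" for a single inference request."""
    # Without a network, onboard compute is the only option.
    if not network_up:
        return "local"
    # Tight deadlines (balance, collision avoidance) cannot wait for
    # a network round trip, so they stay onboard.
    if req.deadline_ms < CLOUD_LATENCY_MS:
        return "local"
    # Slow, heavy reasoning can go to the larger cloud model.
    return "cloud" if req.needs_large_model else "local"


if __name__ == "__main__":
    print(choose_backend(Request("balance", 10, False), network_up=True))
    print(choose_backend(Request("plan_chore", 2000, True), network_up=True))
```

A real policy would also weigh battery state, bandwidth, and privacy, but even this small version shows why time‑critical control tends to stay onboard.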

Safety and Trust Issues

AI‑driven robots must make decisions in the real world — where mistakes have real consequences. SDKs must embed predictable, transparent behavior to build trust.
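One common pattern for predictable behavior is to pass every AI‑proposed command through a deterministic limit check before execution. This is a minimal sketch of that idea; the limit values, the `JointCommand` type, and the `make_safe` function are hypothetical and stand in for whatever a given robot's control stack provides.

```python
import math
from dataclasses import dataclass

# Hypothetical limits; real values depend on the robot and the task.
MAX_SPEED_RAD_S = 1.0
JOINT_RANGE_RAD = (-2.0, 2.0)


@dataclass(frozen=True)
class JointCommand:
    position_rad: float
    speed_rad_s: float


def make_safe(cmd: JointCommand) -> JointCommand:
    """Reject invalid or out-of-range commands and clamp excessive speeds."""
    low, high = JOINT_RANGE_RAD
    if not math.isfinite(cmd.position_rad) or not low <= cmd.position_rad <= high:
        raise ValueError(f"position {cmd.position_rad} outside [{low}, {high}]")
    if not math.isfinite(cmd.speed_rad_s):
        raise ValueError("speed must be a finite number")
    # Speed is a magnitude here; cap it at the configured maximum.
    speed = min(abs(cmd.speed_rad_s), MAX_SPEED_RAD_S)
    return JointCommand(cmd.position_rad, speed)


if __name__ == "__main__":
    print(make_safe(JointCommand(position_rad=0.5, speed_rad_s=3.0)))
```

Because the check is ordinary deterministic code, its behavior can be reviewed and tested independently of whatever model produced the command.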

Human‑Robot Interaction Complexity

Expecting robots to “understand humans” involves massive contextual and cultural nuance, which is far harder than it seems. SDKs provide a head start, but significant work remains.

Economic and Social Impacts

Widespread humanoid adoption could reshape:

- Labor markets

- Social dynamics

- Care infrastructure

These broader implications require careful planning beyond pure engineering.

Conclusion: Are OpenAI‑Backed SDKs a Game‑Changer?

The short answer: Yes — but with a necessary dose of realism.

OpenAI‑backed SDKs are accelerating humanoid robotics by:

- Providing AI models that make robots intelligent rather than just mechanical

- Reducing development friction

- Enabling natural language, perception, and reasoning capabilities

- Catalyzing investment and industry confidence

However, the journey from prototype to universal humanoids remains long. SDKs are a critical piece of the puzzle, not the entire solution.

For anyone watching the evolution of robotics, the integration of advanced AI — especially through SDKs that empower developers — signals one of the most exciting transitions in modern technology. The true impact of these tools will unfold over years, but the shift toward AI‑driven humanoids is undeniably underway.