In the rapidly advancing field of robotics, machines are increasingly capable of performing tasks that were once the exclusive domain of humans. From autonomous vehicles to AI-assisted surgical robots, the line between human responsibility and robotic action is becoming blurred. One of the most pressing questions in robotics today is: can robots be held liable under existing law? This is not just a theoretical issue; it has real-world implications for …

The Rise of Robotics and Autonomous Systems

Robots and autonomous systems are no longer confined to factory floors or research labs. They are now part of our daily lives, operating in various industries such as transportation, healthcare, and customer service. The rise of artificial intelligence (AI) and machine learning has allowed these machines to perform tasks with increasing autonomy and sophistication. For example, self-driving cars navigate roads, drones deliver packages, and AI-powered systems make decisions that can impact individuals’ li…

As robots become more integrated into society, they take on roles that can have serious consequences if something goes wrong. Imagine an autonomous vehicle causing an accident or a surgical robot malfunctioning during an operation. In such cases, the question arises: who is liable for the damages caused by the robot’s actions?

Legal Liability in the Context of Robots

To understand whether robots can be held liable, we first need to look at how liability works in existing legal systems. Liability generally refers to the legal responsibility for one’s actions, particularly when those actions cause harm to others. In human-centric legal systems, liability is typically assigned to individuals or organizations based on their actions or negligence. However, robots, by their very nature, are not human and cannot be held accountable in the same way.

Current Legal Frameworks

Under current law in most jurisdictions, liability for damages caused by robots typically falls to the manufacturer, developer, or owner of the robot, usually through product liability or negligence claims. In practice, this means that when a robot causes harm, the party responsible for its design, production, or operation is held accountable. For example, if a self-driving car causes an accident, the car manufacturer or the developer of its autonomous driving software may be held responsible.

However, this allocation is rarely straightforward. If a robot malfunctions because of a software bug or hardware failure, it could be argued that the manufacturer is at fault. If, on the other hand, the robot makes a harmful decision because of faulty input data or flawed programming, responsibility could shift to the developers who designed the algorithm.

In some cases, insurance may cover damages caused by robots, but the question of liability remains unresolved in many situations. As robots continue to grow more autonomous, there is an increasing need for new legal frameworks to address these complex issues.

The Problem of Autonomous Decision-Making

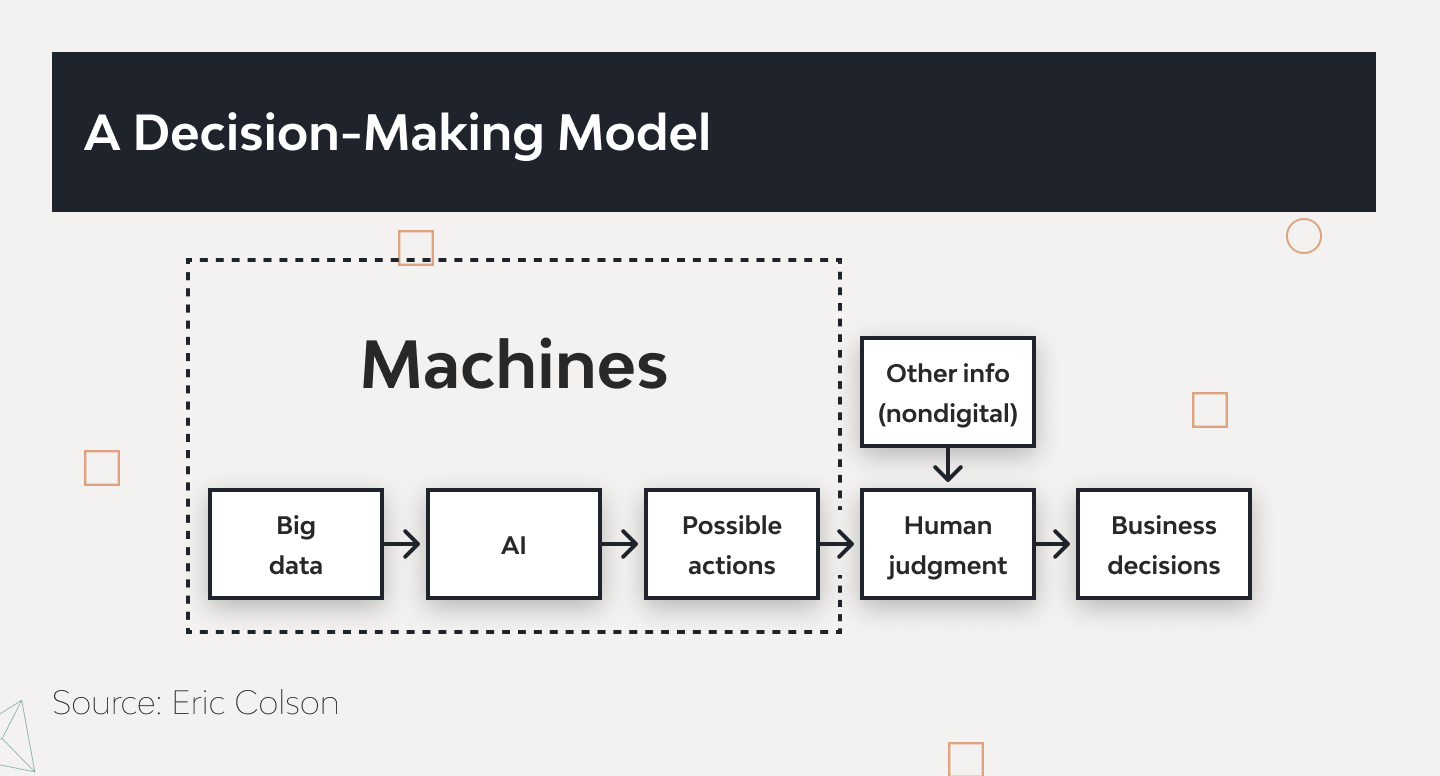

One of the biggest challenges in holding robots liable is their increasing autonomy. Traditional liability laws were designed with human actors in mind, but robots can make decisions without direct human intervention. For example, a self-driving car uses sensors and AI algorithms to make real-time decisions about speed, direction, and navigation. If something goes wrong, it’s often difficult to determine who is at fault because the decision-making process was not directly controlled by a human.

This problem becomes even more pronounced when robots are involved in more complex decision-making scenarios, such as healthcare or military operations. If a surgical robot makes an error that results in harm to a patient, it may be hard to pinpoint exactly where the fault lies—was it the design of the robot, the data used to train the system, or the decisions made by the robot’s AI during the procedure?

The Role of Intent and Accountability

In legal systems, accountability, particularly criminal liability, often hinges on the intent behind an action. Humans can be held responsible because they are capable of intent and of understanding the consequences of what they do. But what about robots? Can a robot's actions be attributed to intent, or is the robot simply following programmed instructions or patterns learned from data?

Robots, unlike humans, do not have consciousness, emotions, or an understanding of their actions. They are tools built to carry out tasks according to their algorithms and programming. This raises the question of whether robots can truly be held accountable for their actions in the way a human can. In a legal sense, holding a robot accountable would require attributing some form of responsibility or intent to its actions, something current legal frameworks are not equipped to do.

Ethical and Moral Considerations

While the legal question of robot liability is important, it is equally crucial to consider the ethical and moral implications of holding robots accountable. As robots become more autonomous, we must ask ourselves whether it is fair to assign them responsibility for their actions, especially when their decisions are based on algorithms created by humans.

In the case of self-driving cars, for example, ethical dilemmas arise when a car must make a split-second choice between two harmful outcomes, such as hitting a pedestrian or swerving and injuring its passengers. How should the car's AI make this decision? Should it prioritize the safety of its passengers or the life of the pedestrian? Questions like these add another layer of complexity to the legal issue of liability.

Moreover, if robots are held liable for their actions, what impact will this have on human responsibility? Will it reduce the accountability of the designers and developers who created these systems, or will it lead to a new era of shared responsibility between humans and robots?

Moving Towards a New Legal Framework

The increasing autonomy of robots and AI systems necessitates the development of new legal frameworks that can address the challenges of liability. In some countries, regulators have already begun to explore the possibility of creating laws specifically tailored to autonomous systems. For example, in the European Union, there have been discussions about creating a legal framework that holds manufacturers of autonomous robots accountable for damages caused by their machines.

These discussions are still in their early stages, and it remains unclear how such laws would be enforced or what criteria would be used to determine liability. However, it is clear that current legal systems are insufficient for addressing the complexities of modern robotics.

Conclusion: The Future of Robot Liability

In conclusion, the question of whether robots can be held liable under existing law is a complex one. While current legal frameworks tend to place responsibility on the manufacturers, developers, or owners of robots, there are significant challenges in determining liability when robots operate autonomously and make decisions without direct human input. The ethical implications of robot liability also add another layer of complexity, as we must consider whether it is fair to assign responsibility to machi…

As robotics and AI continue to evolve, new legal and ethical frameworks will clearly be needed to address the unique challenges posed by autonomous systems. These frameworks will need to weigh human responsibility against the growing autonomy of robots, ensuring that individuals and organizations remain accountable for the actions of their machines while recognizing the limitations of the robots themselves.

Ultimately, the future of robot liability will likely involve a combination of legal, ethical, and technological solutions. As society continues to embrace the potential of robotics, we must also ensure that the law keeps pace with these advancements, creating a fair and just system for all involved.