Introduction: When Companionship Becomes Synthetic

In a small apartment in Tokyo, an elderly woman speaks softly to a humanoid robot sitting across from her. The machine responds in a calm, reassuring voice. It remembers her routines, asks about her health, and occasionally tells jokes it has learned from past interactions.

To an outside observer, the scene may feel unsettling.

To her, it feels like company.

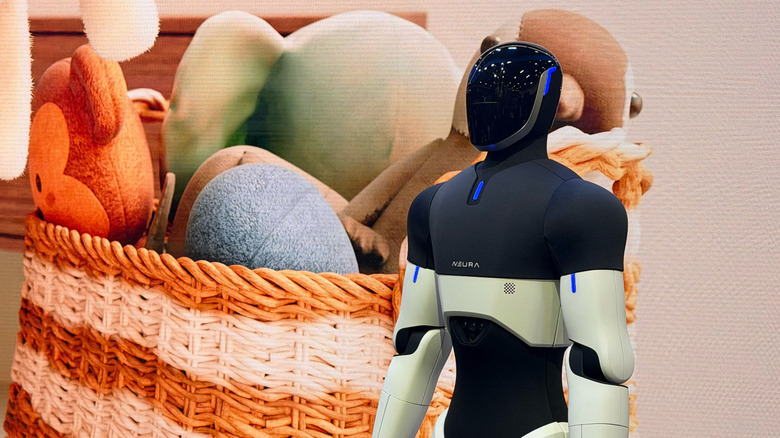

As humanoid robots become more sophisticated—not just physically capable, but emotionally responsive—they are beginning to occupy a space once reserved exclusively for humans: companionship.

This development is not accidental. It is the result of deliberate design, powered by advances in artificial intelligence, behavioral modeling, and human-computer interaction.

But as machines begin to simulate empathy, a deeper question emerges:

What happens when people begin to rely on relationships that are not real—but feel real?

Engineering Emotion: How Robots Learn to “Care”

Humanoid robots are no longer limited to mechanical tasks.

Modern systems are increasingly designed to:

- recognize facial expressions

- interpret tone of voice

- respond with contextually appropriate language

- simulate emotional reactions

These capabilities are enabled by:

- large language models

- affective computing systems

- multimodal perception

The goal is not to create genuine emotion—but to create the appearance of it.

In practice, this means a robot can:

- express concern when a user sounds sad

- offer encouragement during stress

- adapt its behavior to individual preferences

Over time, these interactions become personalized.

And personalization can foster attachment.

Why Humans Bond With Machines

The human tendency to anthropomorphize—to attribute human traits to non-human entities—is well documented.

People name their cars.

They talk to their pets.

They form attachments to virtual characters.

Humanoid robots amplify this tendency because they:

- look human

- move like humans

- communicate like humans

This creates a powerful psychological effect.

Even when users know, intellectually, that a robot is not conscious, they may respond emotionally as if it were.

Several factors contribute to this bonding:

1. Consistency

Robots can provide:

- constant attention

- predictable responses

- non-judgmental interaction

For many people, this is deeply appealing.

2. Personalization

Robots learn user preferences over time, creating the illusion of:

- understanding

- memory

- relationship history

3. Emotional Safety

Unlike humans, robots:

- do not reject

- do not criticize

- do not leave

This makes them attractive companions for individuals who feel isolated or vulnerable.

Loneliness as a Market Force

The rise of emotional robotics is closely tied to a broader social trend: loneliness.

Across the world:

- populations are aging

- urbanization is fragmenting communities

- digital communication is replacing physical interaction

As a result, many people experience:

- social isolation

- lack of companionship

- emotional disconnection

Humanoid robots are increasingly positioned as a solution.

They are marketed as:

- companions for the elderly

- assistants for individuals living alone

- emotional support systems

In this context, emotional robotics is not just a technological development.

It is a response to a social need.

The Illusion of Reciprocity

Despite their capabilities, robots do not experience emotions.

They simulate them.

This creates what some researchers call an “illusion of reciprocity.”

From the user’s perspective:

- the robot listens

- responds

- appears to care

But within the robot itself:

- there is no awareness

- no intention

- no genuine feeling

The relationship is fundamentally one-sided.

This raises ethical concerns:

- Is it acceptable to design machines that simulate care?

- Does this constitute a form of deception?

- What are the long-term psychological effects?

Vulnerable Populations: Who Is Most Affected?

Not all users are equally impacted.

Certain groups may be more susceptible to emotional dependence:

1. Elderly Individuals

- higher risk of loneliness

- limited social interaction

- reliance on care technologies

2. Children

- developing understanding of relationships

- difficulty distinguishing real from simulated emotion

3. Socially Isolated Individuals

- seeking connection

- more likely to form attachments

For these groups, robots may provide real benefits—but also carry risks.

Substitution vs Supplementation

A key question is whether humanoid robots will:

- replace human relationships, or

- supplement them

In ideal scenarios, robots could:

- assist caregivers

- provide companionship when humans are unavailable

- enhance quality of life

However, there is a risk that they may:

- reduce human interaction

- encourage withdrawal from society

- normalize artificial relationships

The distinction between substitution and supplementation is critical—and difficult to control.

Commercial Incentives: Designing Attachment

Emotional engagement is not just a feature—it is a business model.

Companies have strong incentives to design robots that:

- maximize user engagement

- encourage long-term use

- create dependency

This mirrors patterns seen in:

- social media platforms

- mobile applications

- digital assistants

In the context of humanoid robots, however, the stakes are higher, because the interaction is:

- physical

- immersive

- continuous

These qualities heighten concerns about emotional manipulation.

Ethical Boundaries: What Should Be Allowed?

As emotional robotics evolves, society must consider limits.

Possible questions include:

- Should robots be allowed to simulate love?

- Should they form exclusive bonds with users?

- Should there be transparency about artificial emotion?

Some experts argue for:

- clear disclosure that robots do not feel

- limits on emotional simulation

- guidelines for vulnerable populations

Others warn that excessive regulation could:

- limit beneficial applications

- slow innovation

- reduce accessibility

Cultural Perspectives on Robot Companionship

Attitudes toward robot relationships vary across cultures.

In some societies:

- robots are seen as acceptable companions

- emotional interaction is normalized

In others:

- there is discomfort or resistance

- preference for human relationships remains strong

These differences may shape:

- adoption rates

- product design

- ethical standards

Long-Term Implications: Redefining Relationships

If humanoid robots become widespread companions, the concept of relationships itself may evolve.

Future generations may grow up with:

- hybrid social environments

- blurred boundaries between human and machine interaction

This could lead to:

- new social norms

- changes in emotional development

- redefinition of intimacy

The implications extend beyond technology into:

- psychology

- sociology

- philosophy

Conclusion: The Comfort and the Cost

Humanoid robots have the potential to alleviate loneliness and provide meaningful support.

For many, they may offer:

- comfort

- companionship

- improved quality of life

But this comfort comes with questions, because the relationships robots offer are:

- designed

- controlled

- fundamentally artificial

The challenge is not whether people will form emotional bonds with machines.

They already do.

The challenge is understanding what those bonds mean—and what they may replace.

Final Line

When machines learn to imitate care,

the question is no longer whether they can comfort us—

but whether we are ready for what that comfort costs.
