In the world of humanoid robots, perception plays a crucial role. Whether it’s for navigating through a crowded environment, interacting with people, or performing delicate tasks, how a humanoid perceives its surroundings can make or break its effectiveness. Traditional perception systems rely on a combination of sensors, and one of the most widely used is LiDAR (Light Detection and Ranging). LiDAR provides a precise, three-dimensional map of the environment, enabling robots to detect obstacles, recognize features, and navigate autonomously. However, there has been increasing interest in replacing LiDAR with camera-only perception systems. But is it really possible to achieve the same level of precision and reliability with just cameras? Let’s dive deep into this fascinating topic.

The Basics of LiDAR and Camera Perception

Before we explore whether camera-only perception can replace LiDAR, it’s important to understand the roles each sensor plays in robot perception.

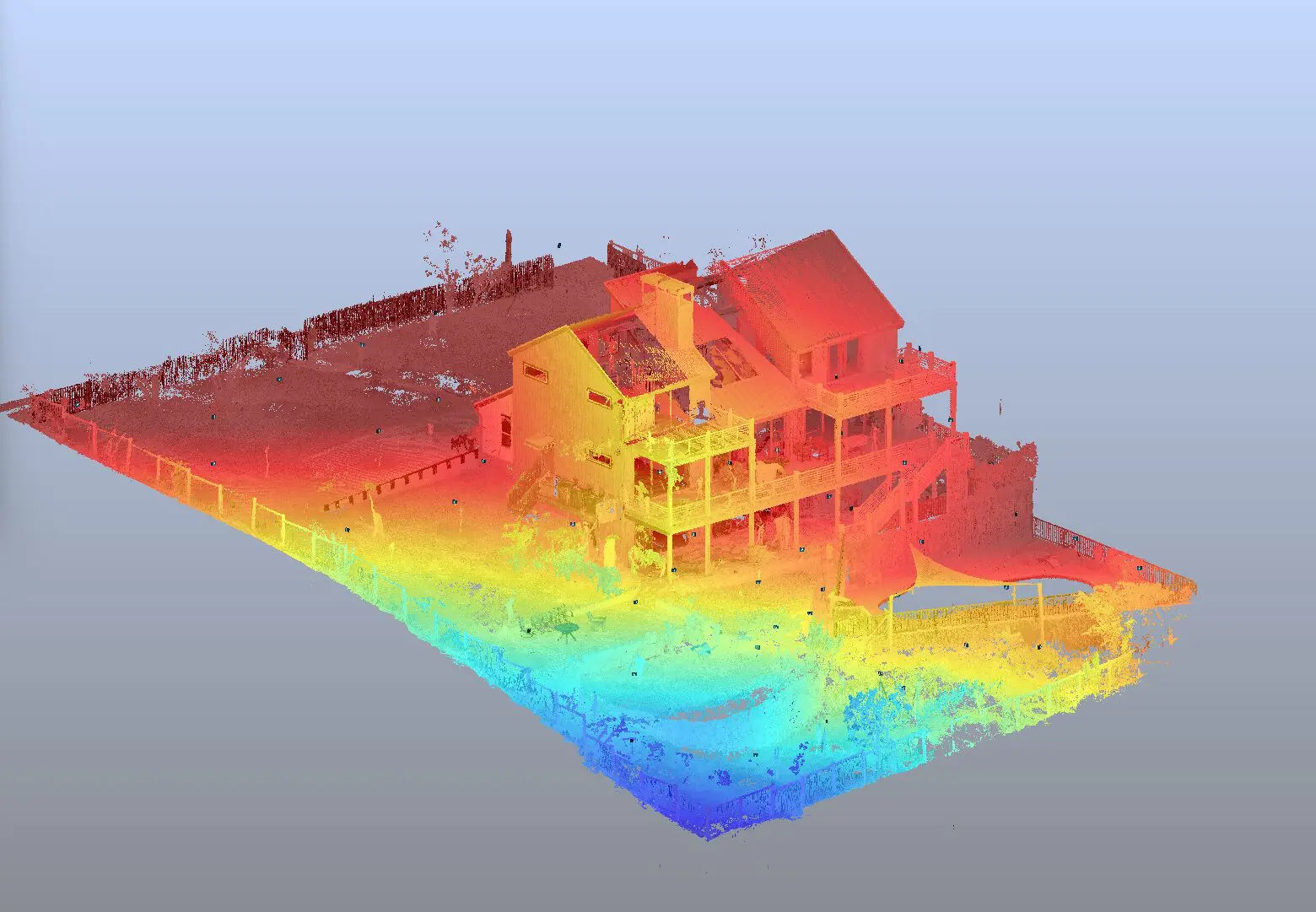

LiDAR: The Gold Standard in Depth Sensing

LiDAR works by emitting laser beams and measuring the time it takes for the beams to bounce back after hitting an object. This process allows LiDAR systems to create highly accurate, three-dimensional maps of the environment, capturing depth information with exceptional precision. LiDAR is particularly useful in environments with poor lighting conditions or where high-precision navigation is required, such as in autonomous vehicles or humanoid robots in industrial settings.
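The time-of-flight principle described above reduces to one line of arithmetic: the pulse travels to the object and back, so the range is half the round-trip distance. The sketch below assumes a simple pulsed time-of-flight sensor (some LiDAR units use other ranging schemes), and the function names are illustrative rather than taken from any real LiDAR driver.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # speed of light in vacuum


def tof_range_m(round_trip_time_s: float) -> float:
    """Range to the target, given the pulse's measured round-trip time."""
    if round_trip_time_s < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse covers the distance twice (out and back), hence the division by 2.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2.0


def range_error_m(timing_error_s: float) -> float:
    """Range error caused by a given error in the measured round-trip time."""
    return SPEED_OF_LIGHT_M_PER_S * abs(timing_error_s) / 2.0


if __name__ == "__main__":
    # Hypothetical reading: a pulse that returns after 40 nanoseconds.
    print(f"range: {tof_range_m(40e-9):.3f} m")
    print(f"error from 1 ns of timing error: {range_error_m(1e-9):.3f} m")
```

A timing error of one nanosecond already corresponds to roughly 15 cm of range, which is why LiDAR accuracy depends so heavily on the sensor's timing electronics.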

Some key advantages of LiDAR:

- Precise Depth Perception: LiDAR measures distance directly, typically to within a few centimeters; high-end units can reach millimeter-level accuracy at short range.

- Works in Low Light: LiDAR supplies its own laser illumination, so unlike cameras it does not depend on ambient light and keeps working in complete darkness.

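- Reliability: LiDAR range readings depend far less on an object’s color or texture than camera-based depth does, making LiDAR suitable for diverse environments. Very dark, transparent, or mirror-like surfaces can still produce weak or misleading returns.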

Cameras: The Eyes of the Robot

Cameras, on the other hand, capture light reflected from objects in the environment. Each image is two-dimensional (2D), but depth can be recovered algorithmically, for example through stereo vision (triangulating between two cameras) or learned depth estimation from a single image. Cameras have several advantages over LiDAR, especially when paired with machine learning models for image processing.

Advantages of camera perception:

- Rich Visual Data: Cameras provide high-resolution color images, allowing the robot to “see” textures, colors, and patterns.

- Low Cost: Cameras are significantly cheaper than LiDAR sensors.

- Size and Weight: Cameras are lightweight and compact, making them ideal for smaller robots.

But there are challenges too. A single camera image carries no direct depth measurement; depth has to be inferred, either by triangulating between two or more views (stereo vision) or with a learned model that predicts depth from image content. Furthermore, cameras struggle in low light or overexposed conditions, making them less reliable than LiDAR in certain environments.

Can Camera-Only Perception Replace LiDAR in Humanoids?

Now, let’s address the core question: Is it possible to replace LiDAR with cameras in humanoid robots?

Advances in Computer Vision and Machine Learning

In recent years, computer vision combined with deep learning has made tremendous strides. With convolutional neural networks (CNNs), robots can analyze and interpret visual data with high accuracy on tasks such as object detection and segmentation. Depth information can be derived from cameras using techniques such as monocular depth estimation (predicting depth from a single image, which recovers relative depth more readily than absolute scale), stereo vision (using two cameras to triangulate depth), and structure-from-motion (estimating 3D structure from multiple 2D images taken from different viewpoints).
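Stereo triangulation is the easiest of these techniques to show in a few lines. For a rectified stereo pair, depth follows from Z = f · B / d, where f is the focal length in pixels, B is the baseline (the distance between the two cameras), and d is the disparity (how far a point shifts between the left and right images). The sketch below assumes an idealized, calibrated and rectified pair; the function name and the numbers are made up for illustration.

```python
def stereo_depth_m(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth of a point from its disparity in a rectified stereo pair."""
    if disparity_px <= 0:
        # Zero disparity means the point is effectively at infinity;
        # a negative value indicates a bad match.
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


if __name__ == "__main__":
    # Hypothetical rig: 700 px focal length, cameras 12 cm apart.
    for disparity in (84.0, 42.0, 21.0):
        depth = stereo_depth_m(focal_px=700.0, baseline_m=0.12, disparity_px=disparity)
        print(f"disparity {disparity:5.1f} px -> depth {depth:.2f} m")
```

Halving the disparity doubles the depth, so distant objects are separated by only a few pixels of disparity. Finding the matching pixel in both images is the hard part in practice and is not shown here.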

These methods allow a robot to understand its surroundings in a similar way to how humans do: by interpreting visual cues, shapes, and distances. Honda’s ASIMO, for example, relied on head-mounted cameras as part of its vision system for navigating and performing tasks.

However, while these advancements have enabled significant progress, there are still some limitations compared to LiDAR. Depth estimation from cameras can be imprecise, especially in challenging environments where lighting is poor, objects have similar colors, or surfaces are reflective. The lack of direct, reliable depth sensing makes cameras less robust in some real-world applications, particularly in complex and dynamic environments.

Limitations of Camera-Only Perception in Humanoids

There are several key challenges that must be addressed before cameras can fully replace LiDAR in humanoid robots:

- Depth Accuracy and Precision: Cameras infer depth rather than measure it, so accuracy varies with distance, surface texture, and lighting; stereo depth error in particular grows rapidly with range. LiDAR, by contrast, measures depth directly and in real time, making it well suited to tasks like collision avoidance, navigation, and precise manipulation. Even advanced camera-based systems can struggle with occlusion (objects blocking others) and challenging lighting conditions, leading to inaccuracies in depth estimation.

- Reliability in Low-Light Environments: Cameras depend on ambient light to function, and they perform poorly in dim or dark environments. LiDAR sensors, on the other hand, can function effectively in complete darkness. For humanoids working in environments like warehouses, construction sites, or emergency situations, the ability to see in low-light conditions could be a critical factor.

- Robustness in Dynamic Environments: In fast-changing scenes (e.g., people walking around a humanoid), camera-based depth can degrade: motion blur smears image detail, and the vision pipeline has to process a large volume of image data within each frame’s time budget. LiDAR returns a direct range measurement for stationary and moving objects alike, which gives more stable results when the environment is unpredictable.

- Computational Overhead: While cameras capture rich, high-resolution data, processing this information in real time requires significant computational power. Extracting accurate depth maps or detecting 3D features makes the burden heavier still. LiDAR sensors, by contrast, deliver depth directly, so producing a usable range map takes comparatively little computation.
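The depth-accuracy limitation can be made concrete for stereo cameras. Differentiating Z = f · B / d with respect to disparity gives an approximate depth error of ΔZ ≈ Z² / (f · B) · Δd, so the error grows with the square of the distance. The sketch below assumes a hypothetical rig (700 px focal length, 12 cm baseline) and a quarter-pixel matching error; these numbers are illustrative, not measurements from any particular robot.

```python
def stereo_depth_error_m(
    depth_m: float,
    focal_px: float,
    baseline_m: float,
    disparity_error_px: float = 0.25,
) -> float:
    """First-order depth uncertainty of a rectified stereo pair at a given depth."""
    if focal_px <= 0 or baseline_m <= 0:
        raise ValueError("focal length and baseline must be positive")
    # From Z = f * B / d:  |dZ/dd| = Z**2 / (f * B)
    return depth_m ** 2 / (focal_px * baseline_m) * disparity_error_px


if __name__ == "__main__":
    for depth in (1.0, 2.0, 5.0, 10.0):
        err = stereo_depth_error_m(depth, focal_px=700.0, baseline_m=0.12)
        print(f"at {depth:4.1f} m: about +/- {err * 100:.1f} cm")
```

Doubling the distance quadruples the expected error under this model, whereas a time-of-flight measurement does not degrade with range in the same way. A wider baseline or a longer focal length reduces the error, but a humanoid's head limits how far apart the cameras can sit.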

The Case for a Hybrid Approach

Given the limitations of camera-only systems and the reliability of LiDAR, a hybrid approach is often the preferred solution in humanoid robots. This approach combines the strengths of both sensors: cameras for detailed visual information and LiDAR for accurate depth sensing. By integrating data from both sources, robots can achieve better accuracy, reliability, and robustness.

For example, a humanoid robot might use cameras to detect objects, recognize faces, or analyze textures, while LiDAR provides precise depth maps for navigation and collision avoidance. The result is a robot that navigates more effectively, adapts to changing environments, and performs tasks with greater precision.
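One simple way to picture how two depth sources might be combined is inverse-variance weighting: each sensor's estimate is weighted by how much it is trusted. This is a minimal sketch, not how any particular robot does it. It assumes both sensors are already calibrated to report depth for the same point and that their errors are independent and roughly Gaussian; the function name and numbers are hypothetical.

```python
import math


def fuse_depth(
    camera_depth_m: float,
    camera_sigma_m: float,
    lidar_depth_m: float,
    lidar_sigma_m: float,
) -> tuple[float, float]:
    """Combine two depth estimates; returns (fused depth, fused standard deviation)."""
    if camera_sigma_m <= 0 or lidar_sigma_m <= 0:
        raise ValueError("standard deviations must be positive")
    # Weight each estimate by the inverse of its variance.
    w_camera = 1.0 / camera_sigma_m ** 2
    w_lidar = 1.0 / lidar_sigma_m ** 2
    fused_depth = (w_camera * camera_depth_m + w_lidar * lidar_depth_m) / (w_camera + w_lidar)
    fused_sigma = math.sqrt(1.0 / (w_camera + w_lidar))
    return fused_depth, fused_sigma


if __name__ == "__main__":
    # Hypothetical readings: camera says 2.10 m (+/- 10 cm), LiDAR says 2.00 m (+/- 2 cm).
    depth, sigma = fuse_depth(2.10, 0.10, 2.00, 0.02)
    print(f"fused depth: {depth:.3f} m, sigma: {sigma:.3f} m")
```

The fused estimate sits close to the more precise sensor, and its uncertainty is smaller than either input's. Real systems typically go further, for example by tracking estimates over time with a Kalman filter.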

The Future of Camera-Only Perception in Humanoids

While LiDAR continues to be an essential component of many humanoid robots, advancements in computer vision and machine learning could eventually lead to fully camera-based perception systems. Researchers are actively exploring ways to improve depth estimation, reduce computational overhead, and enhance performance in low-light or dynamic environments.

For example, researchers are investigating neural radiance fields (NeRFs), which train a neural network on a set of 2D images taken from known viewpoints so that it represents a 3D scene and can render realistic new views of it. Techniques like this could let cameras provide detailed depth information, even in complex environments. Additionally, advances in sensor fusion could enable robots to combine visual data from multiple cameras, improving depth estimation and creating more reliable perception systems.

Conclusion

While camera-only perception in humanoids is still in its developmental stages, it shows tremendous potential. With the rapid advancements in computer vision, deep learning, and sensor fusion, it is conceivable that camera-based systems could one day replace LiDAR in many humanoid applications. However, for now, LiDAR remains the gold standard in depth sensing, and a hybrid approach that combines cameras and LiDAR offers the most reliable and effective solution for humanoid robots.

As technology continues to evolve, we can expect humanoid robots to become more intelligent, more adaptable, and more capable of operating autonomously in a wide range of environments. The future is bright for camera-only perception, but it still has some way to go before it can fully replace LiDAR.