The world of robotics has rapidly evolved, with robots increasingly playing key roles across various industries, from manufacturing to healthcare. As these machines become more integrated into society, the need for human-level perception becomes more pressing. For robots to interact effectively and meaningfully with humans, they must not only be aware of their environment but also be able to perceive it as humans do. The question arises: Are current sensor suites enough to achieve human-level perception in robots?

This article explores the capabilities and limitations of current sensor technologies in robotics, examines the challenges that robots face in replicating human sensory experiences, and delves into the future of sensor systems. Through this discussion, we will consider what advancements are necessary for robots to perceive and understand the world as humans do.

The Evolution of Sensor Suites in Robotics

In the early days of robotics, sensors were relatively simple, often limited to proximity or motion detection. These early robots operated in structured, controlled environments where complex human-like perception wasn’t a necessity. However, as robots began to work alongside humans in more dynamic and unpredictable settings, such as homes or healthcare environments, the need for more sophisticated sensors became clear.

Today, robots are equipped with sensor suites that combine several kinds of sensors, such as:

- Cameras and Visual Sensors (RGB, Depth, Infrared): These sensors capture visual information and help robots understand their surroundings by analyzing images or video feeds. They are crucial for tasks like object detection, facial recognition, and navigation.

- LiDAR (Light Detection and Ranging): A LiDAR unit emits laser pulses and typically measures how long their reflections take to return. The resulting distances are assembled into a 3D map of the robot’s environment. This is particularly useful for autonomous vehicles and robots navigating unfamiliar spaces.

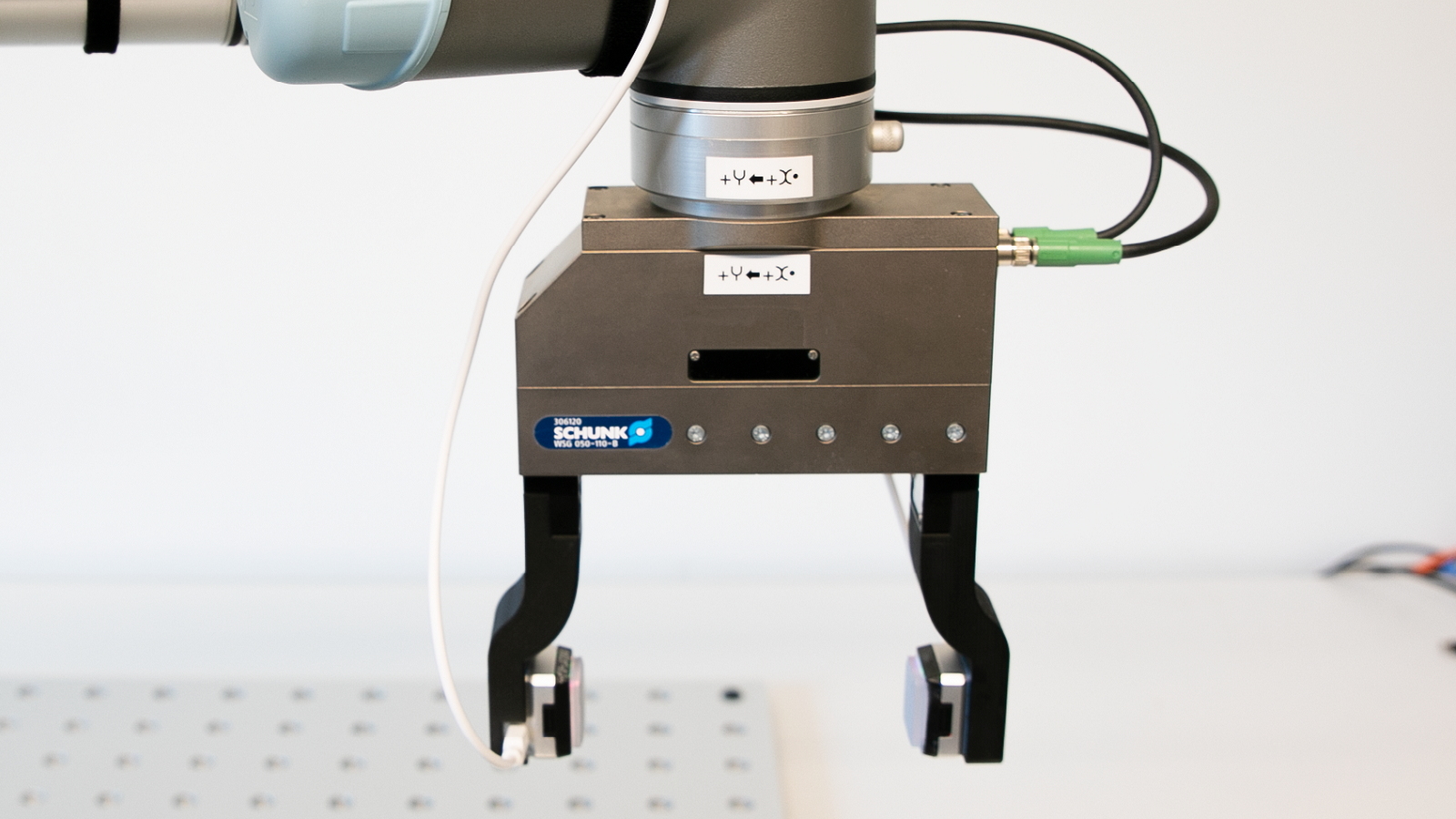

- Touch Sensors (Tactile): Touch sensors allow robots to feel their environment. These sensors can detect force, pressure, and temperature, helping robots interact with objects delicately, such as when assembling or holding an object.

- Auditory Sensors (Microphones): Microphones help robots detect sounds, including human speech. This is a fundamental component for natural language processing and human-robot interaction.

- Proximity Sensors: These sensors help robots detect nearby objects, allowing them to avoid obstacles and plan paths accordingly.
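To make the LiDAR item in the list above more concrete, here is a minimal sketch of the time-of-flight idea, assuming a pulsed sensor that reports a round-trip time per beam. The function names `tof_to_range` and `scan_to_points`, and the planar scan layout (one range per evenly spaced beam), are illustrative assumptions rather than any particular device's interface.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0  # speed of light in vacuum, metres per second


def tof_to_range(round_trip_s: float) -> float:
    """Distance to a target from the round-trip time of a laser pulse."""
    # The pulse travels to the target and back, so halve the path length.
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


def scan_to_points(ranges_m, angle_min_rad, angle_step_rad):
    """Convert a planar scan (one range per beam) into (x, y) points."""
    points = []
    for i, r in enumerate(ranges_m):
        if r is None or not math.isfinite(r):
            continue  # this beam produced no usable return
        theta = angle_min_rad + i * angle_step_rad
        points.append((r * math.cos(theta), r * math.sin(theta)))
    return points
```

A round trip of 20 nanoseconds corresponds to a target roughly 3 metres away, which gives a sense of the timing precision such a sensor needs.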

While these technologies represent significant progress, they are still far from achieving the nuanced and robust perception of a human. To understand why, we need to explore the inherent differences between how humans and robots perceive the world.

The Human Advantage: Multimodal Perception

Humans don’t rely on a single sensory input to interact with the world. Our brains integrate information from various sensory modalities—sight, hearing, touch, taste, and smell—to form a coherent understanding of our environment. This is called multimodal perception.

For instance, when you interact with a cup of coffee, you don’t just see it (visual perception); you also feel the warmth (tactile perception), smell the aroma (olfactory perception), and perhaps even hear the subtle sound of the liquid stirring (auditory perception). All of these inputs are processed together, allowing you to understand the context and interact with the object effectively.

In contrast, most robots today rely on a single sensory input or a small subset of them. While some advanced robots can integrate data from multiple sensors, the richness and depth of their perception are still limited. A robot might detect an object in its visual field, but it may not “understand” it in the same multifaceted way a human would, lacking the ability to integrate other sensory cues or contextual understanding.
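One simple way a robot could combine cues from several modalities is to let each modality produce class probabilities and then merge them. The sketch below multiplies the per-modality probabilities and renormalises, which assumes the modalities are conditionally independent and that no class is favoured in advance. The function name `fuse_modalities` and the labels are hypothetical, chosen only to echo the coffee cup example.

```python
def fuse_modalities(per_modality_probs):
    """Combine per-modality class probabilities by multiplying and renormalising.

    per_modality_probs: list of dicts mapping label -> probability,
    all using the same set of labels.
    """
    labels = list(per_modality_probs[0].keys())
    scores = {label: 1.0 for label in labels}
    for probs in per_modality_probs:
        for label in labels:
            scores[label] *= probs[label]
    total = sum(scores.values())
    if total == 0.0:
        raise ValueError("modalities assign zero probability to every label")
    return {label: score / total for label, score in scores.items()}


# Hypothetical outputs of a vision model and a tactile model.
vision = {"cup": 0.6, "bowl": 0.4}
touch = {"cup": 0.7, "bowl": 0.3}
fused = fuse_modalities([vision, touch])
```

Here two moderately confident cues reinforce each other: the fused probability for "cup" comes out at about 0.78, higher than either modality reported alone.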

Why Current Sensor Suites Fall Short

There are several reasons why current sensor suites in robots struggle to replicate human-level perception:

- Limited Sensor Fusion: While robots may use multiple sensors, the integration of sensory data is not always seamless. Robots may struggle to combine visual, auditory, and tactile information in real time, leading to incomplete or inaccurate perceptions of their environment. Humans, on the other hand, combine sensory information effortlessly, which aids in perception and decision-making.

- Lack of Depth in Sensory Inputs: Human sensory inputs are highly detailed and rich. For example, humans can perceive subtle textures with their sense of touch or hear a whisper from a distance. Robot sensors, on the other hand, often provide a more superficial level of data. While cameras can detect objects, they struggle to fully grasp the texture, weight, or emotional significance of those objects in the same way humans do.

- Context Awareness: Humans can interpret sensory data within the context of their environment and experiences. For instance, we can hear a faint sound in a crowded room and instantly recognize it as a friend calling our name, even if there are many other noises around. Current robot perception systems lack this level of contextual awareness, making it difficult for them to prioritize and interpret sensory data effectively.

- Sensitivity to Dynamic Changes: The world around us is constantly changing, and humans have an incredible ability to adapt to these changes. For example, we can adjust our visual perception in low light or rapidly change our focus when new objects appear. While robots are improving in this area, most sensor suites are still slow to adapt to dynamic environments, and many are unable to recognize subtle changes in real time.
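As an illustration of what sensor fusion involves at its simplest, the sketch below merges several noisy measurements of the same quantity, such as the distance to an obstacle reported by two different sensors. It uses inverse-variance weighting, a standard textbook technique that assumes independent, unbiased measurements with known variances. The function name `fuse_estimates` and the example numbers are assumptions for illustration only.

```python
def fuse_estimates(estimates):
    """Fuse (value, variance) pairs by inverse-variance weighting.

    Returns the fused value and its variance. More certain measurements
    (smaller variance) receive proportionally more weight.
    """
    if not estimates:
        raise ValueError("need at least one estimate")
    weights = []
    for _, variance in estimates:
        if variance <= 0:
            raise ValueError("variances must be positive")
        weights.append(1.0 / variance)
    total_weight = sum(weights)
    fused_value = sum(w * v for w, (v, _) in zip(weights, estimates)) / total_weight
    fused_variance = 1.0 / total_weight
    return fused_value, fused_variance


# Hypothetical readings: a depth camera (noisier) and a LiDAR (more precise).
value, variance = fuse_estimates([(2.0, 0.04), (2.2, 0.01)])
```

With these inputs the fused distance is about 2.16 m, pulled toward the more precise reading, and the fused variance (about 0.008) is lower than that of either sensor alone. Real systems face harder versions of this problem, including timing offsets between sensors and measurements that are not independent.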

The Role of Machine Learning in Enhancing Perception

One promising avenue for improving robot perception is machine learning. Through training, robots can learn to recognize patterns and make sense of complex data. For instance, robots equipped with advanced computer vision algorithms can be trained to recognize objects, faces, and even emotions in images and videos.
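To give a feel for what "learning from training" means at the smallest scale, here is a sketch of a nearest-centroid classifier: it averages the labeled examples for each class and assigns new readings to the closest average. This is a deliberately simple stand-in, not the kind of deep model used for real computer vision, and the two-number feature vectors and the labels "hard" and "soft" are hypothetical.

```python
import math


def train_centroids(samples):
    """samples: list of (feature_vector, label). Returns label -> mean vector."""
    sums, counts = {}, {}
    for features, label in samples:
        if label not in sums:
            sums[label] = [0.0] * len(features)
            counts[label] = 0
        sums[label] = [s + f for s, f in zip(sums[label], features)]
        counts[label] += 1
    return {label: [s / counts[label] for s in sums[label]] for label in sums}


def classify(centroids, features):
    """Return the label whose centroid is closest in Euclidean distance."""
    return min(centroids, key=lambda label: math.dist(centroids[label], features))


# Hypothetical tactile features: (stiffness, surface give), both scaled to 0..1.
training = [
    ([0.9, 0.1], "hard"),
    ([0.8, 0.2], "hard"),
    ([0.2, 0.7], "soft"),
    ([0.1, 0.9], "soft"),
]
centroids = train_centroids(training)
label = classify(centroids, [0.7, 0.3])  # closest to the "hard" centroid
```

Even in this toy form, the classifier is only as good as the examples it was given, which is the dependence on data discussed next.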

However, while machine learning can improve a robot’s ability to process sensory data, it still relies heavily on the quality and quantity of the data being fed into the system. In general, the more varied and representative the training data, the better a robot can learn to perceive the world. This presents challenges, particularly in terms of data collection, privacy, and the computational resources required to process large datasets.

Moreover, robots’ learning abilities are still relatively limited. While they can process large volumes of data quickly, they lack the level of common sense reasoning that humans use to interpret sensory information. Humans can understand abstract concepts and make decisions based on incomplete or ambiguous data—an area where robots still fall short.

The Future of Sensor Technology in Robotics

As robotics continues to advance, new sensor technologies are emerging that could enhance a robot’s perception of the world. Here are some promising innovations:

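- Neuromorphic Sensors: These sensors are modeled on biological sensory systems. Rather than sampling at a fixed rate, they typically signal only when their input changes. Because this produces less redundant data, neuromorphic sensors could allow robots to process sensory data more efficiently, enabling them to make quicker decisions and adapt to dynamic environments.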

- Multisensory Integration: Future robots may be equipped with even more advanced sensor suites that allow for more seamless integration of sight, sound, touch, and even smell. This could allow robots to have a richer, more nuanced understanding of their surroundings.

- Quantum Sensors: Quantum technology has the potential to revolutionize many fields, including robotics. Quantum sensors could provide robots with unprecedented sensitivity and accuracy in detecting minute changes in their environment, such as detecting subtle chemical signatures or measuring minute temperature fluctuations.

- Advanced AI and Cognitive Systems: Combining advanced machine learning with cognitive systems may allow robots to interpret sensory data in a more human-like manner. These systems could enable robots to recognize emotions, understand complex social interactions, and make more nuanced decisions based on context and experience.
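A well-known example of a neuromorphic sensor is the event-based camera, which reports brightness changes at individual pixels instead of full frames. The sketch below imitates that behaviour by comparing two ordinary frames. This is a simplification: real event cameras work asynchronously per pixel and usually respond to changes in log intensity. The function name `frame_to_events` and the default threshold are illustrative assumptions.

```python
def frame_to_events(previous, current, threshold=0.1):
    """Emit (row, col, polarity) for pixels whose brightness changed enough.

    previous, current: 2D lists of brightness values with the same shape.
    threshold: positive minimum change needed to trigger an event.
    polarity is +1 for a brightness increase and -1 for a decrease.
    """
    events = []
    for r, (prev_row, curr_row) in enumerate(zip(previous, current)):
        for c, (p, q) in enumerate(zip(prev_row, curr_row)):
            delta = q - p
            if abs(delta) >= threshold:
                events.append((r, c, 1 if delta > 0 else -1))
    return events


before = [[0.5, 0.5], [0.5, 0.5]]
after = [[0.5, 0.9], [0.2, 0.5]]
events = frame_to_events(before, after)  # [(0, 1, 1), (1, 0, -1)]
```

Only the two pixels that changed generate output. In a mostly static scene, this keeps the data volume far below that of a conventional camera.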

Conclusion: The Road Ahead for Human-Like Perception in Robots

While current sensor suites in robots are impressive, they are still far from achieving human-level perception. To match human sensory capabilities, robots need to integrate sensory data more seamlessly, interpret context more effectively, and adapt to dynamic environments in real time.

The future of robot perception lies in the continued development of advanced sensor technologies, machine learning algorithms, and cognitive systems. As these technologies improve, robots will become better at perceiving the world in a more human-like way, which could open up new possibilities for human-robot collaboration, healthcare, and beyond.