End-to-end motion learning is an exciting frontier in humanoid control: robots learn complex movements and tasks directly from raw sensory data rather than from handcrafted rules or explicitly programmed motions. This paradigm reduces the dependence on traditional techniques such as inverse kinematics and trajectory planning, opening new avenues for building more versatile and adaptable humanoid robots.

This article explores what end-to-end motion learning is, how it is applied to humanoid control, its benefits and challenges, and the techniques that make it possible. Along the way, we’ll consider its impact on robotics, AI, and beyond.

Understanding End-to-End Motion Learning

What Is End-to-End Motion Learning?

End-to-end motion learning is a machine learning paradigm in which a robot learns to map raw sensory data, such as camera images, force readings, and joint angles, directly to motor commands. It needs no hand-designed intermediate stages or predefined motion scripts. Instead of programming a robot’s movements through explicit commands or engineering a complex control stack, we let the robot learn how to move. It does this by interacting with its environment and adapting its behavior over time, with deep learning used to represent the mapping.

This approach contrasts with traditional methods, which rely on hand-coded solutions or modular systems in which each component (such as perception, planning, or control) is designed separately. End-to-end learning instead trains the whole mapping jointly. This gives a more flexible and holistic approach to humanoid control, where the robot itself learns effective actions from experience and feedback.
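To make the contrast concrete, here is a minimal sketch of an end-to-end policy in plain Python. It is a two-layer neural network that maps a raw observation vector (for example, joint angles plus inertial readings) straight to one command per joint. There is no separate perception, planning, or inverse-kinematics module in between. The class name `EndToEndPolicy`, the layer sizes, and the tanh activations are illustrative assumptions. The weights here are random; training them is the job of the learning methods described in the rest of this article.

```python
import math
import random
from typing import List, Tuple

Vector = List[float]
Matrix = List[List[float]]


def init_layer(n_in: int, n_out: int, rng: random.Random) -> Tuple[Matrix, Vector]:
    """Random weights scaled by 1/sqrt(n_in) and zero biases."""
    scale = 1.0 / math.sqrt(n_in)
    weights = [[rng.gauss(0.0, scale) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


def dense(x: Vector, weights: Matrix, biases: Vector) -> Vector:
    """Fully connected layer: one weighted sum plus bias per output unit."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, biases)]


class EndToEndPolicy:
    """Hypothetical policy mapping a raw observation directly to one command per joint.

    There is no separate perception, planning, or inverse-kinematics stage:
    everything between sensors and actuators is a learnable parameter.
    """

    def __init__(self, obs_dim: int, hidden_dim: int, num_joints: int, seed: int = 0):
        rng = random.Random(seed)
        self.w1, self.b1 = init_layer(obs_dim, hidden_dim, rng)
        self.w2, self.b2 = init_layer(hidden_dim, num_joints, rng)

    def act(self, observation: Vector) -> Vector:
        hidden = [math.tanh(h) for h in dense(observation, self.w1, self.b1)]
        # tanh keeps each command in [-1, 1]; a real robot would rescale to actuator limits.
        return [math.tanh(a) for a in dense(hidden, self.w2, self.b2)]


# Hypothetical observation: 4 joint angles (rad) followed by 2 IMU tilt readings (rad).
policy = EndToEndPolicy(obs_dim=6, hidden_dim=16, num_joints=4, seed=1)
commands = policy.act([0.1, -0.2, 0.0, 0.5, 0.3, -0.1])
```

A real controller would call `act` at a fixed control rate. In practice, a deep-learning framework would replace the hand-written matrix arithmetic and compute the gradients needed for training.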

The Role of Deep Learning in Motion Learning

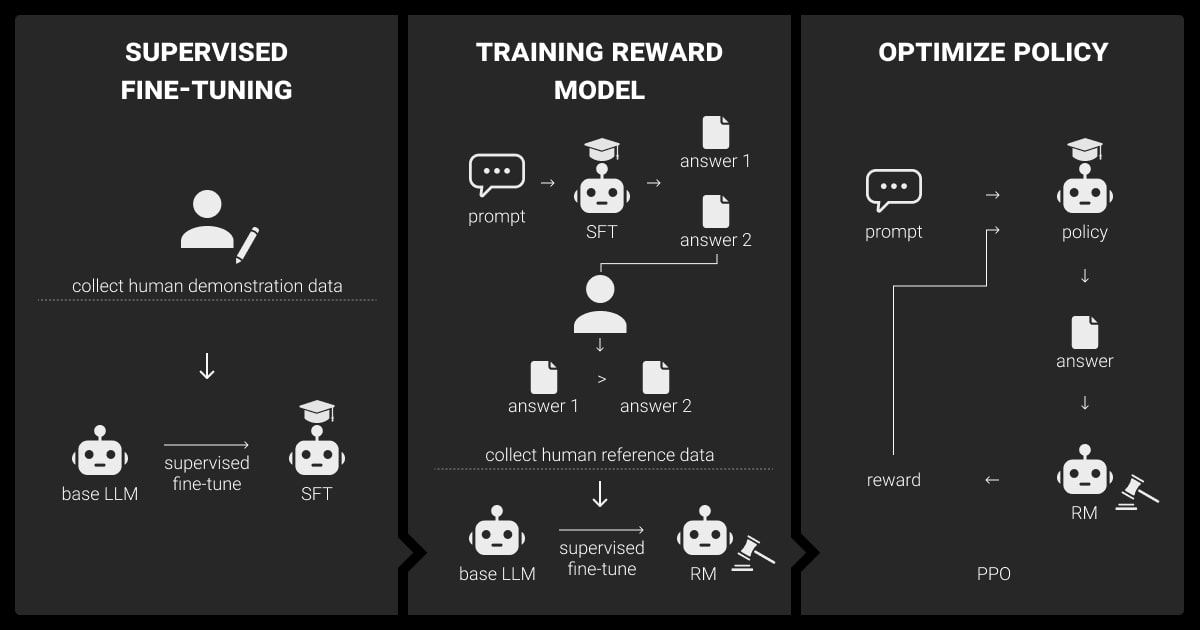

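At the core of end-to-end motion learning lies deep learning, usually combined with reinforcement learning (RL). Convolutional neural networks (CNNs) typically process camera images. Recurrent neural networks (RNNs) carry information across time steps, such as the recent history of joint states. Together, these techniques allow the robot to: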

- Perceive the environment: Cameras, force sensors, and other inputs provide a raw stream of sensory data.

- Learn to associate actions with outcomes: The robot explores its environment and adjusts its movements based on trial and error, using rewards and punishments as feedback mechanisms.

- Generalize across tasks: Ideally, the trained model carries over to related tasks and environments, adapting to new situations without extensive retraining. In practice, this is one of the harder goals to achieve.
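The idea of associating actions with outcomes can be shown on the smallest possible problem: a one-step choice between a few actions, each of which returns a reward. The sketch below uses a gradient-bandit update, the single-step form of the REINFORCE policy-gradient rule, with a running-average reward baseline. The three actions and their reward values are hypothetical stand-ins, for example three candidate foot placements. Real tasks involve long sequences of actions and noisy rewards.

```python
import math
import random
from typing import List


def softmax(prefs: List[float]) -> List[float]:
    m = max(prefs)  # subtract the max for numerical stability
    exps = [math.exp(p - m) for p in prefs]
    total = sum(exps)
    return [e / total for e in exps]


def learn_from_rewards(rewards: List[float], steps: int = 3000,
                       lr: float = 0.1, seed: int = 0) -> List[float]:
    """Gradient-bandit (one-step REINFORCE) learning; returns final action probabilities."""
    rng = random.Random(seed)
    prefs = [0.0] * len(rewards)  # no initial preference: uniform random policy
    baseline = 0.0                # running estimate of the average reward
    for _ in range(steps):
        probs = softmax(prefs)
        action = rng.choices(range(len(prefs)), weights=probs)[0]  # trial: sample an action
        reward = rewards[action]                                    # outcome: observe reward
        advantage = reward - baseline                               # better or worse than usual?
        for i in range(len(prefs)):
            indicator = 1.0 if i == action else 0.0
            # Raise the chosen action's preference if it beat the baseline, lower it otherwise.
            prefs[i] += lr * advantage * (indicator - probs[i])
        baseline += 0.1 * (reward - baseline)
    return softmax(prefs)


probs = learn_from_rewards([0.2, 1.0, 0.5])  # hypothetical rewards for three actions
```

After training, most of the probability should sit on the second action, the one with the highest reward. No rule ever named it as the correct choice.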

How Does End-to-End Learning Work in Humanoid Robots?

Humanoid robots, designed to resemble and move like humans, have an intricate set of mechanical joints and actuators that allow complex movements such as walking, running, balancing, and manipulating objects. End-to-end motion learning applies to these robots by treating the entire pipeline, from sensory input to actuation, as one learned system.

Here’s how the end-to-end learning process works in practice for humanoid control:

- Data Collection: The robot’s sensors (vision, touch, accelerometers, etc.) gather environmental data and internal states (like joint positions and velocities).

- Neural Network Training: A deep neural network processes this raw data and learns the relationship between the robot’s actions and the resulting sensory feedback. Skills learned this way might include walking across uneven terrain, picking up an object, or climbing stairs.

- Decision Making: The neural network outputs control signals that directly drive the robot’s actuators, telling the robot how to move its limbs, adjust its posture, or interact with objects in real time.

- Continuous Feedback: The system continuously refines its control strategy through reinforcement learning, where it is rewarded for successful tasks and penalized for failure, iterating over many cycles until the robot has mastered the task.
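The four steps above can be sketched as one loop on a deliberately tiny problem. The example makes several assumptions:

- a linearized one-dimensional "lean" model that falls over if left alone;
- a linear feedback policy with two gains;
- a per-step reward that pays a bonus for staying upright and subtracts costs for leaning, moving, and using torque.

To improve the policy, it uses simple random search: perturb the gains and keep the change if the episode return improves. This is a derivative-free stand-in for the deep RL algorithms used on real humanoids. All constants are hypothetical.

```python
import random
from typing import Tuple

DT = 0.02            # hypothetical 50 Hz control period, in seconds
INSTABILITY = 9.0    # hypothetical gravity-like term: with no control, the lean grows
FALL_ANGLE = 0.5     # hypothetical lean (rad) beyond which the robot counts as fallen


def rollout(gains: Tuple[float, float], steps: int = 250, start_angle: float = 0.1) -> float:
    """Run one episode of a linearized 1-D lean model and return the total reward."""
    k_angle, k_rate = gains
    angle, rate, total = start_angle, 0.0, 0.0
    for _ in range(steps):
        # Data collection: the observation is just (angle, rate).
        # Decision making: a linear policy turns the observation into a torque.
        torque = -(k_angle * angle + k_rate * rate)
        # Actuation: semi-implicit Euler step of the unstable dynamics.
        rate += DT * (INSTABILITY * angle + torque)
        angle += DT * rate
        if abs(angle) > FALL_ANGLE:
            return total - 100.0  # falling ends the episode with a large penalty
        # Feedback: bonus for staying up, minus costs for leaning, motion, and effort.
        total += 1.0 - (angle ** 2 + 0.1 * rate ** 2 + 0.001 * torque ** 2)
    return total


def random_search(iterations: int = 500, noise: float = 2.0, seed: int = 0):
    """Keep a random perturbation of the gains whenever it improves the return."""
    rng = random.Random(seed)
    best = (0.0, 0.0)
    best_return = rollout(best)
    for _ in range(iterations):
        candidate = (best[0] + rng.gauss(0.0, noise), best[1] + rng.gauss(0.0, noise))
        candidate_return = rollout(candidate)
        if candidate_return > best_return:
            best, best_return = candidate, candidate_return
    return best, best_return


untrained_return = rollout((0.0, 0.0))
learned_gains, learned_return = random_search()
```

With zero gains, the model falls within the first second and the return is negative. After the search, the learned gains should keep it upright for the whole 250-step episode.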

Applications of End-to-End Motion Learning in Humanoid Robots

Autonomous Walking and Balancing

One of the most prominent applications of end-to-end motion learning in humanoid robots is autonomous walking and balancing. Teaching a robot to walk naturally is difficult and has traditionally required many preprogrammed rules. With end-to-end learning, humanoid robots instead learn to walk through reinforcement learning, typically first in simulation. Early in training they stumble and fall, but through repeated trials they improve their balance and coordination. Eventually, they can walk across different terrains with impressive stability.

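Not every high-profile demonstration relies on this approach, however. Boston Dynamics’ Atlas robot is often mentioned in this context. Its well-known backflips and parkour routines, however, were achieved largely with model-based control, such as model predictive control and offline trajectory optimization, rather than end-to-end learning. Learned walking policies have instead been demonstrated on robots such as Agility Robotics’ bipedal Cassie. Cassie was trained with reinforcement learning in simulation and the resulting policy was then transferred to the physical robot.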
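In reinforcement-learning setups for locomotion, learning to walk is usually driven by a shaped reward that combines several terms. The sketch below is a hypothetical example. It assumes the simulator exposes forward velocity, torso tilt, joint torques, and a fall flag. The term weights are illustrative, not tuned values from any particular system.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class WalkState:
    forward_velocity: float         # m/s along the commanded heading
    torso_tilt: float               # radians away from upright
    joint_torques: Sequence[float]  # N*m, one entry per actuated joint
    fallen: bool                    # True once the torso or a knee touches the ground


def walking_reward(s: WalkState, target_velocity: float = 1.0) -> float:
    """Hypothetical shaped reward for one control step; weights are illustrative."""
    if s.fallen:
        return -10.0                                        # strongly discourage falling
    velocity_term = -abs(s.forward_velocity - target_velocity)  # track the desired speed
    upright_term = -0.5 * s.torso_tilt ** 2                 # stay balanced
    energy_term = -0.001 * sum(t * t for t in s.joint_torques)  # prefer efficient gaits
    alive_bonus = 1.0                                       # reward every step spent upright
    return alive_bonus + velocity_term + upright_term + energy_term
```

Changing these weights changes the gait the robot ends up learning. Much practical effort goes into this kind of reward design.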

Robotic Manipulation and Object Interaction

Another area where end-to-end motion learning shines is robotic manipulation, where robots must handle objects. End-to-end learning allows humanoid robots to learn to grasp, manipulate, and place objects directly from sensory input such as vision data or force feedback.

In a real-world application, robots can be trained to pick up various objects and place them in specific locations based purely on visual data. Through trial and error, the robot learns the optimal grip strength, positioning of its arms, and angles of motion to successfully complete tasks. This can be particularly useful in industrial environments, where robots perform assembly tasks, sort objects, or assist in warehouses.
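Learning grip strength by trial and error can be sketched with the cross-entropy method (CEM), a simple population-based search. It samples candidate forces, keeps the best-scoring ones, refits the sampling distribution, and repeats. The object model is a hypothetical assumption. The object slips below 4 N and is crushed above 12 N, and the reward prefers the gentlest grip that still holds. A real system would score grasps from vision and force sensing rather than from known thresholds.

```python
import random
import statistics

SLIP_FORCE = 4.0    # hypothetical: below this grip force (N) the object slips
CRUSH_FORCE = 12.0  # hypothetical: above this force (N) the object is damaged


def grasp_reward(force: float) -> float:
    """1 minus a small effort cost for a successful grasp, 0 for slipping or crushing."""
    if force < SLIP_FORCE or force > CRUSH_FORCE:
        return 0.0
    return 1.0 - 0.02 * force


def cross_entropy_grip(iterations: int = 20, samples: int = 50, elites: int = 10,
                       mean: float = 8.0, std: float = 4.0, seed: int = 0) -> float:
    """Return the learned mean grip force after repeated trial and error."""
    rng = random.Random(seed)
    for _ in range(iterations):
        trials = [rng.gauss(mean, std) for _ in range(samples)]  # try several grips
        trials.sort(key=grasp_reward, reverse=True)               # rank by outcome
        best = trials[:elites]                                    # keep the top few
        mean = statistics.mean(best)                              # refit the distribution
        std = max(statistics.pstdev(best), 0.05)                  # keep some exploration
    return mean


learned_force = cross_entropy_grip()
```

The learned force should settle just above the slip threshold. That is the firmest grip needed, and no firmer.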

Human-Robot Interaction

End-to-end motion learning also enhances human-robot interaction (HRI). By learning from real-time sensory feedback, humanoid robots can engage in more natural, adaptive interactions with humans. For example, a humanoid robot could learn to recognize human gestures and respond accordingly. This makes robots more intuitive and responsive, which is vital for applications like caregiving or customer service.

For instance, a robot could be trained to respond to a human’s hand wave or body posture by mimicking a movement or offering assistance. In this way, robots are able to learn and interact with humans without needing explicit programming for each individual situation.
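Learning gesture responses from examples, rather than scripting each case, can be illustrated with a nearest-centroid classifier trained on a few labeled feature vectors. This is a deliberately tiny stand-in for a deep network. The two features (hand height relative to the shoulder and side-to-side hand speed), the example values, and the response table are all hypothetical.

```python
import math
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple

Features = Tuple[float, float]  # (hand height above shoulder in m, side-to-side hand speed in m/s)


def fit_centroids(examples: Sequence[Tuple[Features, str]]) -> Dict[str, Features]:
    """Average the feature vectors of each labeled gesture."""
    grouped: Dict[str, List[Features]] = defaultdict(list)
    for features, label in examples:
        grouped[label].append(features)
    return {
        label: (sum(p[0] for p in pts) / len(pts), sum(p[1] for p in pts) / len(pts))
        for label, pts in grouped.items()
    }


def classify(centroids: Dict[str, Features], features: Features) -> str:
    """Pick the gesture whose centroid is closest in feature space."""
    return min(centroids, key=lambda label: math.dist(centroids[label], features))


# Hypothetical labeled demonstrations and the response the robot should give to each.
EXAMPLES = [
    ((0.30, 0.80), "wave"), ((0.25, 0.90), "wave"), ((0.35, 0.70), "wave"),
    ((0.00, 0.30), "beckon"), ((0.05, 0.25), "beckon"),
    ((-0.40, 0.05), "idle"), ((-0.35, 0.00), "idle"),
]
RESPONSES = {"wave": "wave back", "beckon": "approach the person", "idle": "continue current task"}

centroids = fit_centroids(EXAMPLES)
response = RESPONSES[classify(centroids, (0.28, 0.85))]
```

Adding a new gesture means adding labeled examples and a response, not writing a new rule. A learned system replaces the hand-picked features with ones extracted from raw sensor data.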

Challenges and Limitations

While end-to-end motion learning has shown promising results in humanoid control, there are still several challenges that need to be addressed.

1. Data Efficiency

Deep learning models often require vast amounts of data to train effectively. For humanoid robots, this can mean many hours of real-world trials, which are both time-consuming and costly. Simulation environments help by generating experience quickly and safely. However, they still fail to capture the full complexity and unpredictability of the real world. As a result, policies trained in simulation can perform worse on the physical robot, a problem often called the sim-to-real gap.
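Some back-of-the-envelope arithmetic shows why simulation matters for data efficiency. The numbers below are hypothetical:

- a training budget of 100 million control steps;
- a 50 Hz control rate on a single physical robot;
- an aggregate throughput of 100,000 steps per second for a batch of parallel simulated robots.

```python
def hours_needed(total_steps: int, steps_per_second: float) -> float:
    """Wall-clock hours to collect `total_steps` of experience at a given rate."""
    return total_steps / steps_per_second / 3600.0


TRAINING_STEPS = 100_000_000  # hypothetical training budget, in control steps

real_robot_hours = hours_needed(TRAINING_STEPS, 50)       # one robot at 50 Hz
simulation_hours = hours_needed(TRAINING_STEPS, 100_000)  # hypothetical parallel-sim throughput

print(f"real robot: {real_robot_hours:.0f} h (~{real_robot_hours / 24:.0f} days)")
print(f"simulation: {simulation_hours * 60:.1f} min")
```

Under these assumptions, the physical robot would need about 556 hours (roughly 23 days) of uninterrupted operation. The simulator would finish in under 17 minutes, before any time is spent closing the sim-to-real gap.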

2. Robustness and Safety

End-to-end learning can sometimes result in unpredictable or unsafe behaviors. Since the robot learns through trial and error, there is always the risk of the robot attempting dangerous or damaging actions during the learning process. Developing robust safety mechanisms is critical to ensure that humanoid robots can function safely in real-world environments.
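One common way to reduce risk is to place a simple, hand-written safety layer between the learned policy and the motors. The sketch below assumes the policy outputs joint-position targets. It limits how far each joint may move per control step and clamps every target to the joint's mechanical range. The function name and parameters are hypothetical. A real safety system would also monitor torques, contacts, and falls.

```python
from typing import List, Sequence


def safety_filter(proposed: Sequence[float], previous: Sequence[float],
                  lower: Sequence[float], upper: Sequence[float],
                  max_step: float) -> List[float]:
    """Clamp a learned policy's joint-position targets before they reach the motors."""
    safe = []
    for target, prev, lo, hi in zip(proposed, previous, lower, upper):
        # 1) Limit how far each joint may move in one control step.
        target = min(max(target, prev - max_step), prev + max_step)
        # 2) Never command a position outside the joint's mechanical range.
        target = min(max(target, lo), hi)
        safe.append(target)
    return safe


previous = [0.0, 0.95]
proposed = [2.0, 1.5]  # e.g. an erratic output early in training
safe = safety_filter(proposed, previous, lower=[-1.0, -1.0], upper=[1.0, 1.0], max_step=0.1)
```

Because this layer is not learned, it behaves predictably even when the policy does not. The trade-off is that overly tight limits can stop the policy from discovering useful fast motions.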

3. Generalization to New Tasks

While end-to-end learning can help a robot perform a wide variety of tasks, transferring learned behaviors from one task to another remains a significant challenge. Unlike humans, who can easily generalize learned behaviors, robots often need extensive retraining when presented with slightly different conditions or tasks. This limitation highlights the need for further research in generalization techniques.

4. Computational Resources

Training deep learning models, especially for humanoid robots with complex movements, requires significant computational resources. High-end GPUs and distributed computing systems are often needed to process the large amounts of data required to train these systems effectively.

Future of End-to-End Motion Learning in Humanoid Control

Advancements in Reinforcement Learning

The future of end-to-end motion learning for humanoid robots will likely be driven by advances in reinforcement learning. As RL algorithms become more sample-efficient, we can expect humanoid robots to learn new tasks faster and with fewer trials. Furthermore, techniques such as meta-learning and transfer learning could allow robots to generalize their learning across tasks more effectively.

Integration with Soft Robotics

The development of soft robots, which are more flexible and adaptable than traditional rigid robots, could also benefit from end-to-end motion learning. Compliant bodies are hard to model analytically, which makes hand-designed controllers difficult to build. Learning control directly from sensory data sidesteps the need for an accurate model. Combining the two could yield more versatile humanoid robots that adapt to a wider variety of tasks, including delicate handling and interaction with complex environments.

Collaborative Robotics and Swarming

End-to-end motion learning could also play a pivotal role in collaborative robotics, where multiple robots work together to complete a task. By learning from each other’s experiences and sharing learned behaviors, humanoid robots could operate in teams to perform complex, large-scale tasks like disaster relief or manufacturing assembly lines.
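One way robots could share learned behaviors is to merge their policy parameters, in the spirit of federated averaging. The sketch below rests on several illustrative assumptions, not a description of any deployed system:

- every robot uses the same network architecture and started from the same initialization;
- each robot flattens its weights into a list;
- each robot's contribution is weighted by how much experience it collected, for example episodes.

```python
from typing import List, Sequence


def average_policies(param_sets: Sequence[Sequence[float]],
                     experience: Sequence[int]) -> List[float]:
    """Experience-weighted average of flat parameter vectors (FedAvg-style merge)."""
    total = sum(experience)
    if total <= 0:
        raise ValueError("need at least one robot with experience")
    n = len(param_sets[0])
    merged = [0.0] * n
    for params, count in zip(param_sets, experience):
        if len(params) != n:
            raise ValueError("all robots must share the same parameter layout")
        weight = count / total  # robots with more experience count more
        for i, p in enumerate(params):
            merged[i] += weight * p
    return merged


# Two hypothetical robots; the second collected three times as much experience.
merged = average_policies([[1.0, 2.0], [3.0, 6.0]], experience=[1, 3])
```

Averaging weights only makes sense when the policies are closely related. Independently trained networks can encode the same behavior with very different parameters.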

Ethical Considerations and Regulation

As humanoid robots become more capable, ethical questions around their use and regulation will become even more pressing. Issues of safety, privacy, labor displacement, and accountability will require careful thought as robots take on more roles in human-centric environments.

Conclusion

End-to-end motion learning in humanoid control is a transformative development in robotics that could change how robots interact with the world. It reduces the need for handcrafted programming and lets robots learn from their own experience. This opens new possibilities for autonomous robots that can perform a wide range of tasks in dynamic environments.

While challenges remain, the potential benefits are substantial: greater adaptability, more natural interaction, and, once the safety challenges are addressed, safer and more efficient robots. As the technology advances and new methods are developed, end-to-end motion learning is likely to shape the future of humanoid robots and AI.