In robotics and artificial intelligence, one question has sparked debate among engineers, scientists, and technologists alike: Does the Figure Helix model simplify real‑world robot control? The question is both technical and philosophical, because it asks what it takes to build robots that operate with precision and also with adaptability, autonomy, and human‑level fluency. Answering it requires a structured look at the challenges of robot control, the limitations of earlier approaches, and the way the Helix architecture, developed by Figure AI, rethinks how physical agents perceive, reason, and act in the messy complexity of human environments.

This article examines Helix piece by piece and asks whether it simplifies robot control in a meaningful way: by reducing complexity for developers, improving adaptability in real‑world tasks, and enabling a combination of dexterity and generalization that earlier systems did not achieve. Along the way, we’ll explore its technical innovations, real‑world demonstrations, limitations, and implications for the future of automation.

1. The Challenge of Real‑World Robot Control

When engineers speak of robot control, they refer to a pipeline that starts with sensory perception — what the robot sees — continues through decision making — how it chooses what to do — and ends in motion execution — how it actually moves joints, fingers, and limbs. Each of these stages by itself is deeply complex.

In traditional robotics, control is often divided into layers:

- Perception systems that interpret sensor data (vision, lidar, touch).

- Motion planners that calculate joint trajectories.

- Task‑specific controllers derived from precise kinematic models and handcrafted rule sets.

This bottom‑up approach requires huge amounts of expert engineering, including precise modeling of kinematics and dynamics, careful calibration of sensors, and task‑specific programming that rarely generalizes beyond narrow use cases. Even for a single task like picking up a cup, developers have to build dedicated models or classical algorithms to sense the object, plan the grasp, and execute the motion. Scaling this to 10,000 different objects or tasks multiplies the complexity and manual effort accordingly.

This fragmentation, with separate systems for vision, planning, and action, is why robots have struggled for decades to operate outside controlled industrial environments.
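The layered pipeline described in this section can be sketched in a few lines. This is a deliberately tiny illustration, not any real robot stack: the function names, the fake sensor frame, and the hand-tuned `GRASP_WIDTHS` table are all invented here to show where the manual effort accumulates.

```python
from dataclasses import dataclass


@dataclass
class Grasp:
    x: float
    y: float
    z: float
    width: float  # gripper opening in metres


# Hand-tuned, per-object grasp widths: the kind of table that must grow
# with every new object a classical pipeline is asked to handle.
GRASP_WIDTHS = {"cup": 0.08, "bottle": 0.07}


def perceive(frame: dict, target: str) -> tuple:
    """Stage 1: return the (x, y, z) position of a detected object."""
    if target not in frame:
        raise LookupError(f"{target!r} not detected")
    return frame[target]


def plan_grasp(target: str, position: tuple) -> Grasp:
    """Stage 2: choose a grasp from the handcrafted table."""
    if target not in GRASP_WIDTHS:
        raise KeyError(f"no grasp rule for {target!r}")
    x, y, z = position
    return Grasp(x, y, z, GRASP_WIDTHS[target])


def execute(grasp: Grasp, steps: int = 4) -> list:
    """Stage 3: interpolate a straight-line approach from the origin."""
    return [
        (grasp.x * i / steps, grasp.y * i / steps, grasp.z * i / steps)
        for i in range(1, steps + 1)
    ]


def pick(frame: dict, target: str) -> list:
    """Chain the three stages; any stage can fail on an unanticipated input."""
    return execute(plan_grasp(target, perceive(frame, target)))
```

In this toy, an object such as a stapler can be perfectly visible in the frame and `pick` will still fail with a `KeyError`, because nobody wrote a grasp rule for it. That is the generalization gap the rest of the article is concerned with.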

2. Vision‑Language‑Action Models: A New Paradigm

The Helix model represents a fundamentally different approach: a Vision‑Language‑Action (VLA) model that unifies perception, natural language interpretation, and continuous motion control in a single end‑to‑end learning system. The idea isn’t just to build better controllers, but to collapse layers that historically were siloed — essentially creating a unified “brain” for robots.

A VLA model integrates three streams:

- Vision: The robot observes the world, captures visual inputs from cameras and sensors.

- Language: Natural language instructions or context reasoning are fused with visual understanding.

- Action: The model directly outputs continuous control commands to move motors, actuators, and joints.

By merging these components, VLA systems can potentially avoid the traditional disconnect between high‑level reasoning and low‑level control — allowing robots to respond to human instructions and adapt in real time.
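As a rough illustration of the interface this implies, the sketch below types a VLA policy as a single call that takes a camera frame plus an instruction and returns a vector of joint targets. Every name here (`Observation`, `VLAPolicy`, `ZeroPolicy`, `ACTION_DIM`) is hypothetical and is not Figure’s API; the stand-in policy returns zeros only so that the contract can be exercised.

```python
from dataclasses import dataclass
from typing import Protocol, Sequence

# Assumption: one target per controlled degree of freedom, using the
# 35-DoF figure this article quotes for Helix.
ACTION_DIM = 35


@dataclass(frozen=True)
class Observation:
    image: Sequence[Sequence[float]]  # vision: a (tiny) grayscale frame
    instruction: str                  # language: the natural-language prompt


class VLAPolicy(Protocol):
    def act(self, obs: Observation) -> list[float]:
        """Action: map one observation to continuous joint targets."""
        ...


class ZeroPolicy:
    """Placeholder with the right shape; a real policy would be a trained network."""

    def act(self, obs: Observation) -> list[float]:
        if not obs.instruction:
            raise ValueError("an instruction is required")
        return [0.0] * ACTION_DIM


def step(policy: VLAPolicy, obs: Observation) -> list[float]:
    # No separate perception, planning, or controller stage sits in between.
    return policy.act(obs)
```

The point of the sketch is the shape of the boundary: one input type, one output type, and nothing handcrafted in the middle.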

Helix, developed by Figure AI, is a prominent new entrant in this category. Figure presents it as the first VLA to demonstrate high‑rate continuous control of a high‑dimensional humanoid upper body.

3. What Makes Helix Unique?

At first glance, Helix may look like another AI model. But several features distinguish it in the world of robot control:

3.1. Unified End‑to‑End Learning

Unlike systems that train separate components for perception, planning, and control, Helix learns a shared representation that connects what the robot sees to how it moves.

Through training on around 500 hours of teleoperated robot behavior paired with natural language labeling, Helix learns to take raw sensory input, internalize semantic meaning, and emit continuous motor control signals that drive real actions — all without task‑specific fine‑tuning.

This end‑to‑end training simplifies the robot control pipeline — eliminating many intermediate manual engineering steps.
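The training setup described above is, in essence, supervised imitation of the teleoperator. One common way to write such an objective is a squared-error regression loss, shown here as an illustrative assumption rather than Figure’s published formulation:

$$
\min_{\theta}\; \mathbb{E}_{(o_t,\,\ell,\,a_t)\sim\mathcal{D}} \left[ \left\lVert \pi_\theta(o_t, \ell) - a_t \right\rVert_2^2 \right]
$$

Here $\mathcal{D}$ is the set of teleoperated demonstrations, $o_t$ is the sensory input at time $t$, $\ell$ is the natural language label attached to the demonstration, $a_t$ is the action the operator produced, and $\pi_\theta$ is the policy with parameters $\theta$. Nothing in the objective is specific to a task, which is why no per-task controller has to be written.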

3.2. Dual System Architecture: Fast & Smart

Helix’s architecture is inspired by human cognitive systems: a two‑part model where:

- System 2 handles higher‑level reasoning and language interpretation at lower frequency (about 7–9 Hz).

- System 1 executes real‑time continuous control of the robot at very high frequency (~200 Hz).

This separation mirrors a common account of human cognition: slow, deliberate planning interleaved with rapid, reflex‑like execution. For robots, it means the fast controller can keep reacting at full rate while the slower reasoning catches up, which narrows the gap between deciding and acting that has long troubled robot control.
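A minimal sketch of how two rates can be decoupled, under simplifying assumptions: a single-threaded simulated clock, an 8 Hz slow loop (picked from the 7–9 Hz range because it divides 200 evenly), and two stand-in functions, `slow_reasoner` and `fast_controller`, that are hypothetical and not Helix components. A real deployment would more plausibly run the two loops concurrently.

```python
FAST_HZ = 200   # System 1 control rate quoted above
SLOW_HZ = 8     # assumption: a value inside the quoted 7-9 Hz range
TICKS_PER_SLOW_UPDATE = FAST_HZ // SLOW_HZ  # 25 fast ticks per slow update


def slow_reasoner(tick: int, instruction: str) -> dict:
    """Stand-in for System 2: produce a coarse goal from the instruction."""
    return {"goal": instruction, "computed_at_tick": tick}


def fast_controller(tick: int, latent: dict) -> tuple:
    """Stand-in for System 1: emit a command conditioned on the latest goal."""
    staleness = tick - latent["computed_at_tick"]
    return (tick, latent["goal"], staleness)


def run(seconds: int, instruction: str) -> tuple:
    """Simulate the loop; return (number of slow updates, list of commands)."""
    latent = None
    slow_updates = 0
    commands = []
    for tick in range(seconds * FAST_HZ):
        # The slow system refreshes its output only every 25th tick.
        if tick % TICKS_PER_SLOW_UPDATE == 0:
            latent = slow_reasoner(tick, instruction)
            slow_updates += 1
        # The fast system never waits: it reuses the most recent slow output.
        commands.append(fast_controller(tick, latent))
    return slow_updates, commands
```

With these numbers, one simulated second produces 8 slow updates and 200 commands, and each slow output is reused for 25 consecutive control ticks, so the controller acts on a goal that is at most 24 ticks (120 ms) old.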

3.3. Full Upper‑Body Control

Helix is among the first VLA models to demonstrate continuous, high‑frequency control of an entire humanoid’s upper body — including arms, torso, head, and individual fingers. This 35‑degree‑of‑freedom manipulation allows robots to perform nuanced, human‑like tasks far beyond the limited movements typical of earlier systems.

3.4. Generalization Without Fine‑Tuning

One of the most striking claims about Helix is its ability to pick up objects it has never seen before — just by understanding the language prompt and vision input. It does not require separate training datasets for each new object or task.

This level of generalization directly addresses a core challenge in traditional robotics: brittle performance outside of known scenarios.

3.5. Multi‑Robot Collaboration

Helix has also been shown coordinating two robots at once using the same neural network weights. This enables collaborative tasks that involve teamwork, synchronized manipulation, and shared objectives, which would be exceptionally complex to build with classical control architectures.
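The idea of one set of weights driving several robots can be illustrated with a toy in which a single policy object is shared and each robot differs only in its own observation and prompt. The `SharedPolicy` class, its three-number weight list, and the scoring rule are invented for illustration and say nothing about how Helix works internally.

```python
class SharedPolicy:
    """Toy policy: one weight vector, reused unchanged by every robot."""

    def __init__(self, weights: list):
        self.weights = tuple(weights)  # immutable: no per-robot copies or tuning

    def act(self, observation: list, prompt: str) -> float:
        # Toy rule: weighted sum of the observation, scaled by prompt word count.
        score = sum(w * x for w, x in zip(self.weights, observation))
        return score * len(prompt.split())


class Robot:
    def __init__(self, name: str, policy: SharedPolicy):
        self.name = name
        self.policy = policy  # a reference to the shared object, not a clone

    def step(self, observation: list, prompt: str) -> float:
        return self.policy.act(observation, prompt)


policy = SharedPolicy([1.0, 2.0, 3.0])
left = Robot("left", policy)
right = Robot("right", policy)
```

Because `left.policy is right.policy`, any difference in behavior between the two robots comes from what each one sees and is told, not from separately trained controllers.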

4. Does Helix Truly “Simplify” Robot Control?

At this point, we can unpack this question more precisely:

- Does Helix reduce developer burden?

- Does it make robots more adaptable to real world environments?

- Does it actually simplify the complexity of control algorithms in practice?

4.1. Reduction of Manual Engineering

Yes — Helix significantly lowers the amount of manual, task‑specific engineering required. Where earlier robot systems demanded separate vision, planning, state estimation, and motion control modules, Helix consolidates them into one learned policy. This shift means less handcrafted code and less engineering time to integrate disparate modules.

Most importantly, Helix frames robot behavior as a single learned function mapping visuo‑lingual context to motor actions, reducing the need for handcrafted interface layers.

Essentially, developers can now treat robot control more like end‑to‑end learning in modern AI, which is simpler in concept and demands less manual feature engineering than classical robotics pipelines.

4.2. Real World Adaptability

In real world environments — where objects vary in shape, texture, and context — Helix demonstrates a capability that classical systems struggle to match: zero‑shot generalization.

When a human instructs a Helix‑equipped robot to “pick up the red cup and place it on the table,” the robot can interpret the prompt, visually locate the object, and execute the motion — without having seen that exact scenario before.

This adaptability is a form of simplification: instead of exhaustive task replication or static programming, the system learns behaviors that generalize.

4.3. Complexity in Model Training

However, it is important to clarify that Helix does not eliminate complexity entirely. The model itself is complex — involving large neural networks and a sophisticated architecture that would be challenging to design and train. The simplification is mostly on the user end: once Helix is trained, developers can leverage it without hand‑crafting controllers for every new scenario.

In other words, Helix shifts complexity from controllers coded by humans to policies learned from data. While this simplifies integration and deployment, it demands high‑quality data and careful model training, a different kind of complexity inherent in modern AI systems.

5. Technical & Practical Limitations

While Helix represents a major leap forward, it is not without limits.

5.1. Data Requirements

Training an end‑to‑end model capable of capturing visuo‑lingual relationships and motion dynamics requires large amounts of robot behavior data — in this case, hundreds of hours of teleoperated demonstrations. Collecting such datasets can be expensive and time consuming.

Moreover, the quality of training data — including diverse examples of objects, environments, and tasks — heavily influences generalization performance.

5.2. Hardware Constraints

Despite claims of running on embedded low‑power GPUs, real‑time continuous control at 200 Hz across many degrees of freedom still demands robust hardware. Developers cannot assume that Helix frees robots from hardware limits.
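To make the hardware demand concrete, the arithmetic below works out the per-step time budget and command throughput implied by the figures quoted in this article (200 Hz control, 35 degrees of freedom). The function name and dictionary keys are illustrative only.

```python
def control_budget(rate_hz: int, dof: int) -> dict:
    """Derive the timing budget implied by a control rate and action size."""
    return {
        "step_budget_ms": 1000 / rate_hz,      # time available per control step
        "joint_targets_per_s": rate_hz * dof,  # scalar commands emitted per second
    }


budget = control_budget(rate_hz=200, dof=35)
print(budget)  # {'step_budget_ms': 5.0, 'joint_targets_per_s': 7000}
```

If the controller is evaluated once per step, everything it does for that step has to fit in roughly 5 ms, which is a real constraint on embedded hardware regardless of how the policy was obtained.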

In some environments, sensor noise, unexpected physical interactions, or hardware wear can still challenge the model, requiring fallback safety systems or deeper control tuning.

5.3. Interpretability and Debugging

One of the broader challenges with end‑to‑end learned systems is interpretability. When a robot does something unexpected, tracing back to the failure mode is nontrivial compared to rule‑based or modular controllers, which can be debugged step by step.

This opacity — a classic problem in deep learning — could complicate safety validations, certification, and deployment in critical environments.

5.4. Safety and Edge Cases

Generalization does not guarantee correctness in all edge cases. A robot using Helix might misinterpret ambiguous language inputs or respond poorly to unusual scenarios not represented in training data. This potential unpredictability underscores the need for hybrid safety systems that combine learned policies with robust fallback controls.
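One way such a hybrid arrangement might look is a thin, rule-based guard placed between the learned policy and the motors. The sketch below is an illustration built on assumptions, not a description of Figure’s safety stack: the `guard` function, the limits passed to it, and the hold-position fallback are all hypothetical.

```python
import math


def guard(proposed, current, lower, upper, max_step):
    """Filter a learned policy's joint targets before they reach the motors.

    Returns (targets, used_fallback). Falls back to holding the current
    pose if the proposal is malformed; otherwise rate-limits and clamps it.
    """
    malformed = len(proposed) != len(current) or any(
        not math.isfinite(p) for p in proposed
    )
    if malformed:
        return list(current), True  # fallback: hold position

    safe = []
    for p, c, lo, hi in zip(proposed, current, lower, upper):
        delta = max(-max_step, min(max_step, p - c))  # limit change per step
        safe.append(max(lo, min(hi, c + delta)))      # respect joint limits
    return safe, False
```

The guard is deliberately simple and inspectable. It cannot tell whether the policy understood the instruction, but it can bound how far and how fast any joint moves in a single step, which is the kind of property that is easier to verify in rule-based code than in a neural network.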

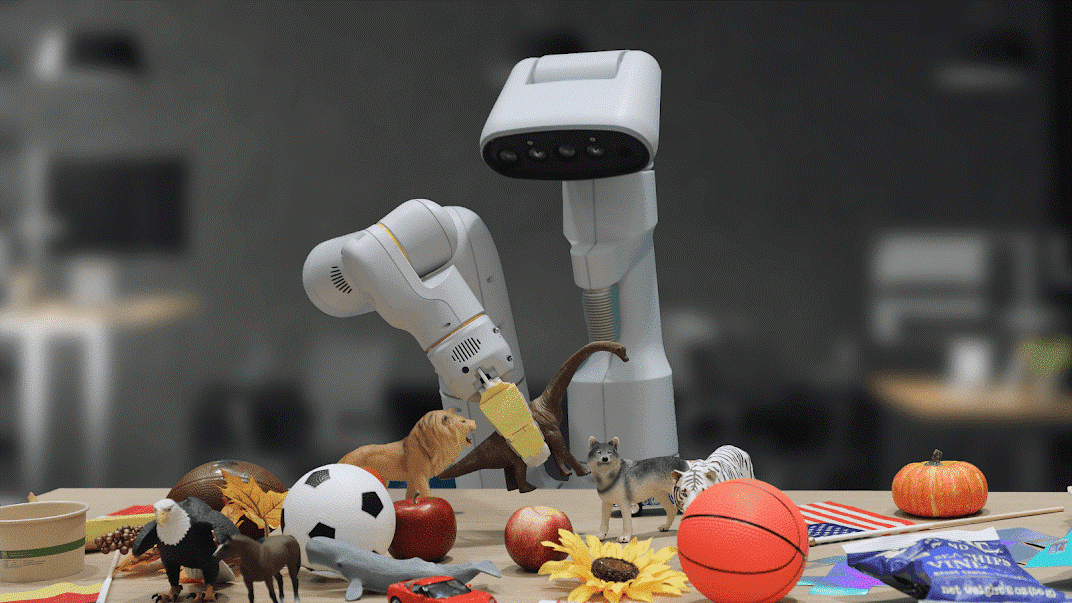

6. Real‑World Demonstrations

Real‑world demonstrations validate a robot control system better than theory does.

Across multiple demonstrations reported by Figure and independent outlets:

- Helix‑equipped robots have collaborated to pick up, sort, and store household items — including objects they had never encountered before.

- Dual robots cooperated in long‑duration tasks, managing things like grocery sorting with natural language instructions.

- The architecture was shown to control every upper‑body joint, enabling nuanced movements such as grasping, reaching, and coordinating head orientation as part of task execution.

These demonstrations are more than proofs of concept — they illustrate higher‑order adaptability and behavioral generalization in environments more complex than flat industrial floors.

Notably, Helix shows capabilities that would have required tremendous engineering effort to reproduce with traditional modular controllers.

7. Helix as a Paradigm Shift

By consolidating perception, language, and action into a single learned policy, Helix signals a paradigm shift in robot control.

Rather than thinking in terms of controllers, planners, and separate vision stacks, developers and researchers can now explore systems that learn control from multimodal experience. This mirrors trends in other areas of AI, from autonomous vehicles to natural language understanding, where end‑to‑end learned models have increasingly replaced or complemented handcrafted pipelines.

Additionally, Helix’s multi‑robot demonstrations suggest that a single policy can coordinate more than one robot toward a shared goal, opening paths to cooperative manipulation and complex task execution once considered out of reach.

8. Looking Ahead: Robot Control Simplified?

So, does the Figure Helix model simplify real‑world robot control? The answer is nuanced, but fundamentally yes — in how it changes the developer experience, avoids task‑specific programming, and enables more general, adaptable robot behavior.

By shifting the locus of complexity from manual code to learned models, Helix reduces the cost and time required to deploy robots in real‑world scenarios. It also demonstrates that robots can be controlled with natural language instructions and real‑time continuous actions that generalize beyond predefined task lists.

However, this simplicity is not magic: it emerges from decades of AI research, demands high‑quality data, depends on powerful models, and involves new complexity in model design and training. The future of robot control will likely blend such learned policies with hybrid safety systems, data‑efficient training, and robust benchmarking to deliver fully reliable agents across industries.