In the fast‑paced world of robotics, one question keeps both engineers and dreamers up at night: Can a humanoid robot learn everything it needs inside a computer and then walk out into the real world and work effectively? At first glance, this may sound like science fiction. But thanks to rapid advancements in simulation‑based training, reinforcement learning, and transfer learning, this dream is edging closer to reality — not only conceptually but demonstrably. From robots that learn to grasp objects in virtual environments to full humanoid agents that execute complex bipedal locomotion without real‑world training, the field is booming with innovation and robust scientific inquiry.

The Allure of Simulation Training

Training robots in the physical world is expensive, risky, slow, and often impractical. Hardware wears out, environments are unpredictable, and accidents are costly. Simulation offers an appealing alternative: instant resets, massively parallel training, and no risk to real hardware. In a simulated world, a learning algorithm can gather millions of trials in the time it would take a real robot to fall over once.
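The speed advantage described above comes largely from batching: many copies of an environment are advanced with one vectorized update, and each copy resets the moment its episode ends. The sketch below illustrates the idea with NumPy under heavy assumptions. `BatchedPointMassEnv`, its 1‑D point‑mass task, and the PD controller standing in for a learned policy are all hypothetical and not taken from any particular simulator.

```python
import numpy as np


class BatchedPointMassEnv:
    """Hypothetical toy task: many 1-D point masses, each trying to reach position 0.

    All copies are advanced together with vectorized NumPy operations, and any
    copy that succeeds or times out is reset immediately and independently.
    """

    def __init__(self, num_envs, dt=0.05, max_steps=200, seed=0):
        self.dt = dt
        self.max_steps = max_steps
        self.rng = np.random.default_rng(seed)
        self.pos = np.zeros(num_envs)
        self.vel = np.zeros(num_envs)
        self.t = np.zeros(num_envs, dtype=int)
        self.reset(np.ones(num_envs, dtype=bool))

    def reset(self, mask):
        """Re-initialize only the copies selected by the boolean mask."""
        self.pos[mask] = self.rng.uniform(-1.0, 1.0, size=int(mask.sum()))
        self.vel[mask] = 0.0
        self.t[mask] = 0

    def step(self, force):
        """Advance every copy by one time step in a single vectorized update."""
        self.vel += force * self.dt
        self.pos += self.vel * self.dt
        self.t += 1
        reward = -np.abs(self.pos)
        done = (np.abs(self.pos) < 0.01) | (self.t >= self.max_steps)
        self.reset(done)  # instant per-copy reset; nobody has to reposition hardware
        return self.pos.copy(), reward, done


# Usage: 1,024 environments x 500 steps = 512,000 transitions from one short loop.
env = BatchedPointMassEnv(num_envs=1024)
total_transitions = 0
for _ in range(500):
    action = -2.0 * env.pos - 1.5 * env.vel  # PD controller standing in for a policy
    obs, reward, done = env.step(action)
    total_transitions += obs.size
```

For brevity, the observation returned for a finished copy already belongs to its new episode; a training loop built on this sketch would need to account for that when computing returns.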

Yet for all its advantages, simulation comes with a well‑known limitation: the “reality gap.” This is the difference between how a system behaves in an idealized virtual environment and how it behaves in the messy, imperfect real world. The closer the simulation is to reality, the easier it should be for learned behaviors to transfer, but perfect accuracy is extraordinarily difficult to achieve, especially for complex effects such as contact physics, friction, sensor noise, and small delays in actuation.
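Two of the effects named above, sensor noise and actuation delay, are commonly injected into simulators on purpose so that a policy does not learn to rely on perfect signals. Here is a minimal sketch, assuming Gaussian noise with a fixed per‑episode bias and a constant delay measured in control steps. The class `SensorActuatorModel` and all default values are hypothetical.

```python
from collections import deque

import numpy as np


class SensorActuatorModel:
    """Hypothetical wrapper adding imperfections that an idealized simulator omits.

    - observe(): true state + fixed per-episode bias + fresh Gaussian noise.
    - actuate(): each command takes effect `delay_steps` control steps later.
    """

    def __init__(self, obs_dim, act_dim, noise_std=0.01, bias_std=0.005,
                 delay_steps=2, seed=0):
        self.rng = np.random.default_rng(seed)
        self.noise_std = noise_std
        # Bias is drawn once, mimicking a miscalibrated sensor.
        self.bias = self.rng.normal(0.0, bias_std, size=obs_dim)
        # Pre-fill with zero commands so the first real command is delayed.
        self.queue = deque(np.zeros(act_dim) for _ in range(delay_steps))

    def observe(self, true_obs):
        true_obs = np.asarray(true_obs, dtype=float)
        noise = self.rng.normal(0.0, self.noise_std, size=true_obs.shape)
        return true_obs + self.bias + noise

    def actuate(self, command):
        """Queue the new command and return the one issued `delay_steps` steps ago."""
        self.queue.append(np.asarray(command, dtype=float))
        return self.queue.popleft()


# Usage: with a 2-step delay, commands 1, 2, 3, ... reach the motors two steps late.
model = SensorActuatorModel(obs_dim=3, act_dim=1, delay_steps=2)
applied = [float(model.actuate([c])[0]) for c in (1.0, 2.0, 3.0, 4.0, 5.0)]
# applied == [0.0, 0.0, 1.0, 2.0, 3.0]
```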

Sim‑to‑Real: The Core Challenge

The heart of this question lies in the Sim‑to‑Real gap: the challenge of training a policy, or control strategy, in simulation that works just as well when deployed on a physical robot. Robotics researchers have identified several sources of this gap:

- Physics discrepancies: Simulators approximate complex physics, but even small errors can produce large differences in actual behavior.

- Sensor fidelity: Reproducing realistic sensor behavior, including the noise in vision, proprioception, and force feedback, is difficult and rarely perfect.

- Environment complexity: Real environments are filled with uncertainty — lighting changes, deformable objects, unexpected obstacles — that simulators cannot fully predict.
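The physics‑discrepancy point can be made concrete with a deliberately simple experiment: compute the torque that holds a simulated pendulum still, then apply the same open‑loop torque to a "real" pendulum whose mass is 5% higher. This is a minimal sketch under assumed numbers (1 kg point mass, 1 m link, 0.2 rad from upright), and `final_angle` is a hypothetical helper. Because the near‑upright pendulum is unstable, the small modeling error grows instead of averaging out. Feedback control reduces this sensitivity but does not eliminate it.

```python
import math


def final_angle(mass, length, torque, theta0=0.2, dt=0.001, steps=2000, g=9.81):
    """Semi-implicit Euler integration of a point-mass pendulum for steps * dt seconds.

    theta is measured from the upright position; gravity tips the pendulum
    away from upright and a constant motor torque pushes it back.
    """
    theta, omega = theta0, 0.0
    inertia = mass * length ** 2
    for _ in range(steps):
        alpha = (mass * g * length * math.sin(theta) - torque) / inertia
        omega += alpha * dt
        theta += omega * dt
    return theta


# Torque that exactly holds the *simulated* pendulum (1.0 kg, 1.0 m) at 0.2 rad.
hold_torque = 1.0 * 9.81 * 1.0 * math.sin(0.2)

sim_theta = final_angle(mass=1.0, length=1.0, torque=hold_torque)
real_theta = final_angle(mass=1.05, length=1.0, torque=hold_torque)  # 5% heavier link
print(f"after 2 s  sim: {sim_theta:.3f} rad   real: {real_theta:.3f} rad")
```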

Despite these challenges, research is progressing fast.

Techniques to Bridge Sim and Reality

1. Domain Randomization

One widely used tactic is domain randomization, where the simulator intentionally varies environmental parameters (textures, object positions, lighting, friction coefficients, and more), resampling them across thousands of training episodes. The idea is simple: if the agent experiences enough variability, it will learn robust strategies that generalize to unforeseen real‑world conditions.

This method has been shown to improve transfer in tasks as diverse as reach‑to‑grasp and visual perception. In one study, policies trained on domain‑randomized simulated data reached for objects in the real world with higher accuracy than policies trained without such variability.
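The core loop of domain randomization is short: before each training episode, draw a fresh set of simulator parameters. A minimal sketch follows. The parameter names, their ranges, and the `run_episode` callback are assumptions standing in for a real simulator and learning algorithm. Uniform sampling is the simplest choice; other distributions, or ranges that widen gradually during training, are also used in practice.

```python
import numpy as np

# Hypothetical ranges; real projects tune these for each robot and task.
RANDOMIZATION_RANGES = {
    "friction": (0.5, 1.25),
    "mass_scale": (0.9, 1.1),
    "motor_strength": (0.8, 1.2),
    "light_intensity": (0.3, 1.0),
}


def sample_episode_params(rng, ranges=RANDOMIZATION_RANGES):
    """Draw one value per parameter, uniformly within its [low, high) range."""
    return {name: float(rng.uniform(low, high)) for name, (low, high) in ranges.items()}


def train_with_domain_randomization(num_episodes, run_episode, seed=0):
    """Outer training loop: every episode runs in a freshly randomized world.

    `run_episode(params)` is a placeholder for code that configures the
    simulator with `params`, rolls out the current policy, and updates it.
    """
    rng = np.random.default_rng(seed)
    results = []
    for _ in range(num_episodes):
        params = sample_episode_params(rng)
        results.append(run_episode(params))
    return results


# Usage with a dummy episode function that just reports the friction it saw.
frictions = train_with_domain_randomization(1000, lambda params: params["friction"])
```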

2. Multi‑Sim & Cross‑Sim Training

Simulation fidelity varies across engines such as MuJoCo, Isaac Gym, and proprietary platforms. To avoid overfitting to the modeling assumptions of any one simulator, some researchers train controllers across multiple simulators simultaneously, a technique sometimes referred to as multi‑sim training. The hypothesis is that a policy that performs well under the dynamics of several distinct physics engines is more likely to generalize to the physical world.
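Multi‑sim training can be illustrated without a real physics engine: define two toy "engines" that make different modeling choices (integration scheme and friction model), then score each candidate controller by its worst result across both. In this sketch a simple random search over PD gains stands in for reinforcement learning, and every function, constant, and range is a hypothetical choice made for illustration.

```python
import numpy as np


def engine_a(pos, vel, force, dt):
    """Toy engine A: semi-implicit Euler with viscous damping."""
    vel = vel + (force - 0.5 * vel) * dt
    return pos + vel * dt, vel


def engine_b(pos, vel, force, dt):
    """Toy engine B: explicit Euler with Coulomb-style friction."""
    acc = force - 0.3 * np.sign(vel)
    return pos + vel * dt, vel + acc * dt


ENGINES = (engine_a, engine_b)


def rollout_cost(gains, engine, steps=200, dt=0.02):
    """Integrated squared position error while a PD controller drives the mass to 0."""
    kp, kd = gains
    pos, vel, cost = 1.0, 0.0, 0.0
    for _ in range(steps):
        force = -kp * pos - kd * vel
        pos, vel = engine(pos, vel, force, dt)
        cost += pos ** 2 * dt
    return cost


def multi_sim_objective(gains):
    """Score a controller by its worst performance over all engines."""
    return max(rollout_cost(gains, engine) for engine in ENGINES)


def random_search(num_candidates=100, seed=0):
    """Gradient-free stand-in for RL: keep the gains with the best multi-sim score."""
    rng = np.random.default_rng(seed)
    best_gains, best_cost = None, np.inf
    for _ in range(num_candidates):
        gains = rng.uniform([0.5, 0.1], [20.0, 10.0])
        cost = multi_sim_objective(gains)
        if cost < best_cost:
            best_gains, best_cost = gains, cost
    return best_gains, best_cost


best_gains, best_cost = random_search()
```

Using the worst case rather than the average is one design option; it favors controllers that do not exploit the quirks of either engine.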

3. Real‑to‑Sim and Hybrid Training

A clever twist on the traditional sim‑to‑real pipeline is real‑to‑sim. Here, a small amount of real‑world data is used to calibrate the simulator itself, creating a “digital twin” of the actual robot and its environment. The robot's policy then trains in this calibrated simulation before being deployed back on the physical robot.

This hybrid approach mitigates the reality gap not by perfecting the simulation, but by making it more relevant to the real scenario the robot will face.
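Real‑to‑sim calibration is, at its core, system identification: adjust simulator parameters until simulated trajectories match logged real ones. Here is a minimal sketch, assuming a single unknown parameter (a sliding block's Coulomb friction coefficient), a brute‑force grid search, and a synthetic "real" log generated from a hidden friction value of 0.37 plus measurement noise. All of these, including the helper names, are illustrative assumptions; practical pipelines fit many parameters and often use more sample‑efficient optimizers.

```python
import numpy as np


def simulate_slide(friction, v0=2.0, dt=0.01, steps=100, g=9.81):
    """Block sliding on a surface with Coulomb friction; returns the position trace."""
    pos, vel = 0.0, v0
    trace = []
    for _ in range(steps):
        decel = friction * g if vel > 0 else 0.0
        vel = max(vel - decel * dt, 0.0)  # friction stops the block, never reverses it
        pos += vel * dt
        trace.append(pos)
    return np.array(trace)


def calibrate_friction(real_trace, candidates):
    """Grid search: pick the friction whose simulated trace best matches the log."""
    errors = [np.mean((simulate_slide(mu) - real_trace) ** 2) for mu in candidates]
    return candidates[int(np.argmin(errors))]


# Stand-in for logged hardware data: hidden friction 0.37 plus sensor noise.
rng = np.random.default_rng(0)
real_trace = simulate_slide(0.37) + rng.normal(0.0, 0.002, size=100)
mu_hat = calibrate_friction(real_trace, np.linspace(0.1, 1.0, 91))
print(f"calibrated friction: {mu_hat:.2f}")
```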

4. Human‑in‑the‑Loop Correction

Some frameworks integrate human corrections during early deployment. In such systems, a robot executes its learned policy while a human intervenes only when necessary, offering corrective guidance. A residual policy learned from these corrections is then added on top of the original simulation‑trained policy, improving its real‑world robustness.

This human‑in‑the‑loop adjustment blends autonomous learning with human intuition — a practical middle ground between full autonomy and manual control.
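The residual idea amounts to learning only the correction on top of the simulation‑trained policy: `action = base_policy(obs) + residual(obs)`. In this minimal sketch the base policy, the linear form of the residual, and the logged human corrections are all hypothetical; the corrections are generated from a made‑up "better" linear policy so that the least‑squares fit can be checked. A real system would more likely use a neural network for the residual and train it only on the time steps where the human intervened.

```python
import numpy as np

BASE_WEIGHTS = np.array([[0.8], [-0.2]])  # hypothetical sim-trained linear policy


def base_policy(obs):
    """Stand-in for the policy learned in simulation (kept frozen)."""
    return obs @ BASE_WEIGHTS


def fit_residual(observations, corrected_actions):
    """Least-squares fit of a linear residual so that base + residual ≈ correction."""
    targets = corrected_actions - base_policy(observations)
    weights, *_ = np.linalg.lstsq(observations, targets, rcond=None)
    return weights


def combined_policy(obs, residual_weights):
    """Deployed policy: the frozen base action plus the learned correction."""
    return base_policy(obs) + obs @ residual_weights


# Simulated intervention log: the human consistently steers toward a different policy.
rng = np.random.default_rng(0)
observations = rng.normal(size=(50, 2))
corrected_actions = observations @ np.array([[1.0], [-0.5]])
residual_weights = fit_residual(observations, corrected_actions)
```

Keeping the base policy frozen means the residual only has to capture the sim‑to‑real discrepancy, which is typically a much smaller function than the full behavior.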

Case Studies: From Simulation to Reality

Zero‑Shot Transfer in Humanoid Learning

A key milestone in this domain is zero‑shot sim‑to‑real transfer, where a robot trained solely in simulation performs complex tasks in the real world without any further real‑world training. Projects like Humanoid‑Gym have shown that bipedal locomotion policies learned entirely in virtual environments can transfer successfully to physical humanoid platforms.

Humanoid‑Gym combines reinforcement learning with domain randomization and sim‑to‑sim validation, in which a policy trained in Isaac Gym is tested in a second simulator, MuJoCo, before it ever runs on hardware. With this pipeline it achieves what was once thought highly improbable: walking on real humanoid robots without any real‑world policy refinement.

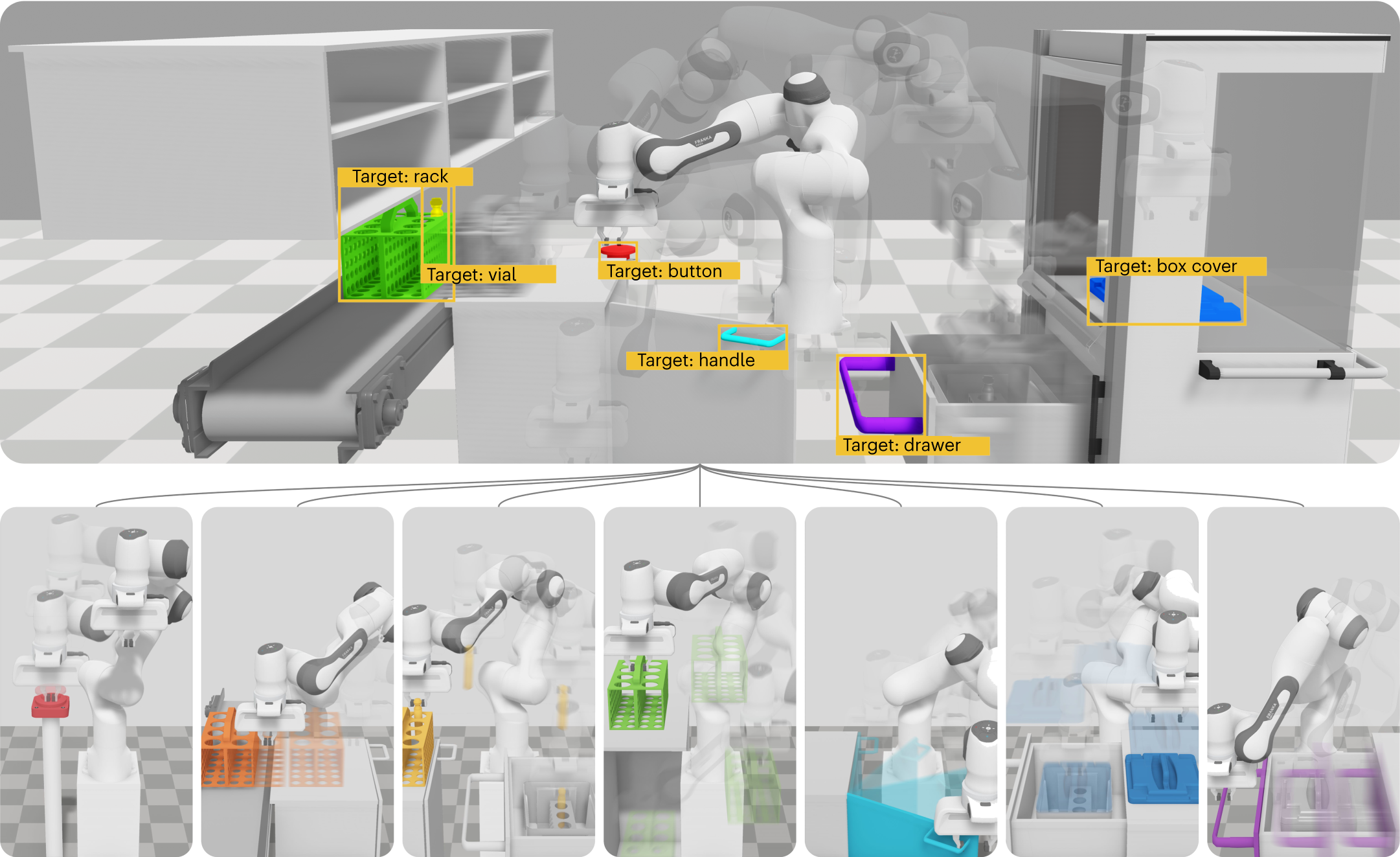
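Sim‑to‑sim validation can be thought of as an acceptance test: a policy trained in one simulator must keep most of its performance in a second, independently implemented simulator before it is allowed on hardware. The sketch below is not Humanoid‑Gym's code. It assumes a toy linearized inverted pendulum, two hand‑written "engines" that differ in integrator and time step, a PD controller in place of a trained policy, and arbitrary thresholds (`min_return`, `tolerance`).

```python
import numpy as np

G_OVER_L = 9.81  # linearized inverted pendulum with a 1 m pole (toy numbers)


def train_sim(state, u, dt=0.01):
    """Toy training engine: semi-implicit Euler."""
    theta, omega = state
    omega = omega + (G_OVER_L * theta + u) * dt
    return np.array([theta + omega * dt, omega])


def heldout_sim(state, u, dt=0.02):
    """Toy held-out engine: explicit Euler with a coarser time step."""
    theta, omega = state
    return np.array([theta + omega * dt, omega + (G_OVER_L * theta + u) * dt])


def evaluate(policy, step_fn, episodes=20, horizon=300, seed=0):
    """Average number of steps survived before the pole tilts past 0.5 rad."""
    rng = np.random.default_rng(seed)
    returns = []
    for _ in range(episodes):
        state = rng.uniform(-0.1, 0.1, size=2)  # [angle, angular velocity]
        survived = 0
        for _ in range(horizon):
            state = step_fn(state, policy(state))
            if abs(state[0]) > 0.5:
                break
            survived += 1
        returns.append(survived)
    return float(np.mean(returns))


def sim_to_sim_gate(policy, min_return=250.0, tolerance=0.1):
    """Approve deployment only if the policy is good in training AND holds up elsewhere."""
    train_ret = evaluate(policy, train_sim)
    heldout_ret = evaluate(policy, heldout_sim)
    passed = train_ret >= min_return and heldout_ret >= (1.0 - tolerance) * train_ret
    return passed, train_ret, heldout_ret


def pd_policy(state):
    """Hand-tuned stand-in for a policy trained in `train_sim`."""
    return -30.0 * state[0] - 8.0 * state[1]


passed, train_ret, heldout_ret = sim_to_sim_gate(pd_policy)
```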

Industrial and Manipulation Tasks

While humanoid locomotion captures the imagination, industrial tasks also benefit from sim‑to‑real training. Methods have been developed that allow robots to assemble parts, manipulate tools, or sort objects in real environments after being trained on virtual replicas of factory scenes. The keys remain systematic exposure to environmental variability and accurate simulation of contact and sensor dynamics.

Why Sim‑to‑Real Still Isn’t Trivial

Despite impressive results, sim‑to‑real transfer is not yet a solved problem, especially for human‑scale humanoids performing fine manipulation or interacting with deformable objects. For example:

- High‑precision contact tasks still rely on real‑world fine‑tuning.

- Sensor characteristics such as camera noise or tactile feedback are difficult to replicate faithfully.

- The computational cost of high‑fidelity simulation can be prohibitive when training across a broad range of tasks.

Researchers often emphasize that current achievements represent milestones, not endpoints — incremental steps toward fully generalist, adaptable robotic systems.

Opportunities and Future Directions

1. Simulation‑as‑a‑Service (SimaaS)

Emerging business models propose simulation infrastructure delivered as a service, enabling developers to train and test robots remotely at scale. This could make sim‑to‑real accessible to more researchers and industries — accelerating innovation.

2. World Models and Prediction

World models are internal predictive representations, learned from data, of how the environment responds to an agent's actions. They are becoming essential components of robust agent intelligence, allowing robots to “imagine” the outcomes of candidate actions and plan accordingly, which reduces reliance on precise physics modeling alone.
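A world model can be as simple as a dynamics model fitted to logged transitions and then queried to "imagine" the consequences of candidate action sequences. Here is a minimal sketch, assuming a toy double‑integrator system, a linear model fitted by least squares, and a random‑shooting planner. Practical world models are usually neural networks, and all names here are hypothetical.

```python
import numpy as np

# "True" dynamics of a toy double integrator (dt = 0.1), unknown to the robot.
A_TRUE = np.array([[1.0, 0.1], [0.0, 1.0]])
B_TRUE = np.array([[0.0], [0.1]])


def real_step(x, u):
    return A_TRUE @ x + B_TRUE @ u


def fit_world_model(states, actions, next_states):
    """Least-squares fit of next_state ≈ [state, action] @ theta."""
    inputs = np.hstack([states, actions])
    theta, *_ = np.linalg.lstsq(inputs, next_states, rcond=None)
    return theta  # shape: (state_dim + action_dim, state_dim)


def imagined_cost(theta, x, action_seq):
    """Roll the learned model forward without touching the real system."""
    cost = 0.0
    for u in action_seq:
        x = np.concatenate([x, u]) @ theta
        cost += float(x @ x)  # penalize distance from the origin
    return cost


def plan(theta, x, rng, horizon=10, num_samples=200):
    """Random shooting: sample action sequences, execute the first action of the best."""
    candidates = rng.uniform(-1.0, 1.0, size=(num_samples, horizon, 1))
    costs = [imagined_cost(theta, x, seq) for seq in candidates]
    return candidates[int(np.argmin(costs))][0]


# 1) Learn the model from random interaction data.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 2))
U = rng.uniform(-1.0, 1.0, size=(200, 1))
X_next = np.array([real_step(x, u) for x, u in zip(X, U)])
theta = fit_world_model(X, U, X_next)

# 2) Control the real system by planning inside the learned model.
x = np.array([1.0, 0.0])
for _ in range(20):
    x = real_step(x, plan(theta, x, rng))
```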

3. Continuous Learning and On‑Device Adaptation

Even after deployment, robots can keep refining their policies using small amounts of real‑world interaction data, a process known as continual (or online) learning, improving performance over time.
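On‑device adaptation can be as lightweight as updating one model parameter from streaming data. Here is a minimal sketch, assuming a scalar actuator gain `k` (measured response ≈ k × command) that starts at its simulation value and later drifts on the real robot. Recursive least squares with a forgetting factor serves here as one standard estimator; the class name, defaults, and drift scenario are hypothetical.

```python
import numpy as np


class OnlineGainEstimator:
    """Recursive least squares with forgetting for the scalar model y = k * u."""

    def __init__(self, k_init=1.0, p_init=100.0, forgetting=0.99):
        self.k = k_init         # current estimate, e.g. the value used in simulation
        self.p = p_init         # uncertainty of the estimate
        self.lam = forgetting   # values < 1 let old data fade so k can track drift

    def update(self, u, y):
        gain = self.p * u / (self.lam + u * self.p * u)
        self.k += gain * (y - self.k * u)
        self.p = (self.p - gain * u * self.p) / self.lam
        return self.k


# Simulated deployment: the true gain is 0.8, then drops to 0.6 (e.g. motor wear).
rng = np.random.default_rng(0)
estimator = OnlineGainEstimator(k_init=1.0)
estimates = []
for true_k in (0.8, 0.6):
    for _ in range(500):
        u = rng.uniform(-1.0, 1.0)
        y = true_k * u + rng.normal(0.0, 0.01)
        estimator.update(u, y)
    estimates.append(estimator.k)
    print(f"true k = {true_k:.2f}, estimate = {estimator.k:.3f}")
```

The forgetting factor trades noise for responsiveness: values closer to 1 average over more data but react more slowly when the hardware changes.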

Ethics, Responsibility, and Societal Impact

When humanoids learn in simulation and act in the real world, ethical and societal questions arise:

- Safety: How do we ensure a robot trained in approximated physics doesn’t behave dangerously in reality?

- Trust: Will humans feel comfortable collaborating with humanoids that learned behaviors without physical testing?

- Responsibility: If a robot causes harm, who is accountable — the programmer, the trainer, or the simulator architect?

These questions don’t have easy answers, but they are crucial as sim‑to‑real approaches gain broader use. Discussion about regulation and trust frameworks must develop alongside the technical advances.

Conclusion: A Technological Horizon Within Reach

So, can you train a humanoid in simulation and transfer it to the real world? The answer today is a qualified yes, with impressive demonstrations in locomotion, manipulation, and other skills using domain randomization, hybrid learning pipelines, and robust modeling. However, gaps remain to be closed before we see fully generalist humanoids that adapt to any real‑world environment without real‑world training.

The trajectory is clear: simulation will remain central to robotics learning, but the art of bridging the reality gap will continue to evolve — combining rich simulations, real‑world feedback, and ever smarter learning algorithms.