Humanity stands at a crossroads. A new class of machines, humanoid robots, is edging out of research labs and into everyday life. Their arrival is not a distant sci‑fi dream but a present‑day technological wave reshaping work, care, public services, and even how we interpret trust itself. As these machines gain agency and autonomy, the question grows ever more urgent: Is public trust keeping pace with humanoid robot deployment?

This question matters because trust is not just an emotional reaction — it is the lubricant that makes human‑robot collaboration possible, safe, and effective. Without trust, the promise of humanoid robotics can falter; with misplaced trust, the risks may outweigh the benefits.

In this article, we examine how trust is evolving — scientifically, socially, ethically, and practically — in tandem with increasingly capable humanoid robots. We will explore current research findings, psychological dynamics, design and regulatory factors, socio‑cultural responses, and what lies ahead in aligning public trust with the rapid deployment of humanoid robotic systems.

1. The Rise of Humanoid Robots: Beyond Sci‑Fi to Everyday Reality

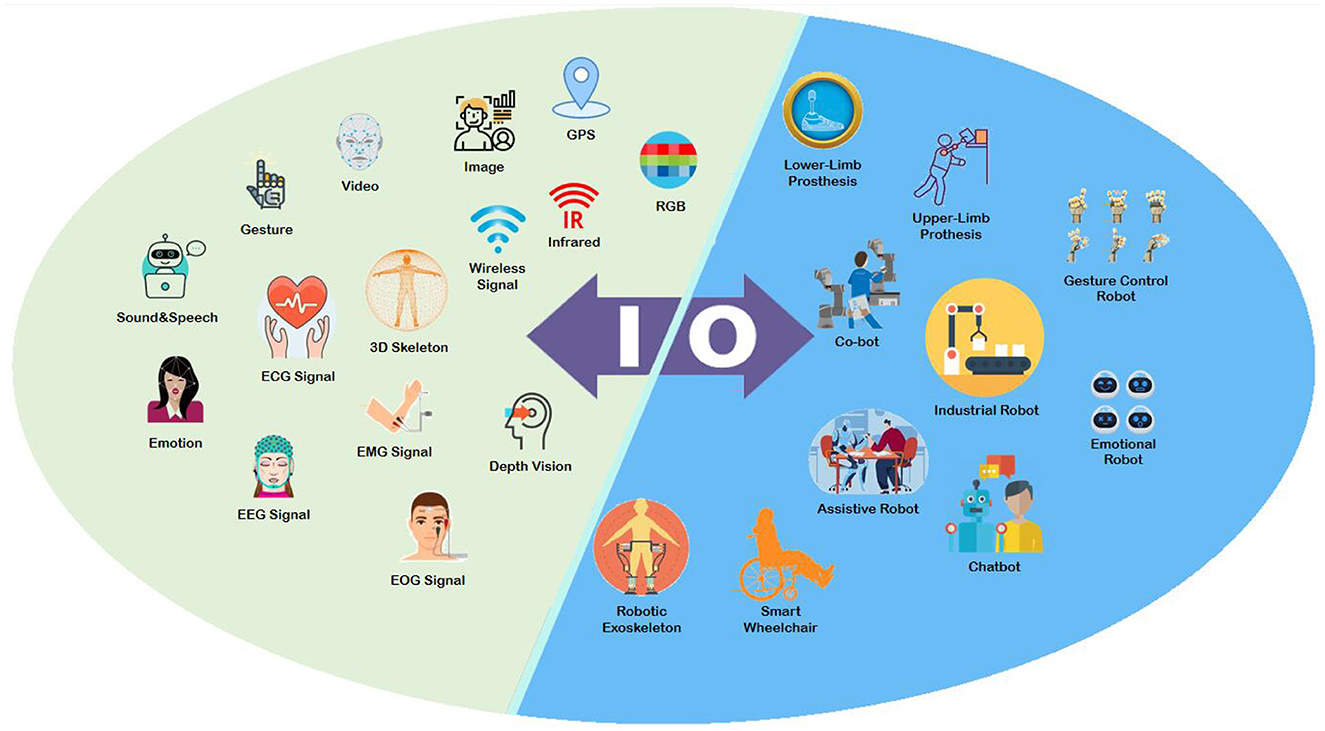

Humanoid robots today encompass a broad class of machines designed with an emphasis on human‑like form or behavior. Characterized by a torso, limbs, and sometimes a face, they are engineered to interact with humans in social, industrial, and service environments. Unlike traditional industrial robots, which are caged in factories, humanoids are intended to share spaces with people — navigating corridors, offering assistance, serving as companions, or even making decisions autonomously.

In the last decade alone, milestones such as Boston Dynamics’ Atlas and commercially oriented humanoids by multiple global firms have accelerated public awareness and deployment. As robots gain sophisticated sensors, AI‑driven decision capabilities, and natural interaction modalities, their roles expand — from logistics and care to public relations and retail assistance.

This rapid technological progress brings both palpable excitement and real unease. Trust becomes not merely a psychological construct but a socio‑technical constraint that can accelerate or impede real adoption.

2. What We Mean by “Trust” in Robots

To understand whether trust is keeping pace, we must define trust in this context.

In human‑robot interaction research, trust is often operationalized as a willingness to rely on a robot’s actions while being vulnerable to its decisions. This includes cognitive trust (belief in capability and reliability) and affective trust (emotional comfort and rapport). Trust is neither binary nor static; it varies with context, task complexity, and prior experiences.

Studies show that robots that make promises, exhibit sociability, or display human‑like behavior are more likely to be trusted by humans, especially when users perceive them as similar to humans in capability or intent.

But trust is not only influenced by appearance or social cues — it is calibrated through performance, predictability, transparency, and alignment with human goals.

3. Public Perception vs. Real Capabilities

A core challenge is that public perception is often out of step with reality. Media hype can inflate expectations about robots becoming flawless helpers or companions, while real‑world performance is still limited by constraints in AI reasoning, durability, safety, and context awareness.

Research indicates that public trust often correlates with perceived intelligence and likability — even if actual capabilities are modest. For example, humanoid robots are generally trusted more than non‑humanoid machines in caregiving or personal contexts, largely because people ascribe higher intelligence and “aliveness” to machines that look more human‑like.

This trust based on form rather than function can be a double‑edged sword. On one hand, it helps people accept and cooperate with robots; on the other, it risks over‑trust, where users rely on robots beyond what is safe or justified by real performance.

4. The Science of Trust: What Studies Reveal

Empirical research offers nuanced insights into how trust evolves:

4.1 Ethical Compliance and Trust

Experiments show that robots adhering to ethical principles (e.g., safety, harm minimization, obedience to human directives) earn higher trust than those that violate norms. However, trust is also sensitive to decision type — a robot that takes action may be trusted more in some contexts but less in others when ethical priorities conflict.

This complexity highlights that ethical behavior — or at least the perception of it — is central to trust, not just technical competence.

4.2 Sociability and Trust Gains

Studies confirm that robots perceived as socially adept — able to understand and respond to human behavior — are more likely to be trusted. Sociability accounts for a large part of why people trust certain robots more than others.

Yet sociability does not guarantee appropriate trust; if robots mimic empathy without genuine understanding, users may form misleading emotional attachments at odds with the robot’s capabilities.

4.3 Embodiment and Human Models

Human trust isn’t purely rational; people form mental models of agents. A robot perceived to have high agency (the ability to act autonomously and intentionally) may be trusted more, even if its actual performance is poorer than expected.

This insight suggests that design elements — including behavior patterns, responsiveness, and human‑like agency — can shape trust independently of objective performance. It raises key questions about whether design should emphasize appearance or trustworthy performance.

5. Cultural and Individual Differences in Trust

Trust is not universal. Different populations vary in their baseline trust of robots based on cultural norms, age groups, and experiences.

Children, for example, show increasing trust in humanoid robots with age, and the degree of anthropomorphism affects trust differently depending on developmental stage.

Similarly, cross‑cultural studies suggest that societal values influence how robots are perceived: collectivist cultures may emphasize harmony and collaboration, while individualist cultures may emphasize autonomy and skepticism.

6. Trust Calibration: Not Too Much, Not Too Little

Whether trust is “keeping pace” depends on calibration: balancing under‑trust and over‑trust. Too little trust hinders adoption; too much trust can lead to misuse, harms, or unsafe delegation.

Researchers conceptualize trust bias in human‑robot interaction as the gap between perceived reliability and actual reliability. Trust calibration involves strategies to avoid both extremes — for example, increasing transparency, providing clear explanations of robot decisions, or designing robots to repair trust when errors occur.
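The gap described above can be made concrete with a small sketch. The example below is illustrative only: the `TrustObservation` class, the 0-to-1 scales, and the `tolerance` threshold are assumptions introduced here, not measures taken from the research discussed in this article.

```python
from dataclasses import dataclass


@dataclass
class TrustObservation:
    """One user's view of one robot (hypothetical structure for illustration)."""
    perceived_reliability: float  # user's estimate on an assumed 0.0-1.0 scale
    actual_reliability: float     # measured success rate on the same assumed scale


def trust_bias(obs: TrustObservation) -> float:
    """Signed gap: positive means over-trust, negative means under-trust."""
    return obs.perceived_reliability - obs.actual_reliability


def classify(obs: TrustObservation, tolerance: float = 0.1) -> str:
    """Label the gap; the tolerance band is an arbitrary illustrative choice."""
    bias = trust_bias(obs)
    if bias > tolerance:
        return "over-trust"
    if bias < -tolerance:
        return "under-trust"
    return "calibrated"


if __name__ == "__main__":
    # A user who believes the robot succeeds 90% of the time when it succeeds 60%.
    print(classify(TrustObservation(perceived_reliability=0.9, actual_reliability=0.6)))
```

In this framing, calibration strategies such as explanations or trust repair aim to move the signed gap toward zero from either direction, rather than simply raising trust.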

7. Design Strategies to Support Trust

Designers play a key role in shaping public trust. Trust‑centric design includes:

- Predictable behavior: Robots that behave consistently foster confidence.

- Transparent decision processes: Explanations of why a robot chose a course of action can mitigate mistrust.

- Appropriate embodiment: Robot forms that match user expectations avoid inflating perceived capability.

- Safety and redundancy: Fail‑safe mechanisms reassure users even during unexpected situations.

When robots communicate clearly about their limits and uncertainties, users can form more accurate mental models, which benefits the collaboration as a whole.

8. Deployment Contexts and Trust Challenges

Humanoid robots are entering diverse domains:

8.1 Healthcare and Caregiving

In healthcare settings, trust stakes are high. Research on public trust in humanoid robot doctors suggests that, in some cases, trust in robot doctors is as high as or even slightly higher than trust in human doctors, though results vary across contexts and populations.

This signals that humans may accept robotic assistance, particularly for routine or informational tasks, but it also raises critical questions about medical accountability and ethical responsibility.

8.2 Workplace Integration

In organizational environments, trust affects employee acceptance of, and collaboration with, assistive robots. Studies indicate that trust levels relate not only to functional ability but also to emotional rapport, with emotional trust leading to higher perceptions of warmth and greater work contribution intentions.

As robots assist with repetitive tasks or augment human roles, workforce adaptation and trust dynamics will shape organizational outcomes.

9. Regulation and Public Policy: Trust Beyond Emotion

Technical performance and design are only part of the trust equation. Regulatory frameworks are critical to ensure that deployment is safe, ethical, and aligned with social values.

Governments and industry bodies are proposing and implementing AI and robotics regulations targeting transparency, safety standards, and liability. Effective regulation can reinforce public trust when people know that independent oversight and accountability mechanisms exist.

For example, proposed AI laws in various jurisdictions include requirements for human oversight, safety testing, and impact assessments. These efforts acknowledge that trust is not solely an individual feeling but a societal contract backed by institutions.

Bad actors, opaque systems, or weak governance can erode trust faster than robotics innovation can build it.

10. Ethical and Societal Implications

Trust in humanoid robots intersects with ethics and societal values:

- Privacy and surveillance: Robots with cameras and sensors can collect sensitive data — raising concerns about data use and consent.

- Responsibility and liability: When robots err, who is accountable — the developer, operator, or robot itself?

- Human dignity and autonomy: In caregiving or companionship roles, overly persuasive machines could influence human behavior or undermine autonomy.

- Socio‑economic impact: As automation increases, how will trust evolve in a society where robots replace or augment human labor?

These questions require multi‑disciplinary thinking — beyond engineering and design, into ethics, law, and public discourse.

11. Where Trust Is Headed: Future Directions

So, is public trust keeping pace with humanoid robot deployment? The answer is mixed.

On one hand, gains in sociability, human‑like design, and positive experiences are building trust in specific contexts. On the other, gaps remain between perceived trustworthiness and actual capability, and public understanding varies widely across demographics and applications.

To keep pace, stakeholders — including researchers, designers, policymakers, and educators — must work together to:

- Cultivate transparent communication about robot capabilities and limitations.

- Develop trust calibration frameworks that avoid both under‑trust and over‑trust.

- Embed ethical principles into design and deployment.

- Strengthen regulatory standards that protect users and promote confidence.

Trust is not guaranteed by technology alone; it is earned through consistent performance, clear boundaries, and accountable governance.

Humanoid robots may not be fully trusted everywhere tomorrow — but with thoughtful stewardship, public trust can rise in tandem with their growing presence.