Introduction: A New Regulatory Landscape

Across industries and societies, machines that think and act autonomously are no longer science fiction; they are part of everyday life. Robots powered by advanced artificial intelligence (AI) are transforming manufacturing lines, customer service, transportation, healthcare, and even the operations of government itself. These technologies promise efficiency, cost savings, and new capabilities that extend what people can do. Yet each advance raises regulatory questions of unprecedented scale and complexity. How should governments respond when technology evolves faster than the laws meant to govern it? And can regulatory systems rooted in centuries‑old legal frameworks keep pace with robot‑driven transformation? This article explores these questions, examining governance challenges, global efforts to adapt legal frameworks, emerging regulatory experiments, contests over regulatory leadership, and the deep ethical implications at stake.

The Scope of Robot‑Driven Disruption

When we talk about robot‑driven regulation, we mean a regulatory environment that must account for machines capable of autonomous or semi‑autonomous perception, decision‑making, and action. Robots today include industrial arms on factory floors, autonomous vehicles navigating public roads, algorithmic systems managing critical infrastructure, care robots in hospitals and homes, and AI systems engaging in complex reasoning. Their capabilities differ significantly, yet they share one trait: they challenge the assumptions on which legal and regulatory frameworks were built. Existing laws generally presume a human agent, meaning someone who can be held accountable, can act with intent, and can be sanctioned for wrongdoing. Robots complicate each of those assumptions.

Regulatory gaps are widespread. For instance, autonomous weapons, which can select and engage targets without human intervention, are proliferating on battlefields even as global regulation lags far behind. Within the UN Convention on Certain Conventional Weapons (CCW), states have met repeatedly to discuss limits on autonomous weapons systems, yet no binding international rules have been agreed. Major powers remain reluctant to constrain their own military technology, which undermines consensus. Experts also warn that, without clear limits, autonomous weapons could accelerate an arms race and erode legal accountability for lethal actions.

Beyond military technology, robots and AI systems are reshaping civilian sectors. Governments are experimenting with AI assistants inside their own bureaucracies — such as AI “employees” handling administrative tasks — prompting questions about rights, transparency, auditability, and public trust. In one Chinese city, an AI‑powered team of “digital employees” was formally regulated under a pioneering policy that defines rights and obligations for automated government assistants.

These developments underscore a key point: regulations must now govern both how robots are developed and how they are deployed in civil society.

Why Robot‑Driven Regulation Is Hard

1. The Speed of Innovation Outpaces Lawmaking

One central challenge is speed. Technological change, particularly in AI and robotics, is often described as exponential rather than linear. Legislatures and regulatory bodies, by contrast, operate through deliberative processes that are slow by design. This mismatch leaves lawmakers constantly playing catch‑up, writing rules for technologies that have often moved on by the time those rules take effect.

The Organisation for Economic Co‑operation and Development (OECD) has documented that inflexible, outdated, or fragmented legal environments hinder effective regulatory adaptation. While some existing rules can be adjusted, others simply do not anticipate autonomous agents, predictive systems, or learning machines. These gaps create uncertainty for regulators and industry alike.

2. Complexity of Technology and Expertise Gaps

Technical complexity compounds this speed mismatch. Modern robots combine sensors, AI reasoning models, autonomous navigation, and communications networks into highly adaptive systems. Most lawmakers and regulators lack deep technical expertise in these domains, making it difficult to foresee risks or craft effective interventions.

This problem is not hypothetical: policymakers struggle with basic questions such as how to define “autonomy,” when human oversight is sufficient, and at what point an algorithm, rather than a human operator, holds effective control over a system. Without clear definitions and shared technical reference points, creating robust rules becomes almost impossible.

3. Fragmented Jurisdictions and Global Coordination Challenges

Robots and AI do not stop at national borders. Data moves across jurisdictions, and multinational companies often deploy AI systems worldwide. Yet regulatory regimes vary dramatically between nations. Some prioritize innovation, others consumer protection, and still others national security or social values. Without global coordination, policymakers risk regulatory fragmentation: a world where companies must navigate incompatible requirements or can exploit loopholes in nations with weaker oversight.

International governance efforts — such as UN deliberations on autonomous weapons — illustrate just how hard global consensus can be, especially where military or economic interests diverge.

4. Competing Policy Goals: Innovation Versus Regulation

Regulators often struggle to balance two competing imperatives: fostering innovation and protecting public safety. Some industry leaders argue that overly restrictive regulation will stifle innovation and slow economic growth. Others in the industry disagree. Microsoft’s chief scientist, for example, has suggested that effective regulation could accelerate, not hinder, AI development, provided it is designed thoughtfully and in collaboration with technologists.

Critics of a light‑touch approach counter that, without stringent rules, technology may harm society in ways that outweigh its benefits. Poorly regulated AI systems can amplify misinformation, entrench biases, or make automated decisions that damage individuals’ rights and livelihoods. Lawmakers therefore face the unenviable task of writing rules that protect the public without stifling innovation.

Emerging Approaches to Governance

Governments and international bodies are experimenting with a variety of approaches to robot‑driven regulatory needs. These approaches reflect different philosophies of lawmaking as well as different practical constraints.

1. Sector‑Specific Regulation

Some jurisdictions are adopting sector‑specific rules that target particular high‑risk applications. California, for example, has passed one of the first laws regulating AI companion chatbots. The law requires safety protocols and sets penalties intended to protect vulnerable users from harm.

Sector‑specific frameworks allow regulators to tailor rules to particular risks, such as those posed by medical robots, self‑driving cars, or automated trading systems, rather than imposing one rigid solution on all AI and robotics systems.

2. Dynamic and Adaptive Regulation (“Regulatory Sandboxes”)

Another promising approach is creating regulatory sandboxes. These are controlled environments where companies can test innovative technologies under regulatory supervision. Sandboxes allow regulators to learn about new systems firsthand, observe risks in real time, and iteratively update rules based on evidence rather than speculation. This approach has been used in financial technology and is now being explored for AI and robotics.

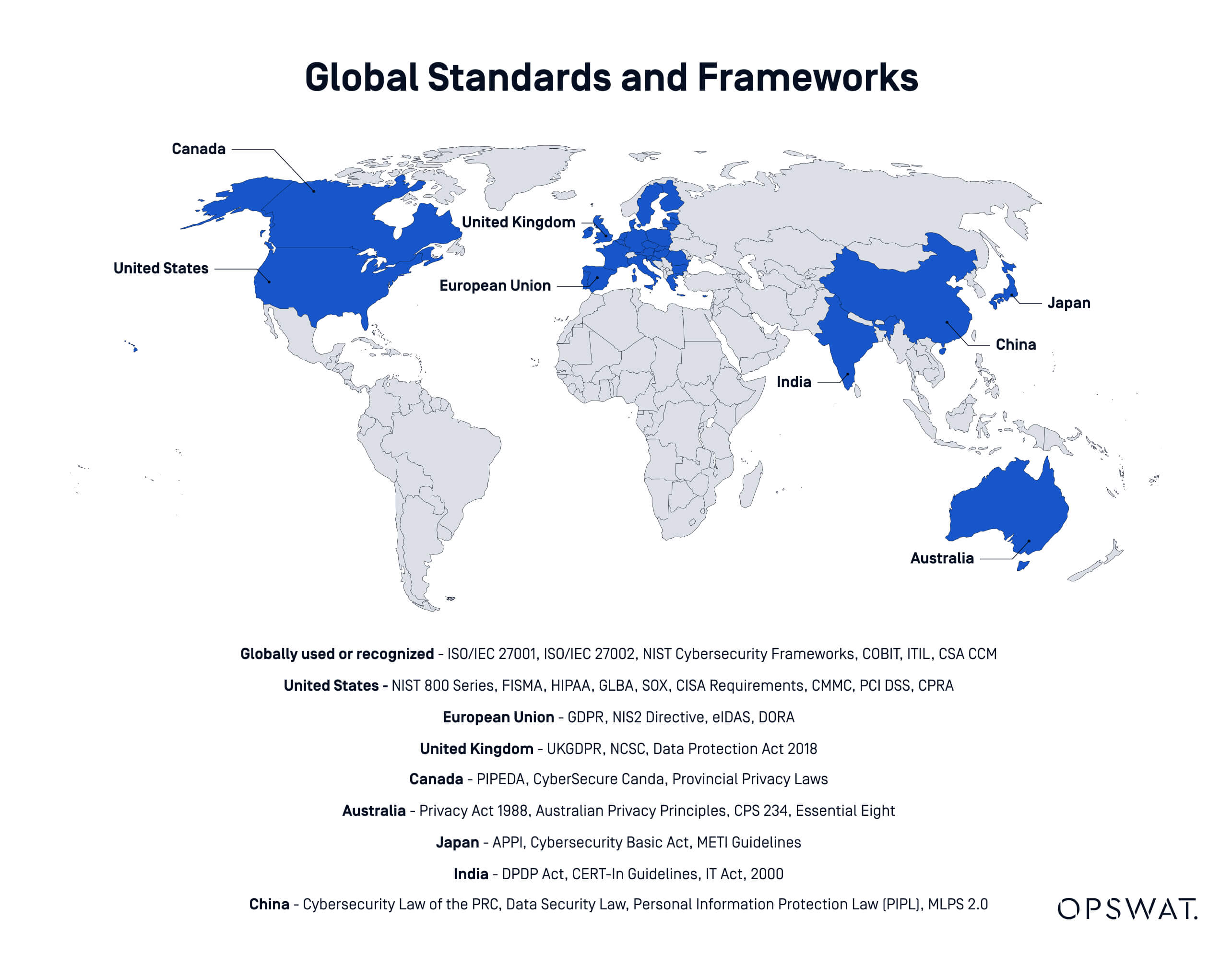

3. Standards and Best Practices

International technical standards — developed collaboratively by governments, industry, and standards organizations — can offer baselines for safety, interoperability, and risk management. These standards do not replace laws but help create a shared foundation for regulation. Interoperability in safety governance, for example, has been identified as a way to reduce systemic risks and enhance competitiveness across borders.

4. Ethical and Rights‑Based Frameworks

Governments are also incorporating ethical principles into regulatory frameworks, including transparency, accountability, human rights protection, and fairness. The United Nations, notably through UNESCO, has promoted principles for AI governance that emphasize human dignity, privacy, and public participation in policymaking.

Such frameworks aim to ground technical rules in broader societal values, ensuring that regulations do not merely manage risk but also align technology’s deployment with democratic norms and human dignity.

5. Hybrid National‑International Frameworks

Because AI and robotics are global phenomena, some experts advocate hybrid governance models that combine national frameworks with international standards and treaties. This hybrid approach seeks to balance local autonomy with global consistency, while enabling regulatory experimentation within a shared worldwide architecture.

Case Studies: National and Local Innovations

European Union: Attempting Comprehensive Regulation

The European Union has been at the forefront of broad AI regulation. Its AI Act sets risk‑based obligations for AI systems, and the EU has also pursued liability reforms intended to clarify responsibility for harm caused by robots and autonomous systems. These liability measures aim to make it easier for citizens to seek redress when harmed by AI‑based products and services, and to clarify how manufacturers and deployers share responsibility.

By focusing on structured frameworks that define responsibilities, the EU model attempts to ensure accountability without stifling innovation.

United States: Federal Versus State Tensions

In the United States, federal and state governments are wrestling over who should regulate AI and robot technologies. Recent executive actions have sought national consistency, but critics argue these may overreach or conflict with state authority.

Meanwhile, states like California have moved ahead with their own AI rules, particularly in areas like digital assistants and consumer protection. This patchwork reflects both the country’s federal structure and its innovation dynamics, but it also makes coherent nationwide regulation harder to maintain.

China: Ambitious Directives and Social Controls

China has adopted high‑profile plans to govern AI and robotics as part of its national development strategy. These include requirements that AI technologies align with state‑defined principles and values, as well as interim measures for generative AI services that address content safety.

Local initiatives — such as regulatory frameworks for “digital civil servants” — demonstrate how municipal governments are experimenting with governance models that blend supervision, ethical standards, and industrial promotion.

Key Challenges in Practice

Enforcing Accountability and Liability

When robots operate autonomously and continuously learn from their environments, determining accountability becomes difficult. If a self‑driving vehicle injures a pedestrian, who is at fault? The manufacturer? The software developer? The owner? The regulator who approved it? Traditional liability rules struggle to handle these scenarios.

Reforming liability systems to address autonomous actors, dynamic algorithms, and continuous updates is a major legal challenge.

Balancing Innovation and Public Safety

Too lax a regulatory regime can expose society to risks such as data breaches, wrongful outcomes, or systemic bias; too strict a regime can stifle innovation and economic competitiveness. Finding the right balance requires both technical understanding and policy nuance.

Resource and Expertise Limitations

Many regulatory bodies lack the technical expertise to fully understand the technologies they regulate. Building in‑house capability or partnering with independent technical advisory panels is necessary but also resource‑intensive.

Global Misalignment and Strategic Competition

In areas like autonomous military AI, regulatory misalignment can have strategic implications. Competing national interests, whether economic or military, make international treaties hard to negotiate and can stall global agreements, leaving governance gaps.

Can Governments Truly Keep Up?

The answer is complex. Governments cannot keep up if they rely exclusively on traditional legislative processes and existing institutions. Yet no government is powerless. By adopting innovative regulatory tactics, investing in expertise, engaging in international cooperation, and embedding ethical principles into legislation, governments can help regulation evolve alongside technology.

Instead of seeing regulation and innovation as opposites, framing them as complementary goals — where thoughtful rules enable trustworthy, safe, and socially beneficial technology — is a more productive paradigm. This shift requires more than laws; it necessitates stewardship: governments that guide technological progress to serve societal goals, protect rights, and empower citizens.

In many ways, robot‑driven regulation is a grand experiment in modern governance. Its success will determine not only how we control technology, but also how we define human autonomy, social values, and public welfare in the age of intelligent machines.