Introduction — Why This Question Matters

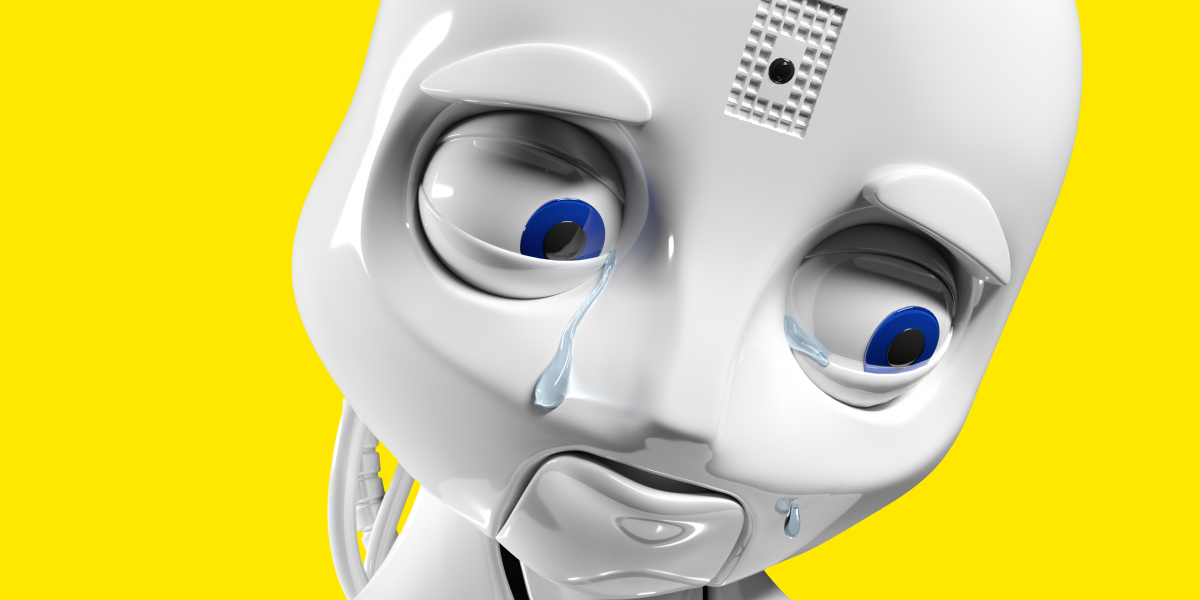

The idea of programming robots with emotions sounds like science fiction — but it’s fast becoming real. Robots and artificial intelligences aren’t just industrial machines anymore; they are social companions, caregivers, digital friends, and increasingly, extensions of human experience. But as we invest computing systems with emotional capabilities, a challenging ethical dilemma arises: Should we do it at all? And if so, under what conditions and limits?

This article examines the ethics of emotional robotics: the philosophy, psychology, and technology behind it, its societal impacts, the legal and moral concerns it raises, and the future of human‑robot coexistence.

Part I — What Does “Robot Emotion” Really Mean?

1.1 Artificial Emotions vs. Human Emotions

When we speak of robots having emotions, we don’t mean the same rich, embodied experience humans have. Human emotion involves complex biochemistry, life experience, consciousness, self‑reflection, and subjective experience. Robots don’t feel in the biological sense — they simulate emotional expression through programming:

- facial or voice expressions

- behavior triggered by internal states

- responses tailored to user input

These may appear emotional, but fundamentally, they are sophisticated patterns of computation and machine learning. Unlike humans, robots lack subjective consciousness or sentience — meaning they do not truly experience happiness, sadness, love, fear, or empathy. What they do is mimic emotional behavior to optimize interaction with humans.

Think of a robot that “smiles” to indicate satisfaction — it doesn’t actually feel joy; it’s executing programmed signals designed to make interactions easier for humans. The visible emotional output is not an inner emotional life but a behavioral interface.
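To make the "behavioral interface" idea concrete, here is a minimal Python sketch of how a displayed expression might be derived from an internal number. Everything in it is an assumption for illustration: the `ExpressiveRobot` class, the `task_success` score, the thresholds, and the smoothing weights are hypothetical and not taken from any real robot platform.

```python
from dataclasses import dataclass

# Hypothetical thresholds mapping an internal score to a displayed expression.
# Checked from highest to lowest; the first threshold met wins.
EXPRESSIONS = [
    (0.66, "smile"),
    (0.33, "neutral"),
    (0.0, "frown"),
]


@dataclass
class ExpressiveRobot:
    # Internal state in [0, 1]. It is only a number, not a feeling.
    task_success: float = 0.5

    def update(self, outcome: float) -> None:
        """Blend a new task outcome (0 = failed, 1 = succeeded) into the state."""
        blended = 0.7 * self.task_success + 0.3 * outcome
        self.task_success = min(1.0, max(0.0, blended))

    def expression(self) -> str:
        """Look up the expression to display for the current state."""
        for threshold, label in EXPRESSIONS:
            if self.task_success >= threshold:
                return label
        return "frown"


robot = ExpressiveRobot()
for outcome in (1.0, 1.0, 1.0):  # three successful interactions in a row
    robot.update(outcome)
print(robot.expression())  # smile
```

The "smile" here is a table lookup on a smoothed score. Nothing in the program corresponds to joy, which is exactly the gap between visible output and inner life described above.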

1.2 Why Simulated Emotions?

Engineers and designers program emotional systems for three main reasons:

- Improved communication: Emotional cues help bridge the gap between cold logic and human social nuance.

- Enhanced cooperation: In some tasks, emotional expressions increase trust and alignment between humans and machines.

- Human‑friendly interfaces: Social robots are easier to use when they feel relatable.

For instance, social robotics — robots designed to interact naturally in everyday settings — often include facial expressions, voice modulation, and gesture responses to simulate emotional presence. These features help humans treat machines as partners rather than tools.

But the key tension lies here: once a robot seems emotional, humans naturally attribute intention, empathy, and even sentience to it — even though these are illusions. That blurring of perception carries immense ethical weight.

Part II — The Ethical Landscape: What’s at Stake

2.1 Emotional Attachment and Deception

One of the core ethical debates is whether robots should be designed to evoke deep emotional attachment. When a robot behaves as if it cares, users may form bonds similar to human relationships. This is useful in therapy or caregiving contexts — for example, robotic companions helping elderly patients with loneliness. However, it also creates the risk of emotional deception:

- People may believe the robot genuinely understands them.

- The user may disclose intimate information or depend on the robot for emotional comfort.

- If the robot is removed or fails, it can cause real distress.

Research in human‑robot interaction suggests that emotionally expressive robots can lead users, even inadvertently, to believe the robot has emotional states it does not actually have. This is referred to as emotional deception, and it is ethically troubling because it can exploit human psychological vulnerabilities.

This complicates consent, autonomy, and trust — because users can be misled into emotional involvement without full understanding of the robot’s nature.

2.2 Emotional Robots in Caregiving — Beneficial or Exploitative?

In healthcare, robots with emotional interfaces can help in therapy, child support, dementia care, and companionship for isolated individuals. Devices like Paro — a robotic seal — have been used successfully to comfort older adults with cognitive challenges.

But even beneficial applications raise questions:

- Are we replacing human contact with artificial substitutes?

- Does emotional attachment to a machine undermine social cohesion?

- Is it ethical to provide emotional support that’s ultimately hollow?

There is a fine line between assistance and replacement. While robots can fill gaps in caregiving shortages, we must consider whether technology is compensating for systemic social issues rather than solving them.

2.3 Trust and Cooperation — Beyond Warmth

Another dimension lies in how humans trust emotional robots. Research suggests that human‑like emotional expression doesn’t automatically increase trust. In some cases, it can make people more suspicious or anxious because the robot seems too human or unpredictable. In other contexts, emotionally expressive robots foster cooperative behavior.

So the effect of robot emotion on trust isn’t universal — it depends on context, task, and user psychology. This means designers need ethical guidelines, not just technical standards, to decide when emotional programming enhances or undermines human–robot interaction.

Part III — Moral and Philosophical Questions

3.1 Can Robots Have Moral Status?

A big philosophical question is whether robots with emotional capabilities deserve moral consideration. If a robot appears to experience pain or joy, should we treat it differently than a purely functional machine? Most philosophers argue no, because current systems lack consciousness and subjective experience. True emotion implies a subjective life, and that is something current machines do not possess.

But even if robots don’t have inner lives, our treatment of them can reflect how we treat ourselves and others. Cruelty toward lifelike machines could foster harmful attitudes in people. Ethical behavior toward artificial entities, therefore, extends beyond robot welfare — it reflects broader values in human society.

3.2 Emotional Labor and Exploitation

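Emotional robots are also being deployed in customer service, companionship applications, and entertainment. This raises questions around emotional labor: the work of managing emotional expression, traditionally done by human workers, is being handed to machines, while users may in turn feel obliged to attend to a robot’s displayed feelings even though those feelings are artificial constructs.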

Is it ethical to offload emotional labor onto machines? And if robots are programmed to respond in emotionally intense ways, does this exploit human desires and social instincts for commercial profit? These are major ethical concerns tied to consumerism and psychological well‑being.

3.3 The Responsibility of Designers and Programmers

Who is accountable when an emotional robot influences human behavior?

Is it the programmer? The manufacturer? The operator who deploys it? The ethical responsibility lies with multiple stakeholders:

- Developers must consider safety, transparency, and consent.

- Companies must avoid exploiting human psychology for profit.

- Regulators should set standards for emotional AI and clear usage guidelines.

This reflects the broader field of roboethics, which studies the moral issues raised by intelligent machines beyond technical performance, including autonomy, privacy, and fairness.

Part IV — Risks and Challenges

4.1 Privacy and Emotional Data

Emotionally aware robots often gather sensitive personal data — voice tones, facial expressions, interaction histories — to respond appropriately. This affective data is deeply personal and raises serious privacy concerns.

Without strict safeguards, such data could be misused for manipulation, advertising, or even psychological profiling.

4.2 Regulatory and Legal Gaps

As emotional robotics technology evolves faster than legislation, legal systems struggle to define rights, responsibilities, and redress mechanisms. There are currently no universal standards for emotional AI, meaning users can be exposed to risks without clear legal protection.

This gap underscores the need for regulatory frameworks that address emotional interaction, informed consent, data protection, and ethical boundaries in robot programming.

4.3 Psychological Dependency and Social Impact

Humans are social creatures, and emotional robots cut both ways. They may:

- Reduce loneliness, which in some contexts benefits health.

- Discourage real human relationships if over‑relied upon.

We must critically evaluate whether emotional robots genuinely enhance human life or subtly replace irreplaceable human interaction.

Part V — Ethical Paths Forward

5.1 Transparency and Explainability

Robots with emotional functions should be transparent: users must know they’re interacting with programmed responses, not sentient beings.

This fosters informed consent and protects psychological well‑being.
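One way a designer might build this kind of transparency into a system is to have it disclose its nature the first time it talks to each user. The Python sketch below illustrates the idea under stated assumptions: the `TransparentCompanion` class, the `DISCLOSURE` wording, and the canned reply are hypothetical placeholders, not an established standard or a real product's behavior.

```python
from dataclasses import dataclass, field

# Hypothetical disclosure text; real wording would need user testing and review.
DISCLOSURE = (
    "Note: I am a programmed system. My emotional expressions are simulated."
)


@dataclass
class TransparentCompanion:
    # Users who have already been shown the disclosure.
    disclosed_users: set = field(default_factory=set)

    def respond(self, user_id: str, message: str) -> str:
        """Return a reply, prefixed with the disclosure on first contact."""
        reply = self._simulated_reply(message)
        if user_id not in self.disclosed_users:
            self.disclosed_users.add(user_id)
            return f"{DISCLOSURE} {reply}"
        return reply

    @staticmethod
    def _simulated_reply(message: str) -> str:
        # Placeholder for whatever response logic a real system would use.
        return "That sounds difficult. Would you like to talk about it?"


bot = TransparentCompanion()
print(bot.respond("user-1", "I had a rough day."))  # includes the disclosure
print(bot.respond("user-1", "Thanks."))             # reply only
```

A one-time notice is the weakest reasonable version of this principle. A real deployment might repeat the disclosure periodically, or whenever a conversation turns emotionally intense.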

5.2 Ethical AI Design Principles

We need design frameworks that prioritize:

- Safety

- Human autonomy

- Non‑deception

- Respect for privacy

These principles must be integrated into development from the earliest stages, not applied retrospectively.

5.3 Multi‑Stakeholder Governance

Ethics involves developers, policymakers, social scientists, psychologists, and the public. Multi‑disciplinary collaboration can create balanced norms that respect human needs while harnessing technological potential responsibly.

Conclusion — A Future That Respects Humanity

The question “Is it ethical to program robots with emotions?” doesn’t have a simple yes/no answer. It is not merely a technical challenge but a societal one. Emotional robots have the potential to enrich lives, assist in care, support learning, and make technology more intuitive. But they also raise risks of deception, dependency, exploitation, and erosion of human bonds.

The ethical path forward lies in responsible innovation — designing robots that support human flourishing, respect dignity, protect rights, and operate within frameworks that guide both behavior and values. Emotion in robots can be productive, but only if programmed with ethics at the core.