Humanoid robots, machines whose body structure is modeled on the human form, are moving from futuristic curiosities toward practical workhorses in industrial automation. What makes this possible? The combination of artificial intelligence (AI) and advanced sensors, which together turn these robots from rigidly programmed machines into perceptive, adaptive collaborators capable of tackling real‑world industrial tasks.

This article explores how AI and sensors work in concert to improve humanoid robot performance in industrial environments. We’ll walk through the mechanics of perception, cognition, motion, and interaction, then look at industrial use cases and the challenges that remain.

1. The Industrial Challenge: Why Humanoid Robots?

Industrial environments are dynamic and often unstructured. Unlike fixed robotic arms bolted to production lines, humanoid robots are designed to navigate unpredictable spaces, adapt to variations in tasks, and interact safely with humans and objects. Imagine robots capable of walking across a factory floor, handling tools, inspecting equipment, or even carrying out maintenance tasks on complex machinery. That’s the promise of humanoid robotics in industry.

But realizing this promise requires two critical components:

- Perception — Robots must sense the world with high fidelity.

- Intelligence — Robots must decide and act intelligently based on what they sense.

This is where AI and sensors become indispensable.

2. Sensors: The Gateway to the Physical World

2.1. Seeing the World with Vision Systems

Vision is arguably the most important sense for humanoid robots. Industrial environments are filled with objects of varying shapes, sizes, and orientations. To handle these objects, robots must “see” them.

Camera systems, often paired with depth sensors such as LiDAR or built as RGB‑D cameras that capture depth alongside color, provide 3D perception, letting robots build rich spatial maps of their surroundings. These sensor systems allow robots to:

- Detect and localize objects

- Distinguish between workpieces and obstacles

- Navigate complex floor plans

In vision‑guided robotics, images from cameras are processed to provide continuous feedback on object positions and robot movements, enhancing task precision and adaptability.
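One way to picture how a depth camera yields 3D object positions is the pinhole camera model: a pixel and its depth reading are back-projected into a point in the camera frame. The sketch below is illustrative only. The `Intrinsics` class, the function names, and the numeric values are assumptions made for this example, not taken from any particular camera or vendor SDK.

```python
from dataclasses import dataclass
from typing import Iterable, Optional, Tuple

Point3D = Tuple[float, float, float]


@dataclass(frozen=True)
class Intrinsics:
    """Pinhole camera parameters in pixels (hypothetical values in the demo)."""
    fx: float  # focal length along x
    fy: float  # focal length along y
    cx: float  # principal point, x coordinate
    cy: float  # principal point, y coordinate


def backproject(u: float, v: float, depth_m: float, k: Intrinsics) -> Point3D:
    """Convert pixel (u, v) with depth in metres to a camera-frame point."""
    x = (u - k.cx) * depth_m / k.fx
    y = (v - k.cy) * depth_m / k.fy
    return (x, y, depth_m)


def object_centroid(pixels: Iterable[Tuple[float, float, float]],
                    k: Intrinsics) -> Optional[Point3D]:
    """Average the 3D points of an object's pixels, skipping invalid depth."""
    points = [backproject(u, v, d, k) for u, v, d in pixels if d > 0.0]
    if not points:
        return None
    n = len(points)
    return (sum(p[0] for p in points) / n,
            sum(p[1] for p in points) / n,
            sum(p[2] for p in points) / n)


if __name__ == "__main__":
    cam = Intrinsics(fx=500.0, fy=500.0, cx=320.0, cy=240.0)
    # Two valid pixels on an object and one pixel with missing depth (0.0).
    print(object_centroid([(320, 240, 1.0), (420, 240, 1.0), (0, 0, 0.0)], cam))
```

In a real pipeline the pixel list would come from an object detector or segmentation mask, and the resulting point would still need to be transformed from the camera frame into the robot's base frame.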

2.2. Balancing and Motion with IMU Sensors

Walking and balancing like a human is a complex control problem. Humanoid robots rely on Inertial Measurement Units (IMUs)—sensors combining accelerometers and gyroscopes—to monitor orientation and motion in real time. These sensors allow robots to:

- Detect tilts, rotations, and sudden perturbations

- Adjust joint torques quickly enough to prevent falls

- Maintain stability across uneven surfaces

IMUs act as a robot’s “inner ear,” continually informing AI algorithms about its physical state so balance and gait can be optimized on the fly.
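A common textbook way to combine the two IMU signals is a complementary filter: the gyroscope is trusted over short time spans, and the accelerometer's gravity reading slowly corrects the gyroscope's drift. The sketch below estimates a single pitch angle. It assumes a sensor whose x axis points forward and z axis points up, a pitch rate already signed to match the pitch angle, and a robot that is not accelerating strongly. The class name and the `alpha` value are illustrative choices, not parameters from any specific robot.

```python
import math


def accel_pitch(ax: float, az: float) -> float:
    """Pitch (rad) implied by gravity, assuming x forward, z up, near-static motion."""
    return math.atan2(ax, az)


class ComplementaryFilter:
    """Blend integrated gyro rate (short term) with accelerometer pitch (long term)."""

    def __init__(self, alpha: float = 0.98, pitch: float = 0.0) -> None:
        if not 0.0 <= alpha <= 1.0:
            raise ValueError("alpha must lie in [0, 1]")
        self.alpha = alpha  # weight given to the gyro-based prediction
        self.pitch = pitch  # current pitch estimate in radians

    def update(self, pitch_rate: float, ax: float, az: float, dt: float) -> float:
        """Advance the estimate by one sample of duration dt seconds."""
        gyro_prediction = self.pitch + pitch_rate * dt
        self.pitch = (self.alpha * gyro_prediction
                      + (1.0 - self.alpha) * accel_pitch(ax, az))
        return self.pitch


if __name__ == "__main__":
    f = ComplementaryFilter()
    # Stationary sensor tilted by 0.1 rad, with a small constant gyro bias.
    ax, az = 9.81 * math.sin(0.1), 9.81 * math.cos(0.1)
    for _ in range(1000):
        f.update(pitch_rate=0.01, ax=ax, az=az, dt=0.01)
    print(f.pitch)  # stays close to 0.1 instead of drifting with the bias
```

Integrating the biased gyroscope alone would drift steadily in this demo, while the accelerometer term keeps the estimate bounded. Production balance controllers typically use fuller state estimators, but the division of labour between the two sensors is the same.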

2.3. Touch and Force Sensing

Just as human hands feel texture and weight, humanoid robots need touch and force perception to manipulate objects with care. Force sensors and tactile skins embedded in robotic limbs and fingertips provide feedback on grip strength and contact forces. These sensors let robots:

- Adjust grasp strength dynamically

- Detect slip or deformation during manipulation

- Ensure precision in assembling parts or handling fragile items

Six‑axis force/torque sensors, for example, measure three force components and three torque components simultaneously, which is essential for delicate industrial tasks like tightening bolts or inserting components.
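To make dynamic grasp adjustment concrete, here is a minimal sketch based on the Coulomb friction model: an object starts to slip when the tangential force at a contact exceeds the friction coefficient times the normal (grip) force. The function names, the single-contact simplification, the choice of z as the contact normal, and the gain and safety factor are all assumptions made for illustration.

```python
import math
from typing import Tuple


def contact_forces(fx: float, fy: float, fz: float) -> Tuple[float, float]:
    """Split a force reading into (tangential, normal), assuming z is the contact normal."""
    return math.hypot(fx, fy), abs(fz)


def exceeds_friction_limit(tangential_n: float, normal_n: float, mu: float) -> bool:
    """True when the Coulomb model predicts slip at the current grip force."""
    return tangential_n > mu * normal_n


def required_grip_force(tangential_n: float, mu: float, safety: float = 1.5) -> float:
    """Normal force needed to hold the load, padded by a safety factor."""
    if mu <= 0.0:
        raise ValueError("friction coefficient must be positive")
    return safety * tangential_n / mu


def next_grip_force(current_n: float, tangential_n: float, mu: float,
                    max_n: float, gain: float = 0.5, safety: float = 1.5) -> float:
    """Move the grip force a fraction of the way toward the capped target."""
    target = min(required_grip_force(tangential_n, mu, safety), max_n)
    return current_n + gain * (target - current_n)


if __name__ == "__main__":
    tangential, normal = contact_forces(3.0, 0.0, -5.0)
    print(exceeds_friction_limit(tangential, normal, mu=0.5))
    print(next_grip_force(normal, tangential, mu=0.5, max_n=20.0))
```

The `max_n` cap stands in for the limit a fragile part can tolerate. A real gripper would also estimate the friction coefficient rather than assume it, and tactile skins can detect incipient slip directly from vibration instead of relying on a friction model.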

3. AI: The Brain Behind the Brawn

Sensors provide raw data, but AI makes sense of it. Modern humanoid robots use advanced AI algorithms to interpret sensor data, plan actions, and learn from experience.

3.1. Perception Through Machine Learning

Raw sensor data—images, motion readings, contact forces—are noisy and complex. AI systems use machine learning to extract meaningful information:

- Computer vision models classify and localize objects.

- Sensor fusion algorithms integrate multiple inputs (visual, inertial, tactile) to form a coherent environmental understanding.

- 3D mapping and localization systems help robots build internal models of industrial spaces.

This learned perception lets robots cope with variations in lighting, object appearance, and task conditions that rigidly programmed systems struggle to handle reliably.
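The sensor fusion idea listed above can be illustrated in its simplest form: two noisy estimates of the same quantity, say the distance to a workpiece from a camera and from a second sensor, are combined by weighting each with the inverse of its variance. This sketch assumes independent, roughly Gaussian errors. The function name and the numbers are illustrative.

```python
from typing import Tuple

Estimate = Tuple[float, float]  # (value, variance)


def fuse(a: Estimate, b: Estimate) -> Estimate:
    """Inverse-variance weighted fusion of two independent estimates."""
    (xa, va), (xb, vb) = a, b
    if va <= 0.0 or vb <= 0.0:
        raise ValueError("variances must be positive")
    wa, wb = 1.0 / va, 1.0 / vb          # more certain sensors get more weight
    value = (wa * xa + wb * xb) / (wa + wb)
    variance = 1.0 / (wa + wb)           # never larger than either input variance
    return value, variance


if __name__ == "__main__":
    camera = (2.0, 0.04)   # metres, variance in m^2 (hypothetical)
    lidar = (2.2, 0.01)    # the more precise sensor in this made-up example
    print(fuse(camera, lidar))
```

The fused value lands closer to the more precise sensor, and the fused variance is smaller than either input. This is the same arithmetic as the measurement update of a Kalman filter for a static scalar quantity. Fusing visual, inertial, and tactile data on a real robot involves higher-dimensional versions of the same principle.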

3.2. Decision Making with Reinforcement Learning and Planning

Once a robot perceives its environment, it must decide what to do next. Here, decision‑level AI shines:

- Reinforcement learning (RL) lets robots learn effective strategies by trial and error, guided by a reward signal.

- Motion planning algorithms compute safe, efficient trajectories for walking or arm movement.

- Generative AI, such as large language models, lets robots interpret natural‑language instructions and high‑level task descriptions and convert them into actionable steps.

Reinforcement learning, in particular, has been used to train robots to perform complex motions, such as coordinated manipulation or dynamic balancing, in simulated environments before deployment on physical hardware.
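The trial-and-error loop behind reinforcement learning can be shown with tabular Q-learning on a toy problem: an agent in a five-cell corridor learns to walk toward a goal at the far end. This is a deliberately tiny sketch. The corridor, rewards, and hyperparameters are invented for illustration, and training a humanoid in simulation uses far larger state spaces and neural-network policies rather than a lookup table.

```python
import random
from typing import List, Tuple

N_STATES = 5          # corridor cells 0..4; cell 4 is the goal
ACTIONS = (-1, +1)    # index 0 = step left, index 1 = step right


def step(state: int, action_idx: int) -> Tuple[int, float, bool]:
    """Deterministic toy dynamics: -1 reward per move, episode ends at the goal."""
    nxt = min(max(state + ACTIONS[action_idx], 0), N_STATES - 1)
    return nxt, -1.0, nxt == N_STATES - 1


def greedy(q_row: List[float]) -> int:
    """Index of the action with the highest estimated value."""
    return max(range(len(ACTIONS)), key=lambda a: q_row[a])


def train(episodes: int = 500, alpha: float = 0.5, gamma: float = 0.9,
          epsilon: float = 0.1, seed: int = 0) -> List[List[float]]:
    """Learn a Q-table with epsilon-greedy exploration."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _episode in range(episodes):
        state = 0
        for _t in range(100):  # cap episode length
            if rng.random() < epsilon:
                action = rng.randrange(len(ACTIONS))   # explore
            else:
                action = greedy(q[state])              # exploit
            nxt, reward, done = step(state, action)
            # Q-learning target: reward plus discounted best value of next state.
            target = reward if done else reward + gamma * max(q[nxt])
            q[state][action] += alpha * (target - q[state][action])
            state = nxt
            if done:
                break
    return q


if __name__ == "__main__":
    table = train()
    print([greedy(table[s]) for s in range(N_STATES - 1)])
```

With these settings the learned greedy policy should choose "step right" in every non-goal cell, since that minimizes the accumulated step penalty. The agent is never told this rule and discovers it from rewards alone.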

3.3. Adaptation and Continuous Learning

In a real factory, conditions change: new parts are introduced, workers reconfigure layouts, and unexpected obstacles arise. AI allows humanoid robots to:

- Generalize learned behaviors to novel scenarios

- Adapt motion plans based on real‑time feedback

- Optimize task workflows for efficiency

Through continuous learning, robots can become faster, safer, and more dependable over time.

4. AI + Sensors in Action: Industrial Use Cases

Let’s look at how this combination plays out in actual industrial scenarios.

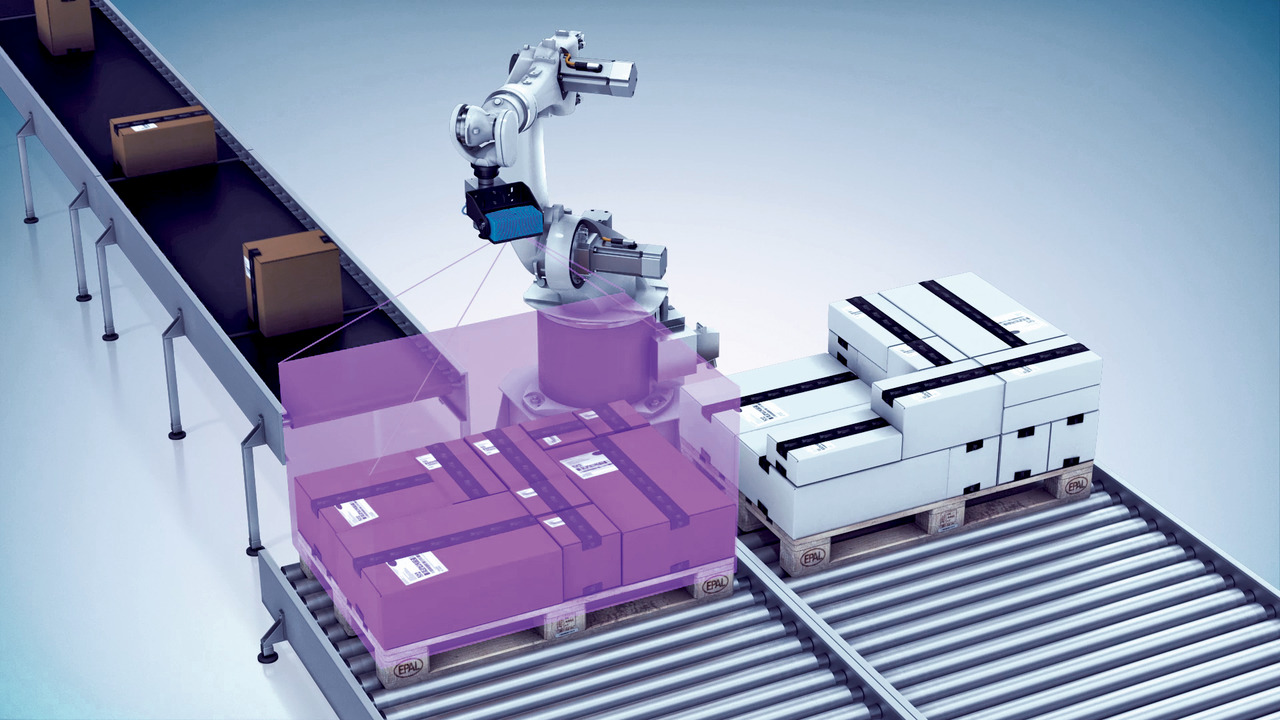

4.1. Logistics and Material Handling

In warehouses and manufacturing plants, humanoid robots equipped with vision and tactile sensors can:

- Scan shelves and locate items

- Pick parts from bins with soft grip control

- Load and unload conveyor belts

- Navigate crowded aisles while avoiding collisions

AI algorithms help the robot perform these tasks quickly and adapt its approach, whether it is handling a familiar object or a new type of part.
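Navigating aisles while avoiding collisions is, at its simplest, a path search over a map of free and blocked cells. The sketch below uses breadth-first search on a small occupancy grid, which finds a shortest route in number of steps on a 4-connected grid. It is a stand-in chosen for brevity, not a claim about what any deployed robot uses. The grid layout and function name are made up for the example.

```python
from collections import deque
from typing import Dict, List, Optional, Sequence, Tuple

Cell = Tuple[int, int]  # (row, column)


def shortest_path(grid: Sequence[Sequence[int]], start: Cell,
                  goal: Cell) -> Optional[List[Cell]]:
    """Breadth-first search over free cells (0 = free, 1 = blocked)."""
    rows, cols = len(grid), len(grid[0])
    parent: Dict[Cell, Optional[Cell]] = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk parent links back to the start, then reverse.
            path: List[Cell] = []
            node: Optional[Cell] = cell
            while node is not None:
                path.append(node)
                node = parent[node]
            return path[::-1]
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and grid[nr][nc] == 0 and (nr, nc) not in parent:
                parent[(nr, nc)] = cell
                queue.append((nr, nc))
    return None  # goal unreachable


if __name__ == "__main__":
    warehouse = [
        [0, 0, 0, 0],
        [1, 1, 1, 0],   # a shelf row blocking the direct route
        [0, 0, 0, 0],
    ]
    print(shortest_path(warehouse, (0, 0), (2, 0)))
```

When a person or pallet appears, the corresponding cells are marked as blocked and the search is rerun. Practical planners add costs, robot footprint, and motion constraints on top of this basic idea.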

4.2. Assembly and Quality Assurance

Assembly lines traditionally used rigid, fixed robots. Humanoid robots break this mold by operating flexibly across different stations. They can:

- Tighten fasteners with precise torque control

- Install components requiring delicate alignment

- Inspect finished products and flag defects using AI vision models

Robots can even adjust their actions based on detected deviations in part placement or orientation.

4.3. Maintenance and Inspection

Equipped with vision and force sensing, humanoid robots can inspect equipment in hard‑to‑reach or hazardous locations. AI assists by:

- Recognizing wear patterns or anomalies

- Planning safe routes through machinery

- Communicating findings to human supervisors

These robots not only reduce the risk for human workers but also help catch issues before costly downtime occurs.

4.4. Collaboration with Human Workers

Humanoid robots are not replacements for humans—at least not yet—but collaborators. They can:

- Share workspaces safely, using sensors to detect human presence

- Adjust actions to avoid interfering with humans

- Learn from human demonstrations, mimicking expert techniques

AI plays a key role in interpreting shared context and predicting human movement for smooth co‑working.
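One simple way to adjust actions around people is to scale the robot's allowed speed by its distance to the nearest detected human: full speed when far away, a linear slowdown when closer, and a stop inside a protective zone. The thresholds and function names below are hypothetical and are not drawn from any safety standard. A real deployment would derive them from the applicable standards and enforce them on safety-rated hardware.

```python
def speed_limit(distance_m: float, stop_m: float = 0.5, slow_m: float = 2.0,
                max_speed: float = 1.0) -> float:
    """Allowed speed (m/s) given the distance to the nearest detected person."""
    if not 0.0 <= stop_m < slow_m:
        raise ValueError("require 0 <= stop_m < slow_m")
    if distance_m <= stop_m:
        return 0.0                      # inside the protective zone: stop
    if distance_m >= slow_m:
        return max_speed                # nobody nearby: full speed
    # Linear ramp between the stop distance and the slow-down distance.
    return max_speed * (distance_m - stop_m) / (slow_m - stop_m)


def limit_command(velocity: float, distance_m: float) -> float:
    """Clamp a signed velocity command to the current speed limit."""
    limit = speed_limit(distance_m)
    return max(-limit, min(velocity, limit))


if __name__ == "__main__":
    for d in (0.3, 1.25, 2.5):
        print(d, speed_limit(d))
```

Predicting where a person is about to move, as described above, lets the robot slow down earlier than a purely distance-based rule like this one would.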

5. Challenges and Future Directions

Despite these advancements, integrating AI and sensors into industrial humanoid robots still faces challenges:

- Computation and Power: AI inference and sensor processing must run in real time on onboard computers, which strains both compute capacity and battery life.

- Real‑World Learning: Behaviors trained in simulation must transfer reliably to physical hardware, where friction, sensor noise, and wear differ from the simulated model.

- Safety and Trust: Robots working near humans need robust safety controls and predictable behavior.

- Cost and Reliability: Industrial robots must operate continuously under harsh conditions, and at a cost that justifies deploying them.

Solutions are emerging, from cloud‑native AI architectures and specialized hardware to better safety verification standards and predictive maintenance tools.

6. Conclusion

It is the combination of AI and sensors that enables humanoid robots to perform well in industrial use. Sensors give robots their “senses,” delivering rich, continuous streams of environmental data. AI turns this sensory input into understanding, decision‑making, and intelligent action, allowing robots to adapt, learn, and work safely alongside humans.

This combination is moving humanoid robots from research labs into real factories and warehouses, bringing smarter automation, improved productivity, and new forms of human‑machine collaboration. The journey is well underway, and its pace suggests that future factories may increasingly be staffed by perceptive, capable robotic colleagues empowered by AI and sensors.