From science fiction to cutting‑edge labs around the world, the idea of humanoid robots learning directly from human demonstrations is no longer pure fantasy. Over the past decade, robotics research has shifted from simple pre‑programmed sequences toward learning that resembles our own: observing, generalizing, and adapting. Researchers now ask not just whether humanoid robots can learn from human demonstrations, but how they can do so efficiently, robustly, and safely. The stakes are high: mastering this capability could transform automation, caregiving, industry, and daily life. This article explores the state‑of‑the‑art science behind this vision, the underlying technologies, key challenges, ethical considerations, and the road ahead.

A New Paradigm: From Programming to Demonstration

Traditional robots follow rigid instructions: programmed tasks executed in fixed environments. Teaching such systems requires a specialist, a programmer with robotics expertise who defines every motion and condition. While effective for repetitive industrial processes, this approach breaks down in dynamic or unstructured settings, especially those involving complex human activities.

Contrast this with how humans learn. From infancy, we learn by watching others, imitating actions, inferring goals, and applying those skills in new contexts. The idea of transferring this human style of learning to machines is seductive and powerful, and it underpins a field known as Learning from Demonstration (LfD). In LfD, robots observe human behavior and try to extract patterns of motion, intention, and context so they can replicate and adapt those behaviors autonomously.

Humanoid robots, machines designed to mimic the human body with two legs, two arms, and a head, are natural candidates for demonstration learning, in part because of their physical resemblance to humans. In theory, this shared body plan makes mapping human actions onto robots more intuitive. In practice, the remaining differences in embodiment introduce deep technical complexity that researchers are actively working to solve.

The Technological Building Blocks

A humanoid robot learning from human demonstrations is not one technology, but a synergy of advances in sensing, perception, learning, and control. Below are the essential components:

1. Sensing and Perception Systems

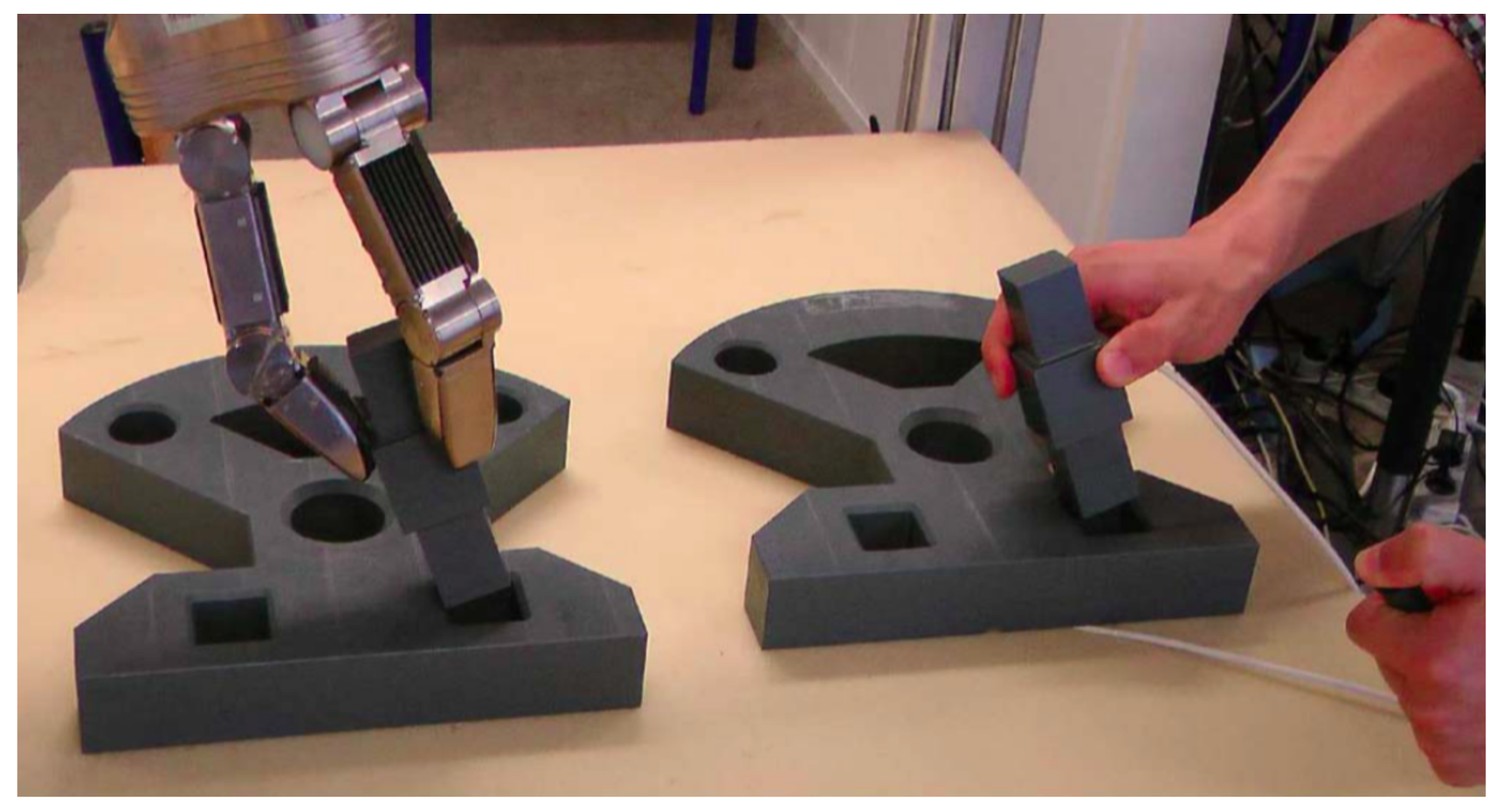

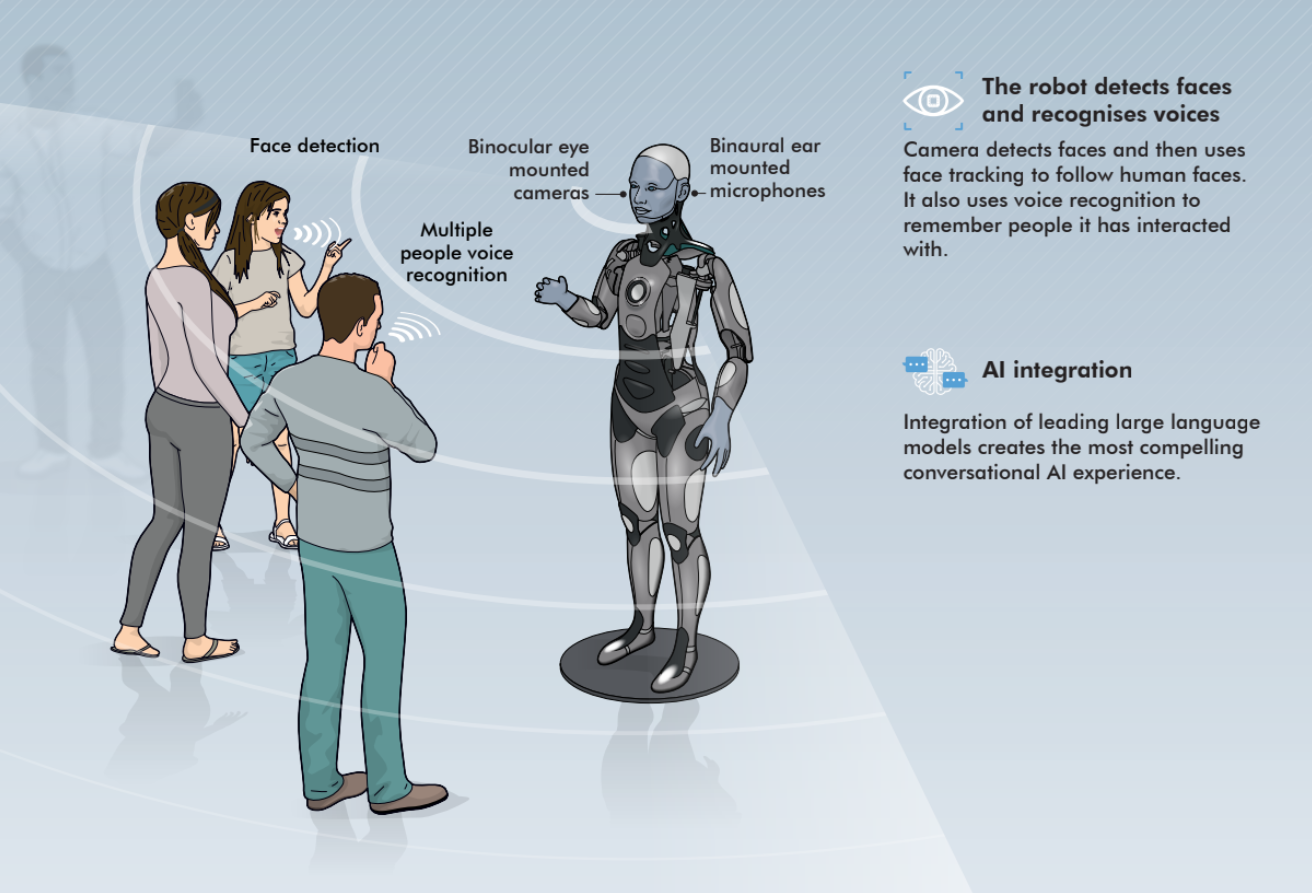

Humanoid robots must see and understand what humans demonstrate. This means integrating an array of sensors—cameras, depth sensors, inertial measurement units (IMUs), tactile grids, and sometimes wearable devices on the human demonstrator. These sensors capture motion, object interactions, spatial context, and sometimes subtle cues like force and pressure. In advanced research setups, wearable gloves embedded with multiple sensors help the robot perceive detailed grasping and manipulation demonstrations.

Perception systems use computer vision and machine learning to convert raw sensor inputs into structured representations: this is a cup, this is a spoon, this is the trajectory of a hand moving toward an object. The better robots understand the context and intent behind actions, the more effectively they can generalize from demonstrations.
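To make the idea of a "structured representation" concrete, here is a minimal sketch of the very last step of such a pipeline. It assumes that earlier stages have already produced a tracked hand trajectory and labeled object positions; the function names, the dictionary layout, and the coordinates are all hypothetical, chosen only for illustration.

```python
import math

def nearest_object(point, objects):
    """Name of the labeled object closest to a point."""
    return min(objects, key=lambda name: math.dist(point, objects[name]))

def describe_reach(hand_track, objects):
    """Summarize a hand trajectory as a structured 'reach' description.

    hand_track: list of (x, y) hand positions over time (assumed already tracked).
    objects: dict mapping object labels to (x, y) positions (assumed already detected).
    """
    target = nearest_object(hand_track[-1], objects)
    start = math.dist(hand_track[0], objects[target])
    end = math.dist(hand_track[-1], objects[target])
    return {"target": target, "approaching": end < start, "final_distance": end}

# Hypothetical scene: a cup and a spoon on a table, hand moving toward the cup.
scene = {"cup": (1.0, 0.0), "spoon": (0.0, 1.0)}
track = [(0.0, 0.0), (0.5, 0.0), (0.9, 0.0)]
summary = describe_reach(track, scene)
print(summary["target"], summary["approaching"])
```

Real perception stacks infer far richer context than "which object did the hand end up near", but the output has the same flavor: raw coordinates go in, and a compact description of the action comes out.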

2. Human‑to‑Robot Mapping

Once a robot sees what a human does, the next step is linking human motion to robot motion. This is deceptively hard. Humans and robots have different joint limits, proportions, and motion dynamics. A human shoulder rotates differently than a robotic shoulder; the fingers on a robot may lack the full dexterity of a human hand.

Researchers mitigate this “embodiment gap” with techniques such as inverse kinematics, motion retargeting, and unified state‑action representations. A popular recent strategy is to use a unified policy architecture that can interpret human demonstrations by projecting both human and robot motions into a shared representation, and then mapping that back into robot actions. These approaches have shown promising results in improving generalization across diverse tasks and robot platforms.
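A toy version of retargeting plus inverse kinematics can be sketched for a planar two-link arm. This is an illustration under strong assumptions, not how any particular humanoid does it: the arm is reduced to two rigid links in a plane, the human wrist position is simply scaled by the ratio of arm lengths, and all function names and link lengths are made up for the example.

```python
import math

def retarget_wrist(human_wrist, human_arm_len, robot_arm_len):
    """Scale a human wrist position (relative to the shoulder) to the robot's reach."""
    scale = robot_arm_len / human_arm_len
    return (human_wrist[0] * scale, human_wrist[1] * scale)

def two_link_ik(x, y, l1, l2):
    """Analytic inverse kinematics for a planar 2-link arm.

    Returns (shoulder, elbow) joint angles in radians for one of the two
    possible elbow configurations.
    """
    cos_elbow = (x * x + y * y - l1 * l1 - l2 * l2) / (2 * l1 * l2)
    # Clamp so out-of-reach targets yield a fully extended (or fully folded) arm.
    cos_elbow = max(-1.0, min(1.0, cos_elbow))
    elbow = math.acos(cos_elbow)
    shoulder = math.atan2(y, x) - math.atan2(l2 * math.sin(elbow),
                                             l1 + l2 * math.cos(elbow))
    return shoulder, elbow

def forward_kinematics(shoulder, elbow, l1, l2):
    """Wrist position reached by the given joint angles."""
    x = l1 * math.cos(shoulder) + l2 * math.cos(shoulder + elbow)
    y = l1 * math.sin(shoulder) + l2 * math.sin(shoulder + elbow)
    return x, y

# Hypothetical numbers: a 0.7 m human arm, a robot arm with 0.3 m and 0.2 m links.
target = retarget_wrist((0.4, 0.3), human_arm_len=0.7, robot_arm_len=0.5)
angles = two_link_ik(target[0], target[1], 0.3, 0.2)
reached = forward_kinematics(angles[0], angles[1], 0.3, 0.2)
```

Running the joint angles back through forward kinematics should land on the scaled target, which is a useful sanity check. The sketch also hints at why the problem is hard: uniform scaling ignores joint limits, differing proportions, and dynamics, which is exactly where learned shared representations come in.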

3. Imitation and Reinforcement Learning

At the heart of demonstration learning are machine learning models that can extract meaningful patterns from data. Two core approaches dominate:

- Imitation Learning: The robot tries to mimic the behavior observed in human demonstrations. The simplest form, behavior cloning, treats this as supervised learning: a policy is trained to reproduce the actions recorded in demonstration trajectories.

- Reinforcement Learning (RL): The robot explores and receives feedback (rewards or penalties) to improve performance. When combined with demonstration data, RL helps robots refine their abilities beyond mere mimicry toward goal‑oriented mastery.

Some frameworks even blend these approaches, using imitation learning to bootstrap a policy and reinforcement learning to refine it.
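The bootstrap-then-refine pattern can be shown on a deliberately tiny problem. In the sketch below, everything is an assumption made for illustration: the task is a one-dimensional "move to the goal" problem, the policy is a single gain `k`, the demonstrator is overly cautious, and a simple random-search hill climber stands in for a full reinforcement learning algorithm.

```python
import random

def behavior_clone(demos):
    """Least-squares fit of a linear policy a = k * s to (state, action) pairs."""
    num = sum(s * a for s, a in demos)
    den = sum(s * s for s, _ in demos)
    return num / den

def episode_return(k, start_error=1.0, steps=10):
    """Roll out the policy; reward is the negative accumulated distance to the goal."""
    error, total = start_error, 0.0
    for _ in range(steps):
        error -= k * error      # take action a = k * error, which shrinks the error
        total -= abs(error)
    return total

def refine(k, iterations=200, noise=0.05, seed=0):
    """Hill climbing: keep a perturbed gain only if it earns a higher return."""
    rng = random.Random(seed)
    best = episode_return(k)
    for _ in range(iterations):
        candidate = k + rng.gauss(0.0, noise)
        score = episode_return(candidate)
        if score > best:
            k, best = candidate, score
    return k

# A cautious demonstrator who only ever closes half the remaining gap.
demos = [(s, 0.5 * s) for s in (-1.0, -0.5, 0.5, 1.0)]
k_bc = behavior_clone(demos)   # imitation: reproduces the demonstrator's gain
k_rl = refine(k_bc)            # refinement: reward feedback pushes past the demonstrator
```

Cloning alone can only be as good as the demonstrations, so `k_bc` inherits the demonstrator's caution. The reward-driven refinement step then moves the gain toward the value that reaches the goal fastest, which is the sense in which the combination goes "beyond mere mimicry".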

4. Teleoperation and Hybrid Training

In many systems, human experts demonstrate tasks by teleoperating the robot, either through a virtual reality (VR) interface or through motion capture. Because the demonstration is performed on the robot itself, the recorded trajectories are directly usable, but collecting them requires significant resources and expertise. Newer methods aim to reduce this burden by training robots with fewer demonstrations and augmenting human data with simulation or autonomous exploration.

Major Research Breakthroughs

Over the last few years, multiple research breakthroughs have nudged humanoid demonstration learning toward reality:

Cross‑Embodiment Skill Transfer

One significant breakthrough leverages cross‑embodiment learning, where robots learn behavior primitives from humans and then retarget those skills to different robot architectures. One such framework decomposes complex actions into modular components and dynamically coordinates them to generalize across robots with different morphologies. This reduces the need for retraining from scratch for every new robot platform.

Real‑Time Interaction Models

New hierarchical frameworks enable humanoid robots to react to human instruction and interruption in real time. These architectures decouple high‑level intent planning from low‑level motion execution, allowing robots to interpret nuanced human signals like gestures or verbal cues and adjust behavior dynamically.
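The decoupling described above can be sketched as two small functions running in one control loop: a high-level planner that turns human signals into goals, and a low-level controller that moves toward whatever goal is current. The signal names, goal positions, and one-dimensional robot are hypothetical simplifications; real systems would use learned models at both levels.

```python
from dataclasses import dataclass

@dataclass
class Robot:
    position: float = 0.0

def plan_intent(signal, current_goal):
    """High level: map a human signal to a goal position (None means 'hold still')."""
    goals = {"go_to_table": 5.0, "go_to_shelf": -3.0}   # hypothetical commands
    if signal == "stop":
        return None
    return goals.get(signal, current_goal)   # unknown signals keep the current goal

def step_motion(robot, goal, max_step=1.0):
    """Low level: move toward the goal by at most max_step per control tick."""
    if goal is None:
        return
    delta = goal - robot.position
    robot.position += max(-max_step, min(max_step, delta))

def run(signals):
    """One entry per control tick; None means no new human input on that tick."""
    robot, goal, trace = Robot(), None, []
    for signal in signals:
        if signal is not None:
            goal = plan_intent(signal, goal)   # an interruption replaces the goal at once
        step_motion(robot, goal)
        trace.append(robot.position)
    return trace

trace = run(["go_to_table", None, None, "go_to_shelf", None, "stop", None])
print(trace)
```

Because the low-level controller re-reads the goal on every tick, a new instruction takes effect on the very next step without the motion code needing to know anything about gestures or speech. That separation is the design choice the hierarchical frameworks rely on.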

Video‑Based Demonstration Learning at Scale

Rather than relying on carefully curated demonstrations in labs, researchers are now exploring the use of vast collections of human videos as training data. Models trained on millions of human action clips from the internet can convert natural‑language commands into movement policies, broadening the range of tasks robots can attempt.

Challenges on the Path to Learning from Demonstrations

Despite rapid progress, significant hurdles remain.

The Embodiment Gap

Even with advanced retargeting, the mismatch between human and robot bodies means that not all human movements translate directly. Robots need to interpret what matters — which aspects of a motion are essential for task success and which are incidental.
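One heuristic for separating the essential from the incidental is to compare several demonstrations of the same task: where they agree closely, the motion is probably constrained by the task, and where they vary, the robot likely has freedom. The sketch below applies that idea to made-up one-dimensional trajectories; the threshold and the data are assumptions, and this is only one of several ways to approach the problem.

```python
from statistics import pvariance

def essential_steps(demos, threshold=0.01):
    """Indices of time steps where the demonstrations agree closely (low variance)."""
    steps = zip(*demos)   # group the value at each time step across demonstrations
    return [t for t, values in enumerate(steps) if pvariance(values) < threshold]

# Three hypothetical demonstrations: same start and end, different paths between.
demos = [
    [0.0, 0.3, 0.9, 1.0],
    [0.0, 0.6, 0.4, 1.0],
    [0.0, 0.1, 0.7, 1.0],
]
print(essential_steps(demos))   # → [0, 3]
```

Here the start and end points are flagged as essential while the path between them is not, so a robot with different limb proportions would be free to take its own route as long as it respects those two constraints.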

Data Efficiency

Humans can learn complex tasks from very few examples; robots often require far more. Reducing demonstration requirements — ideally to just a few instances — is a central research goal. Some systems aim to use as few as three to five demonstrations per task while yielding robust performance.

Generalization and Transfer Learning

Learning a specific task in a controlled environment is one thing; scaling that capability to diverse real‑world contexts is quite another. Robots must generalize to new objects, environments, and variations in human style, which current models can struggle with.

Real‑World Interaction Complexity

Human environments are messy — objects vary in size, shape, and purpose; lighting changes; humans move unpredictably. Teaching a robot to function reliably outside controlled lab conditions remains a formidable challenge.

Human Teachers in the Loop

Interestingly, research shows that the learning process affects human teachers too. A recent study on robot learning found that human participants alter their demonstration style and expectations depending on whether the robot they are teaching succeeds or fails. This feedback loop suggests that future robot training will be a co‑adaptive process, where human and machine continuously shape each other’s behavior.

Teaching a robot effectively may become a specialized skill of its own, one that improves with practice and feedback even for novice users. Indeed, some experimental frameworks suggest that training non‑expert users to provide better demonstrations substantially improves how efficiently the robot learns.

Applications Across Sectors

If humanoid robots can learn from demonstrations reliably, the potential applications are staggering:

Industrial Automation

Humanoid robots could take on tasks that vary by context or environment, adapting on the fly without explicit reprogramming. This could revolutionize warehouses, manufacturing, and logistics.

Healthcare and Caregiving

Robots capable of learning from human caregiver demonstrations could assist in elder care, rehabilitation, and physical therapy. Imagine a robot observing a nurse demonstrate how to help a patient with mobility exercises and then replicating those supportive actions.

Homes and Services

Domestic robots could observe how humans perform daily chores and then assist or autonomously carry out those routines.

Education and Skill Transfer

Humanoid robots could act as tutors or teaching assistants by learning pedagogical routines from human educators and personalizing interactions with students.

Ethical, Social, and Economic Considerations

As humanoid robots learn more like humans, complex ethical issues arise:

- Responsibility: Who is accountable when a robot trained from human demonstrations makes a mistake?

- Trust: Users must trust robots to act safely and predictably, especially when robots learn from loosely controlled human examples.

- Labor and Economy: Robots capable of learning complex tasks could displace jobs, but they could also enable new forms of labor and productivity.

- Privacy: Collecting human demonstrations—especially video data—raises serious privacy concerns.

Addressing these concerns requires interdisciplinary collaboration among engineers, ethicists, policymakers, and the public.

The Road Ahead: Toward Truly Autonomous Learning

Will humanoid robots eventually learn from human demonstrations the way humans learn from each other? The answer increasingly leans toward yes, but with important caveats. Research trends point to hybrid systems in which demonstration learning is complemented by autonomous exploration, simulation pre‑training, large video datasets, and real‑time human feedback loops. Companies are investing in advanced AI, and new hardware platforms designed specifically to interpret human instructional signals are emerging.

However, full autonomy is not yet here. Some advanced systems are even reducing their reliance on human demonstrations by having robots learn from their own experience or from video they capture themselves. Combined with generative AI tools that convert language and visual inputs into actionable policies, the frontier of learning for humanoid robots continues to expand rapidly.

Conclusion

Teaching humanoid robots to learn from human demonstrations sits at one of the most exciting intersections of robotics, artificial intelligence, and human‑machine interaction. The journey from scripted automation to adaptive, demonstration‑based learning is well underway, powered by advances in perception, machine learning, control theory, and behavioral science.

While challenges remain, each year brings fresh breakthroughs — from simulation‑to‑real transfer techniques to massive video datasets that teach robots new skills from everyday human actions. This is not a distant sci‑fi fantasy but an active scientific reality with profound implications for labor, society, ethics, and the future of work. The robots of tomorrow may very well learn from us — not by programming, but by example.